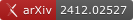

Multimodal Foundation Models for Scientific Data

Deployment in Astrophysics

François Lanusse

National Center for Scientific Research (CNRS)

Polymathic AI

Methodologies and practices that will transfer across domains

Why a Dedicated Effort?

Transposing these methodologies to scientific data and problems brings unique challenges

- Scientific Data is Complex and Diverse

- Impacts data collection

- Requires dedicated architectures

- Adoption by Scientists Requires Flexible Models and Quantitative Methods

Data Challenge

The Challenges of Scientific Data

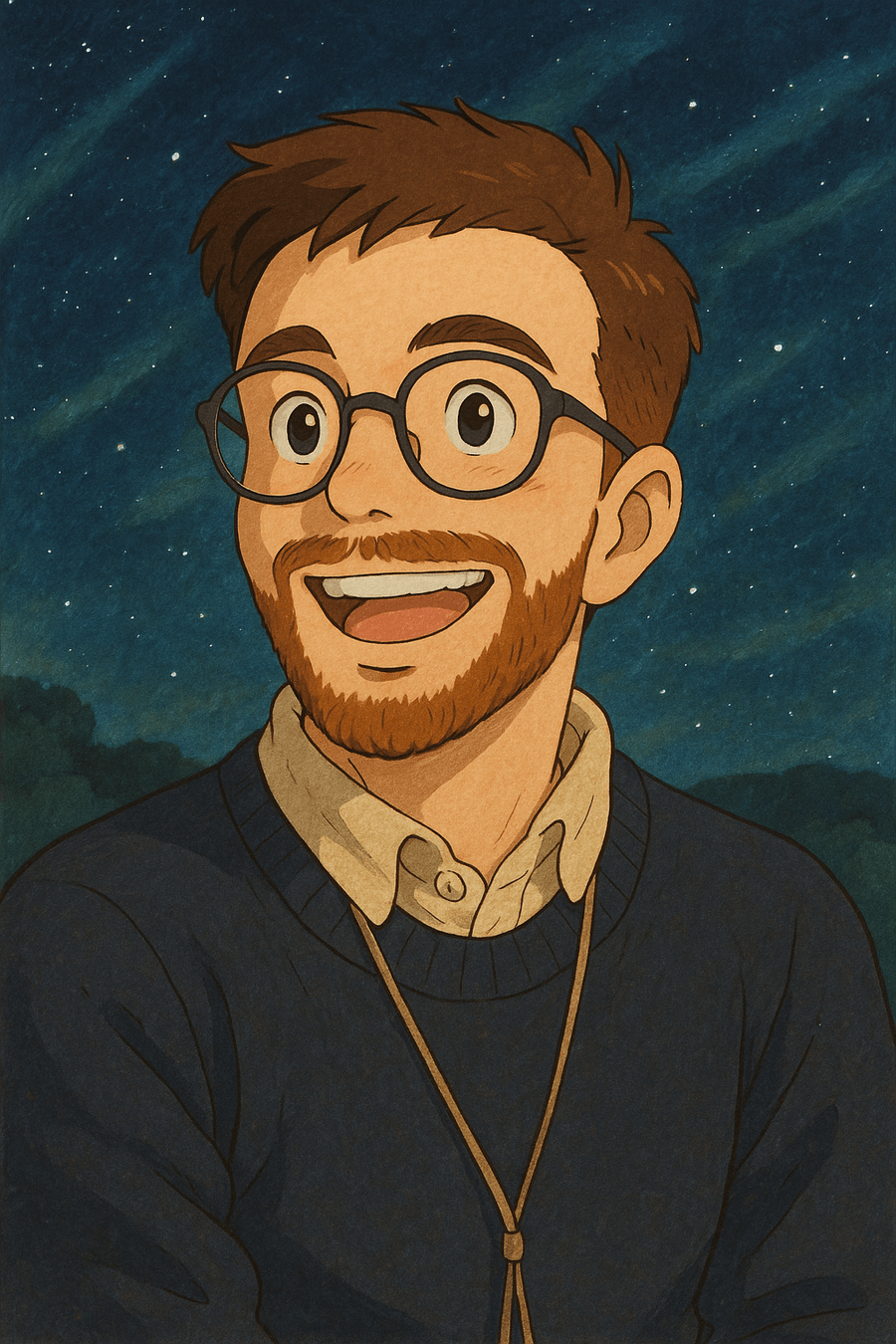

- The success of recent foundation models is driven by large corpora of uniform data (e.g. LAION-5B).

- Scientific data comes with many additional challenges:

- Metadata matters

- Wide variety of measurements/observations

- Accessing and formatting data requires very specific expertise

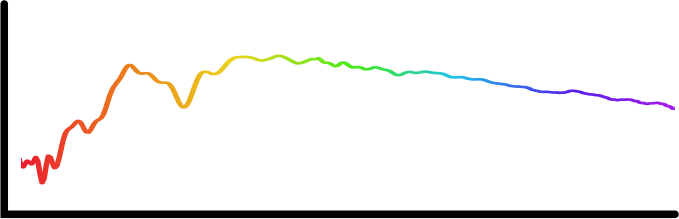

Credit: Melchior et al. 2021

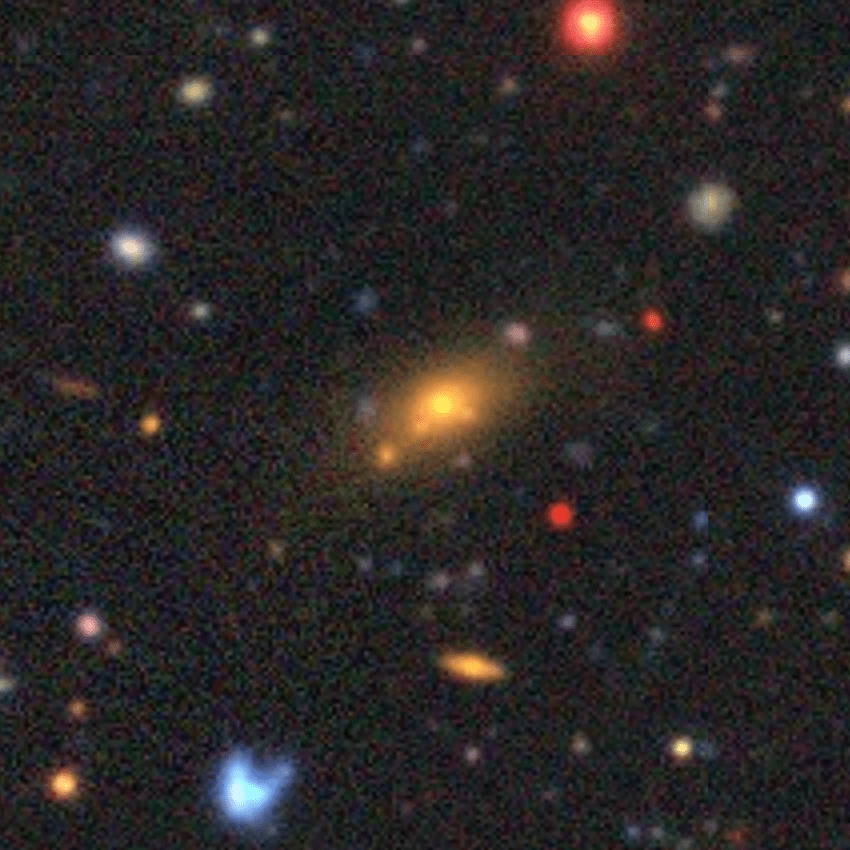

Credit: DESI collaboration/DESI Legacy Imaging Surveys/LBNL/DOE & KPNO/CTIO/NOIRLab/NSF/AURA/unWISE

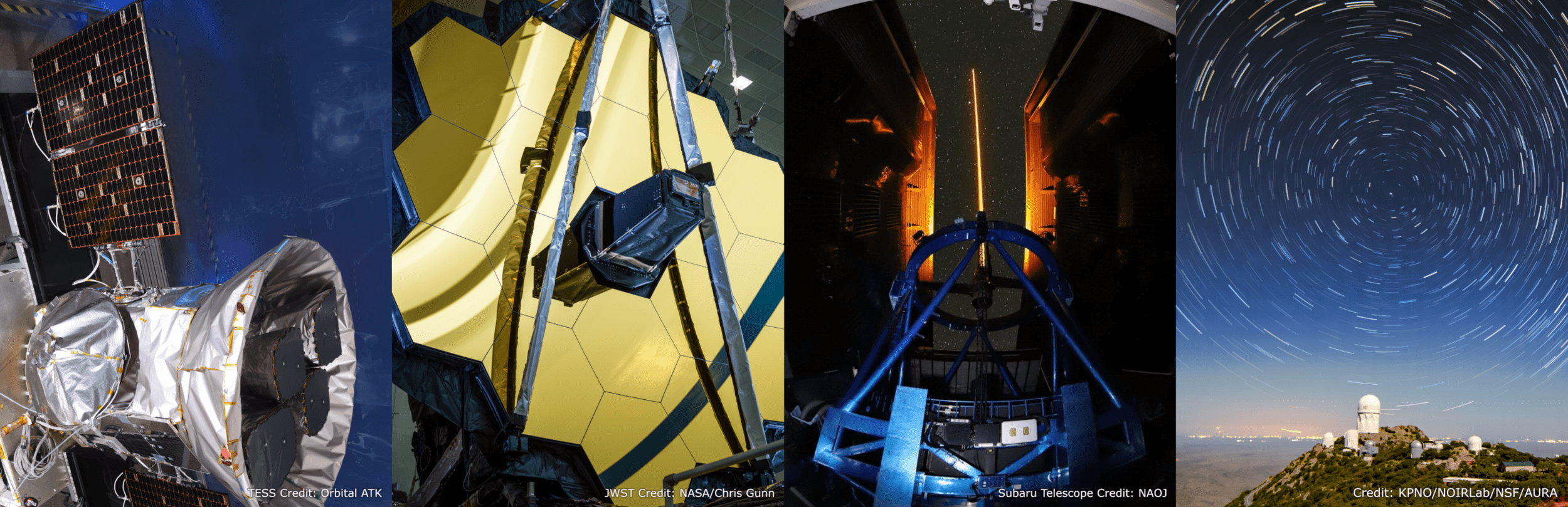

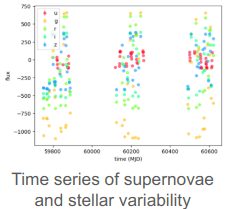

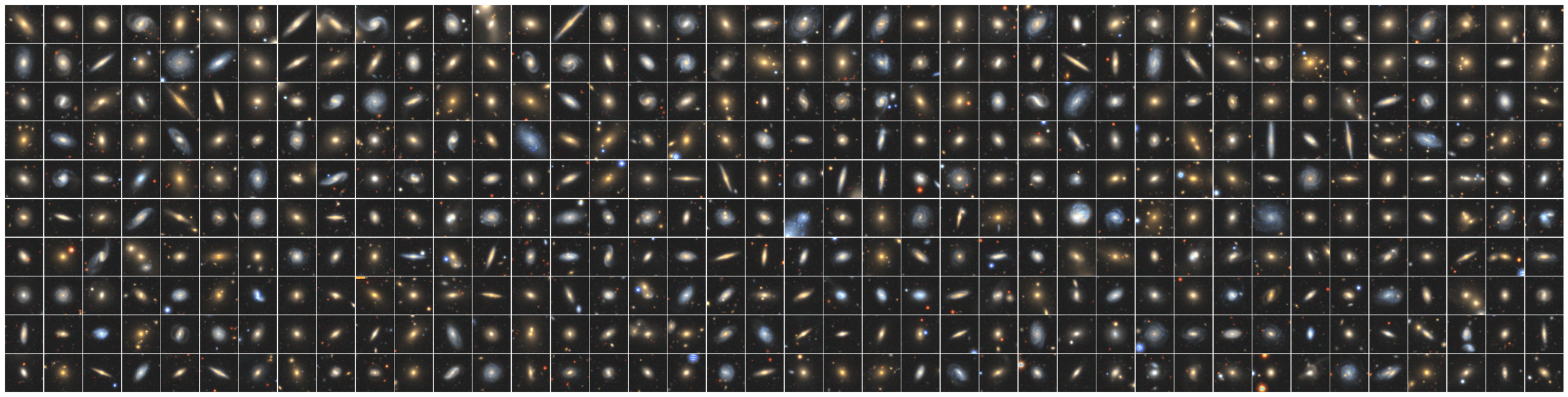

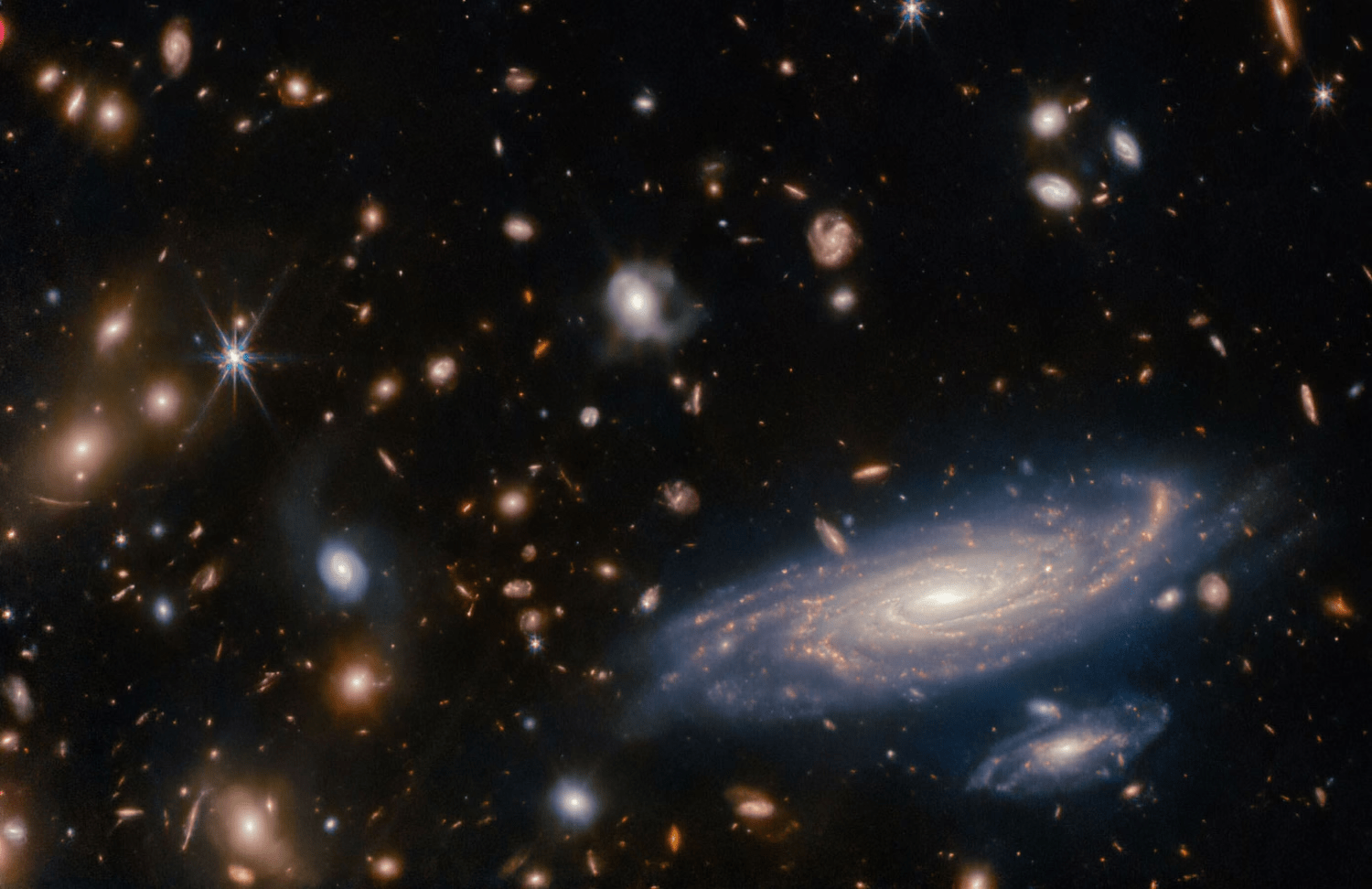

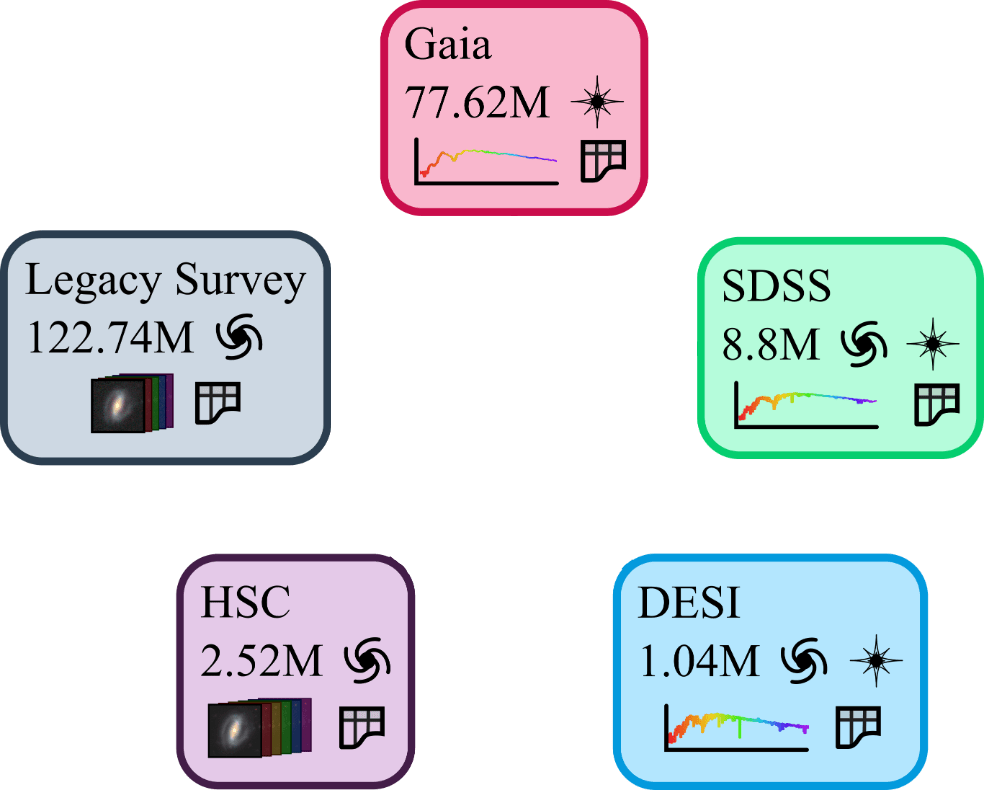

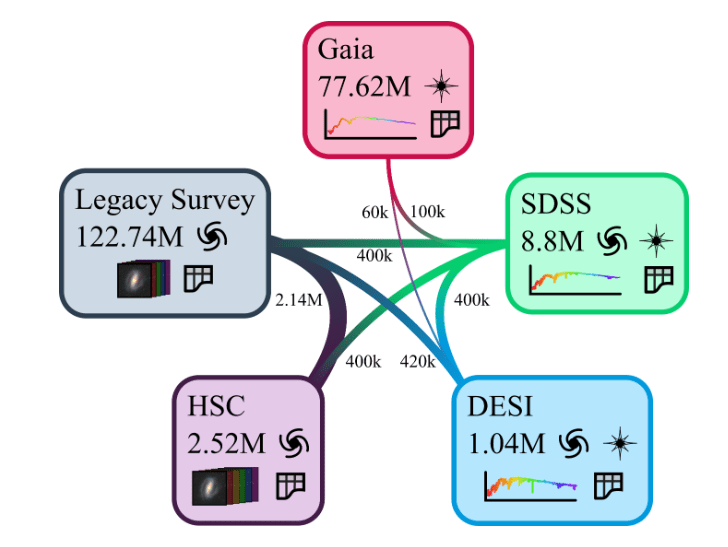

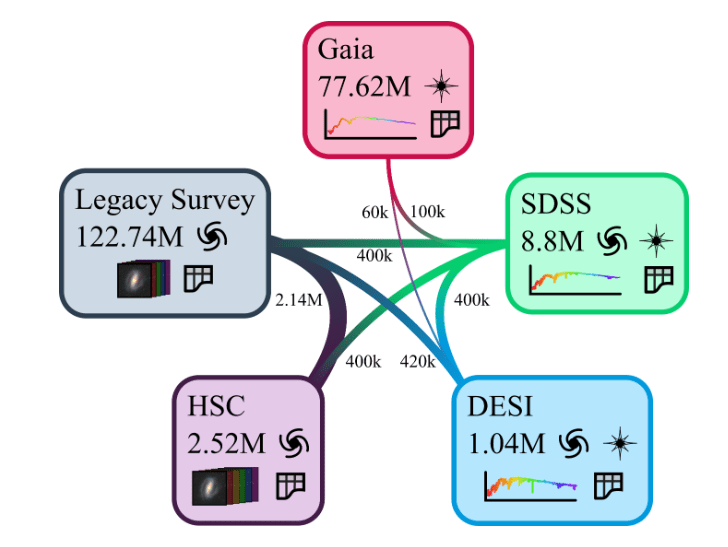

The Multimodal Universe

Enabling Large-Scale Machine Learning with 100TBs of Astronomical Scientific Data

Collaborative project with about 30 contributors

Presented at NeurIPS 2024 Datasets & Benchmark track

The MultiModal Universe Project

- Goal: Assemble the first large-scale multimodal dataset for machine learning in astrophysics.

- Strategy:

- Engage with a broad community of AI+Astro experts.

- Target large astronomical surveys, varied types of instruments, many different astrophysics sub-fields.

- Adopt standardized conventions for storing and accessing data and metadata through mainstream tools (e.g. Hugging Face Datasets).

Ground-based imaging from Legacy Survey

Space-based imaging from JWST

MultiModal Universe Infrastructure

The Well: a Large-Scale Collection of Diverse Physics Simulations for Machine Learning

- 55B tokens from 3M frames

=> First ImageNet-scale dataset for fluids

- 18 subsets spanning problems in astro, bio, aerospace, chemistry, atmospheric science, and more.

- Simple self-documented HDF5 files, with PyTorch readers provided.

Presented at NeurIPS 2024 Datasets & Benchmark Track

Architecture Challenge

The Universal Neural Architecture Challenge

Most General: Single model capable of processing all types of data

Most Specific: Independent models for all types of data

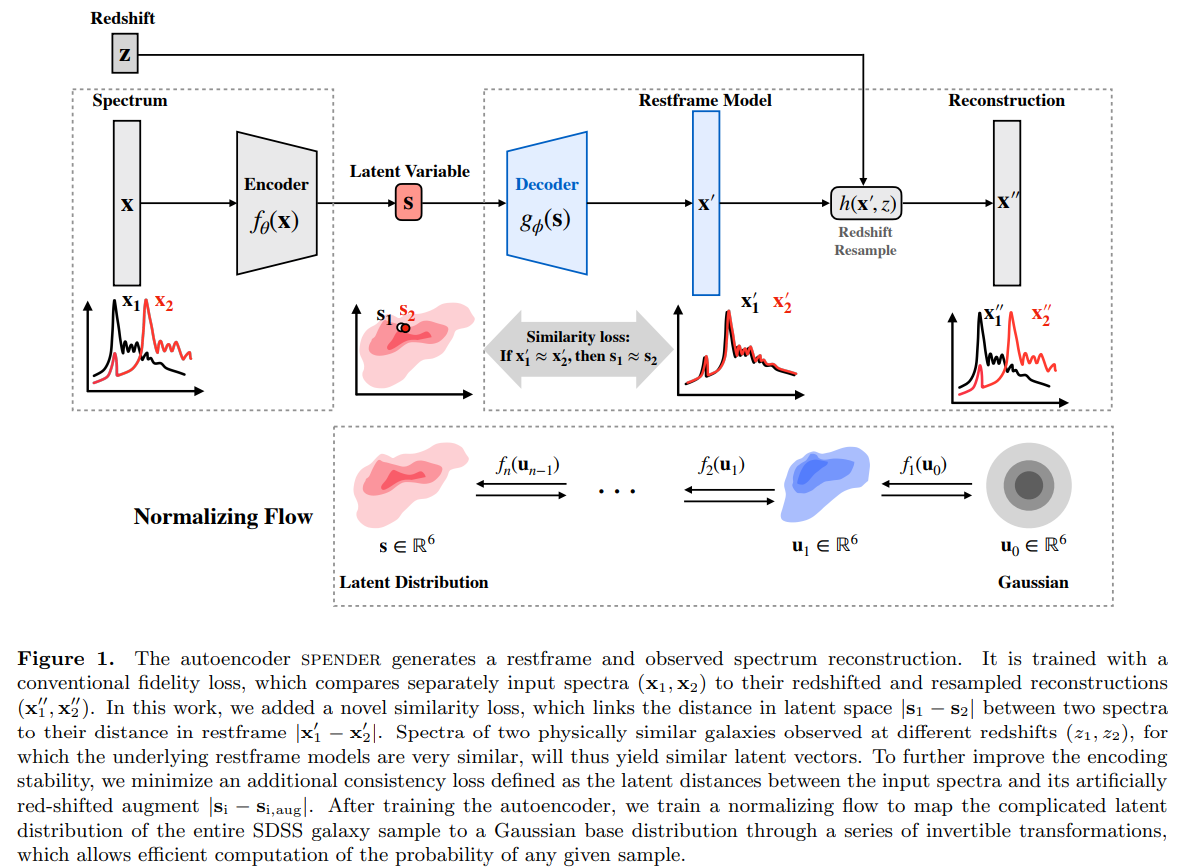

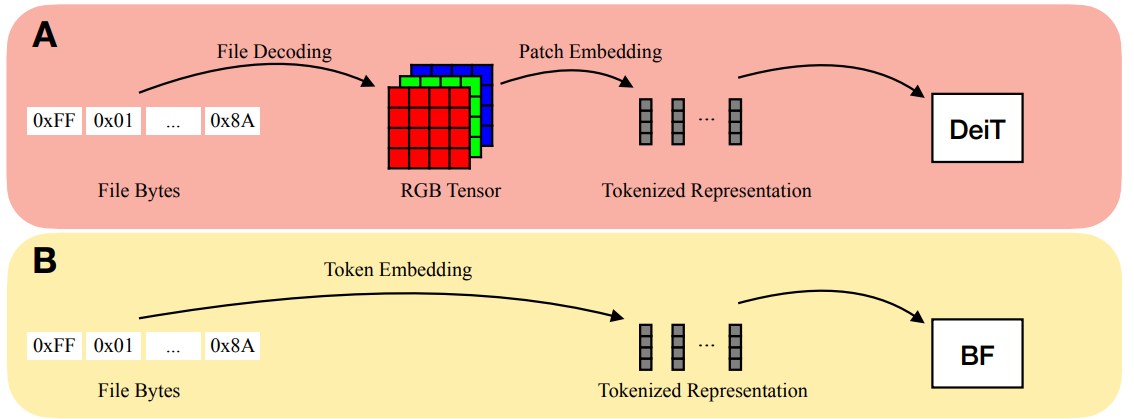

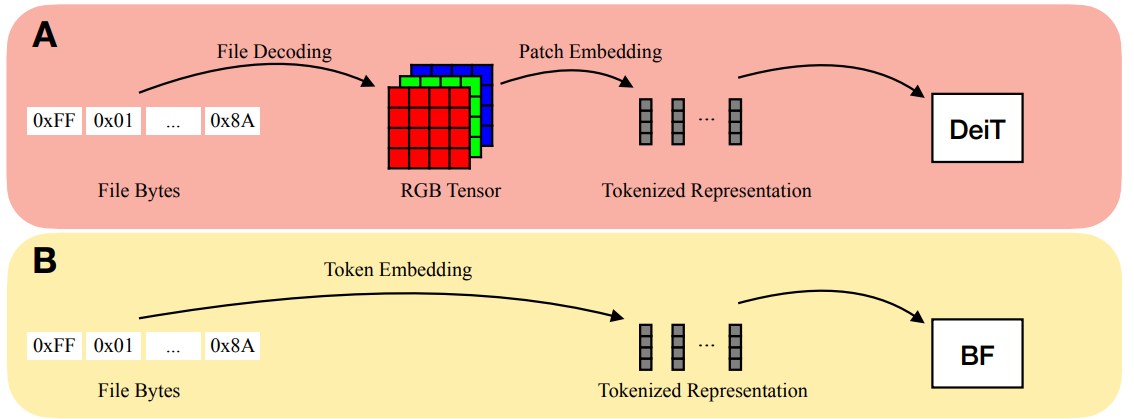

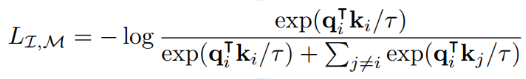

Bytes Are All You Need (Horton et al. 2023)

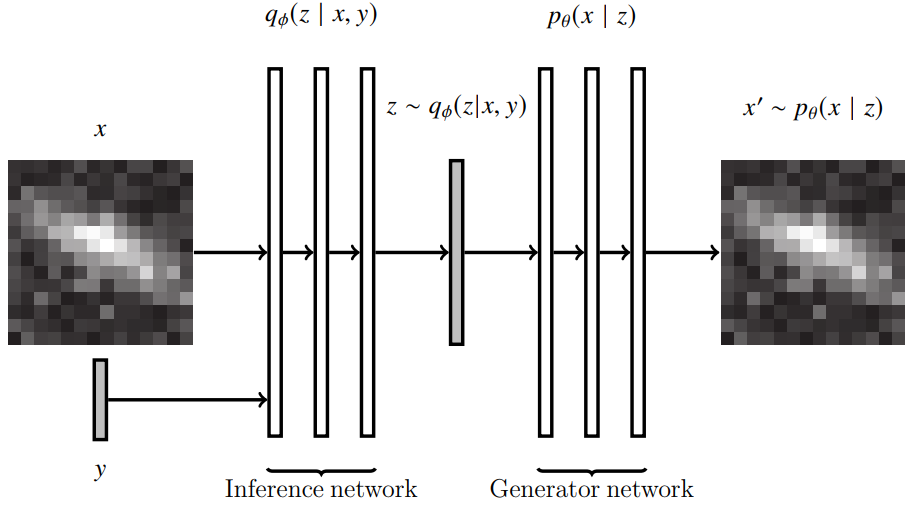

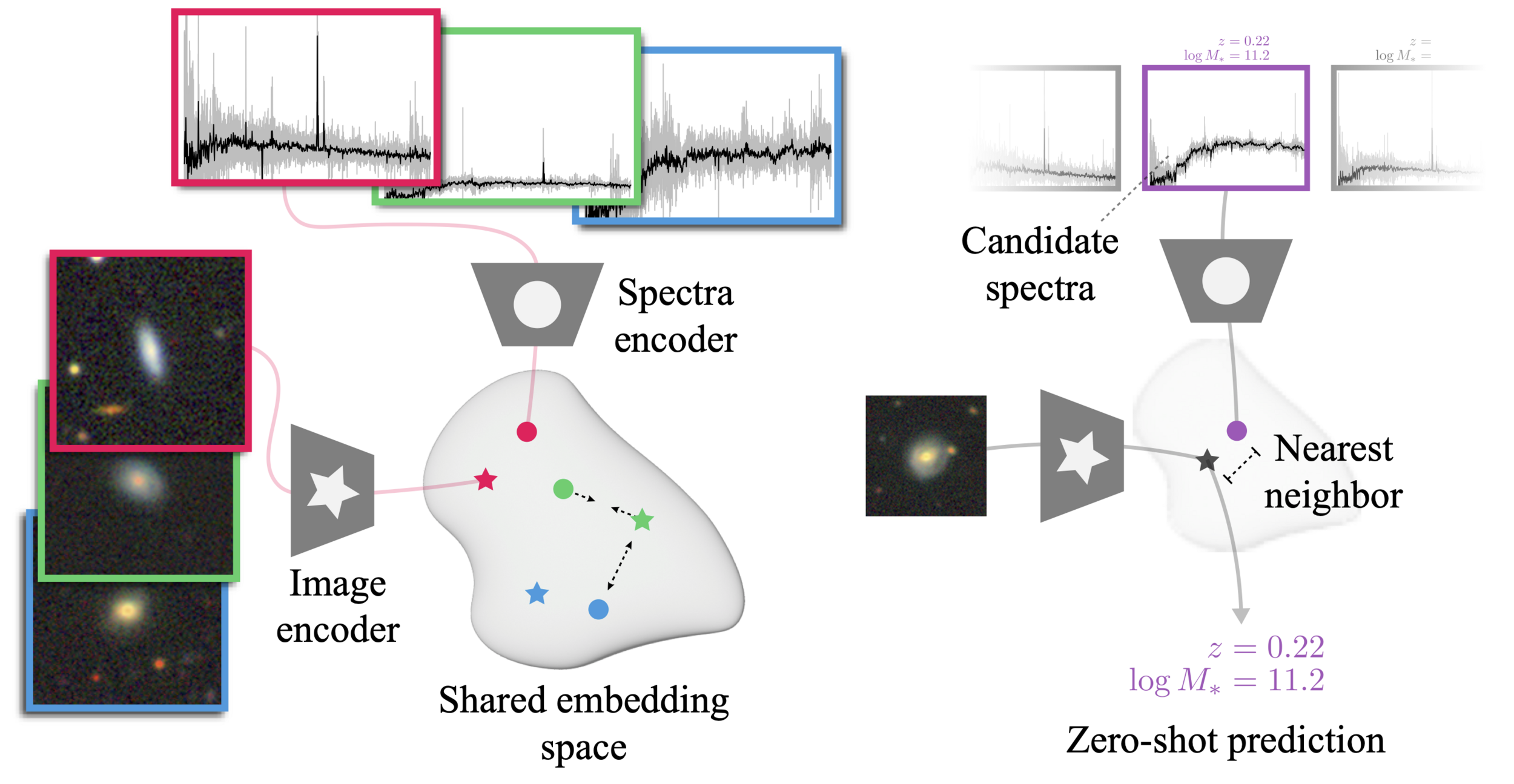

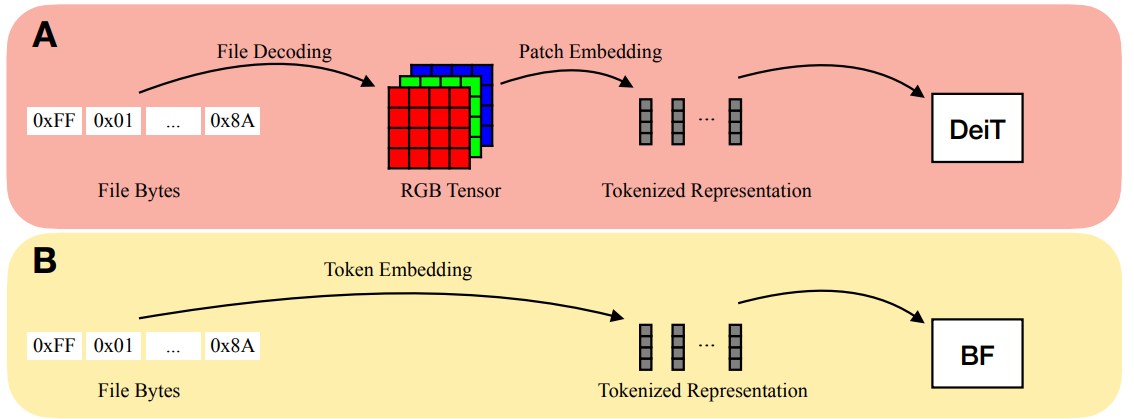

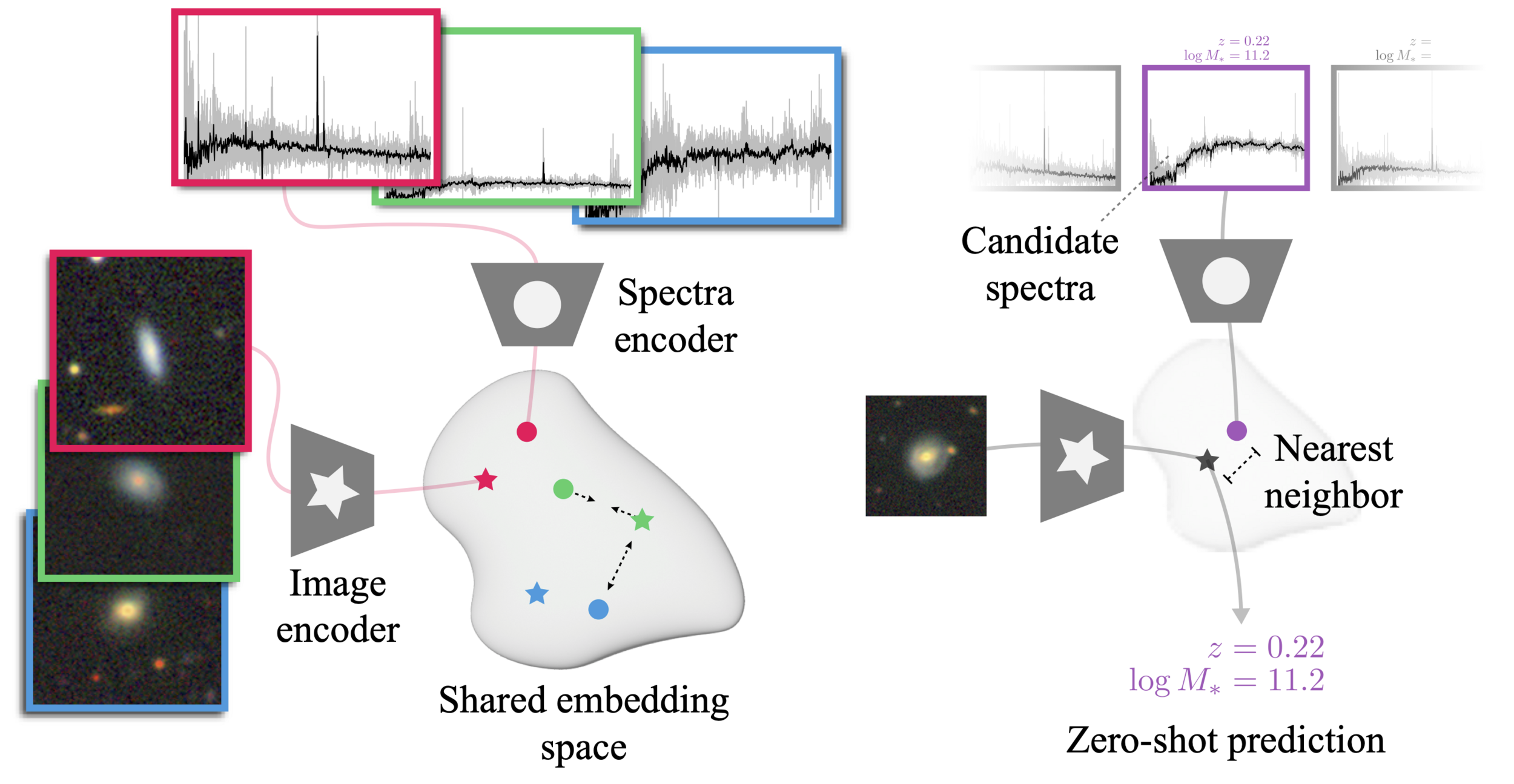

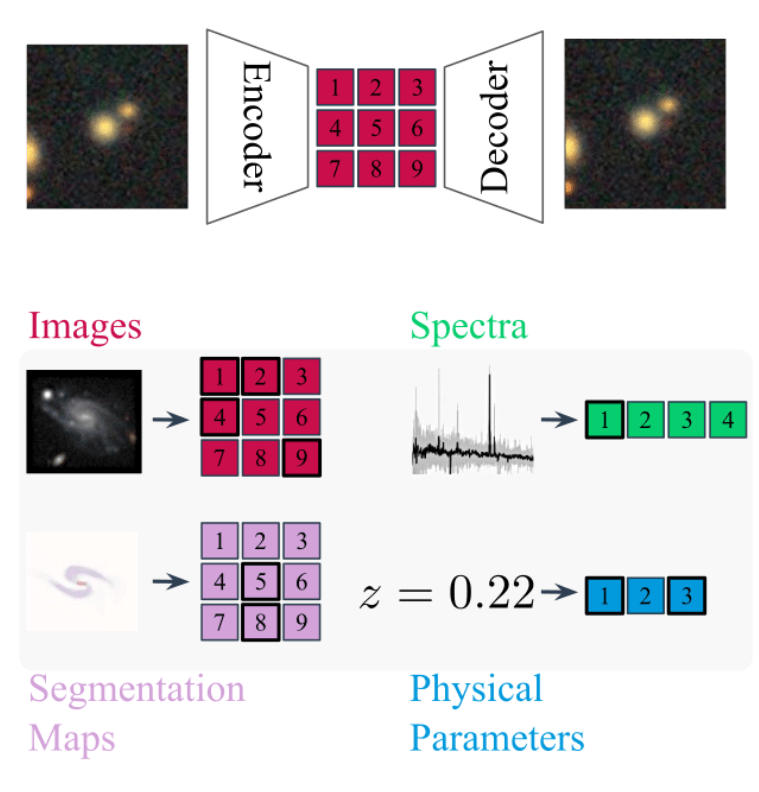

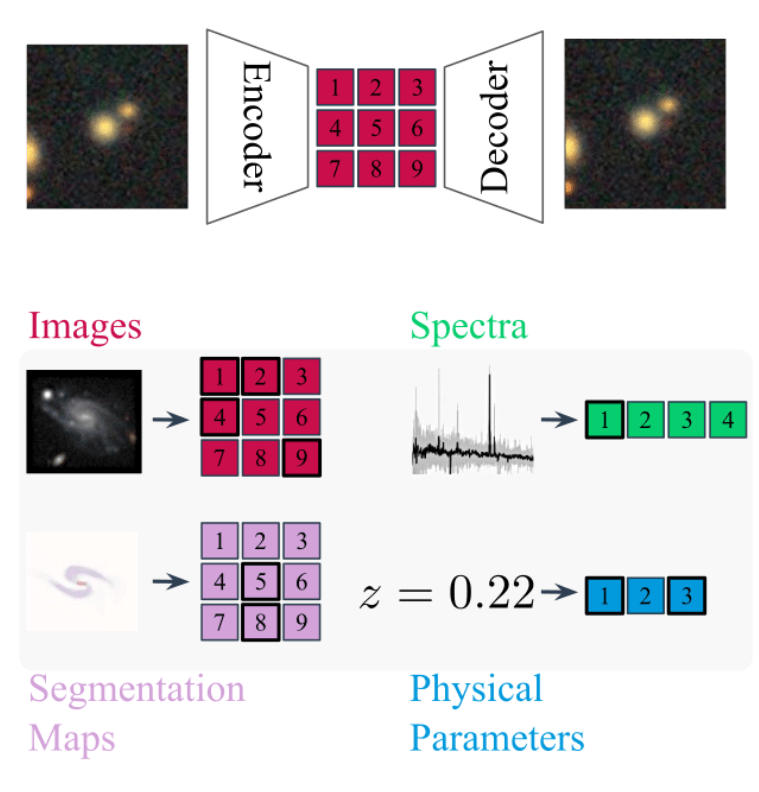

AstroCLIP (Parker et al. 2024)

Early-Fusion Multimodal Models

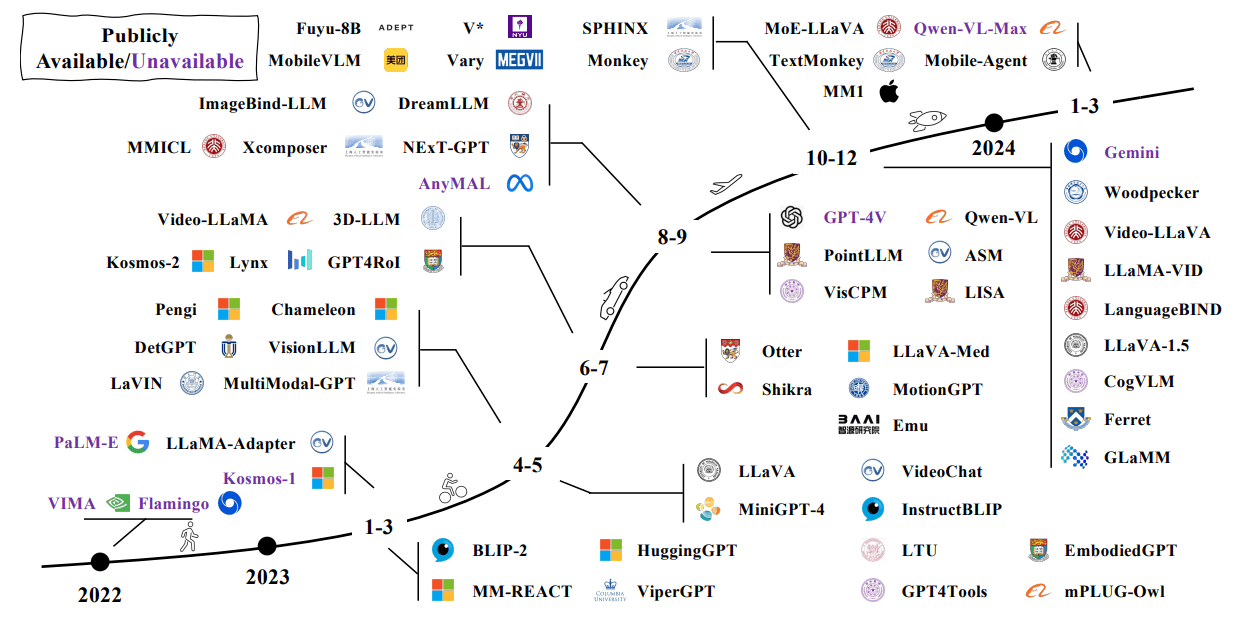

Flamingo: a Visual Language Model for Few-Shot Learning (Alayrac et al. 2022)

Chameleon: Mixed-Modal Early-Fusion Foundation Models (Chameleon team, 2024)

AION-1

AstronomIcal Omnimodal Network

Project led by: François Lanusse, Liam Parker, Jeff Shen, Tom Hehir, Ollie Liu, Lucas Meyer, Leopoldo Sarra, Sebastian Wagner-Carena, Helen Qu, and Micah Bowles, with extensive support from the rest of the team.

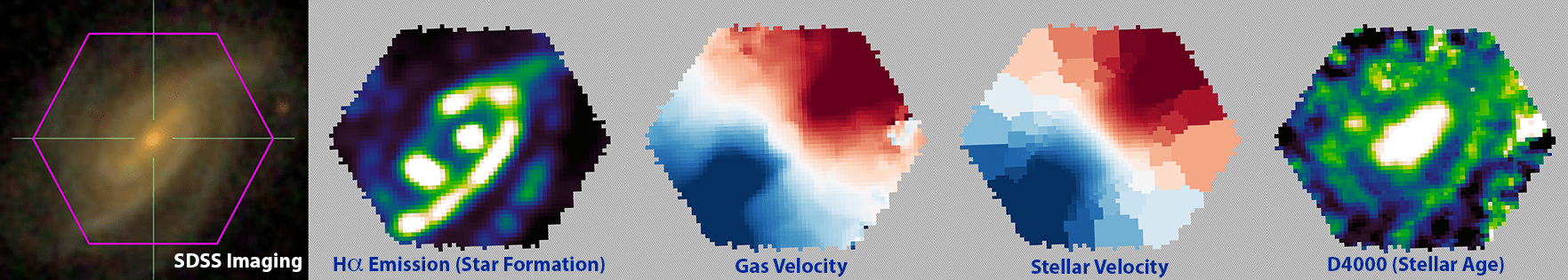

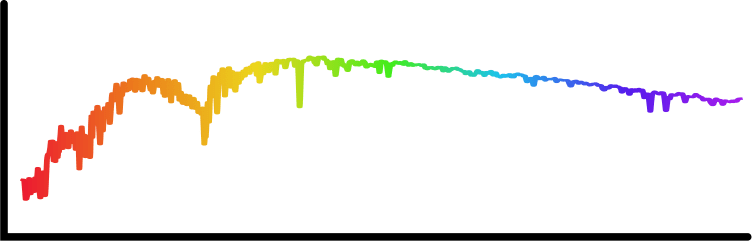

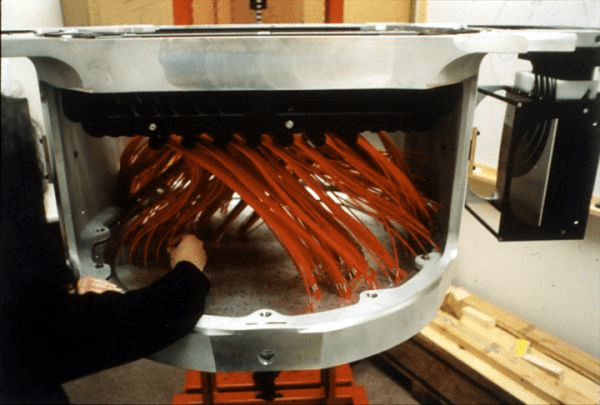

Diverse data modalities for diverse science cases

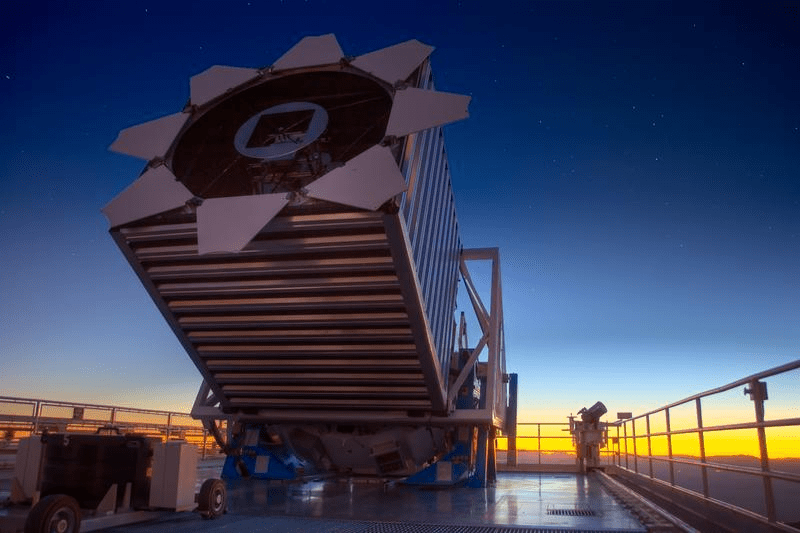

(Blanco Telescope and Dark Energy Camera. Credit: Reidar Hahn/Fermi National Accelerator Laboratory)

(Subaru Telescope and Hyper Suprime Cam. Credit: NAOJ)

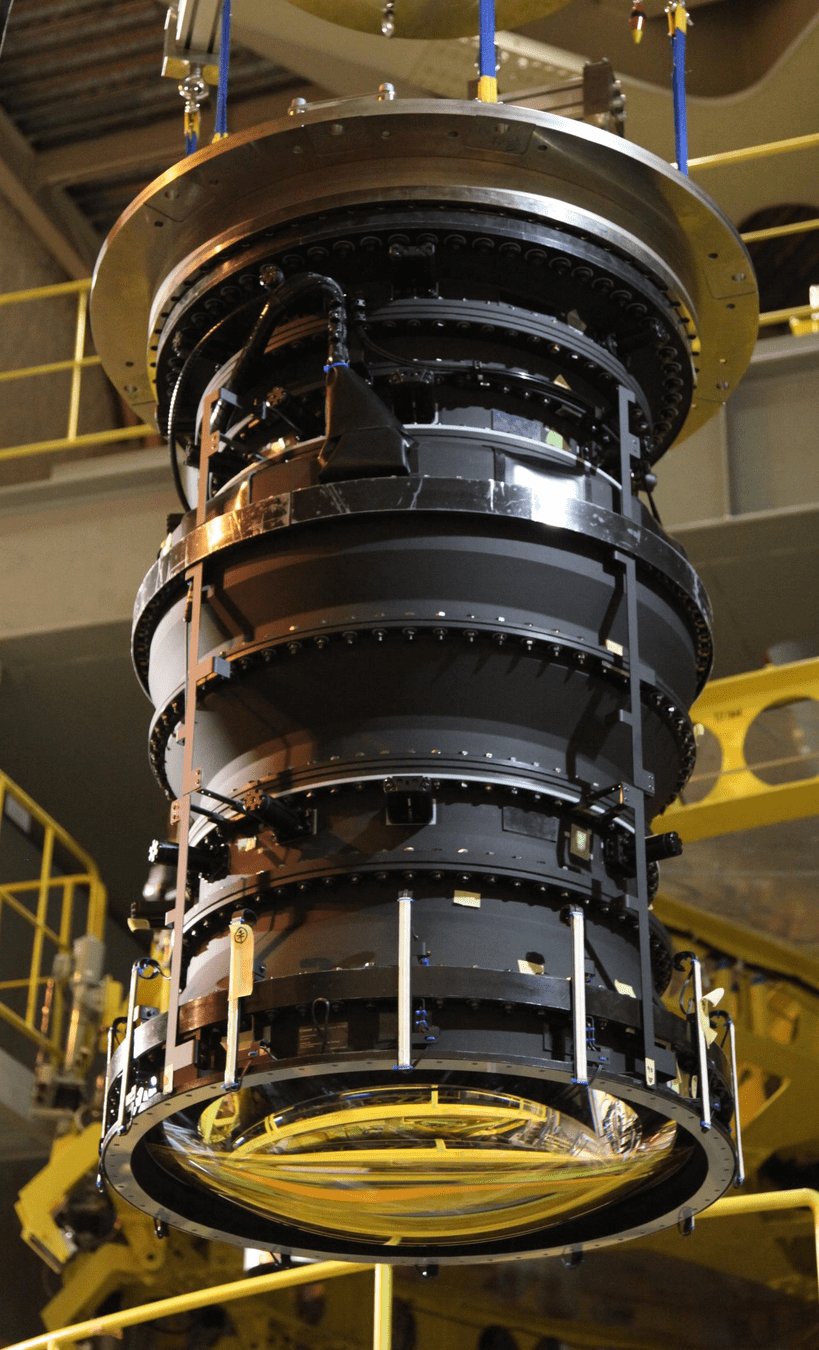

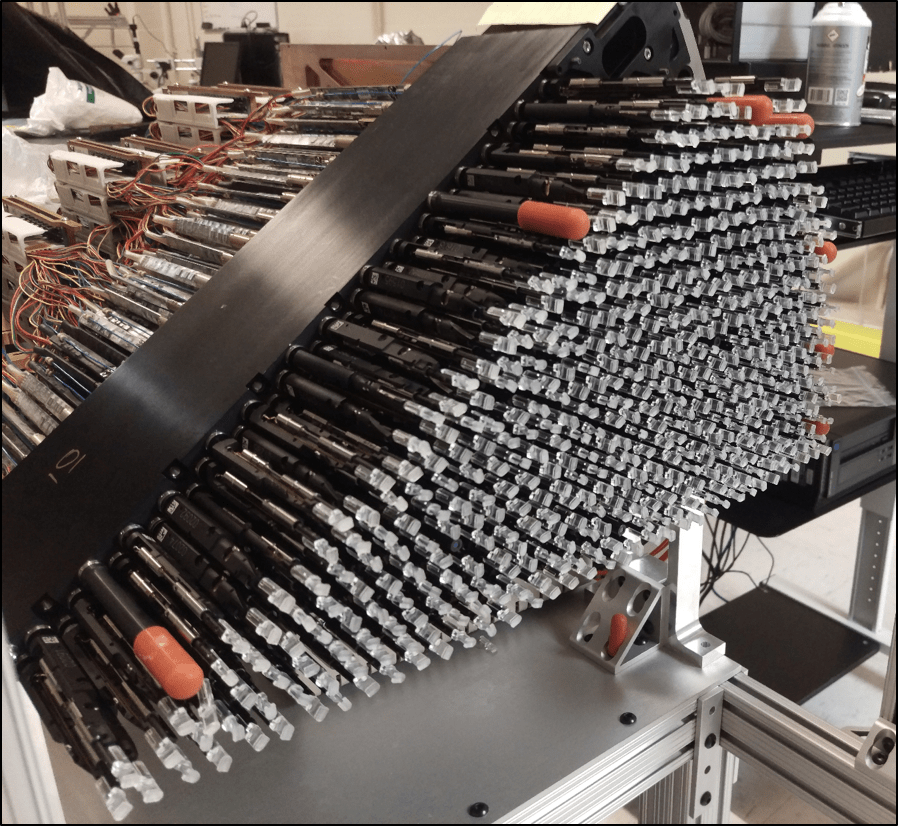

(Dark Energy Spectroscopic Instrument)

(Sloan Digital Sky Survey. Credit: SDSS)

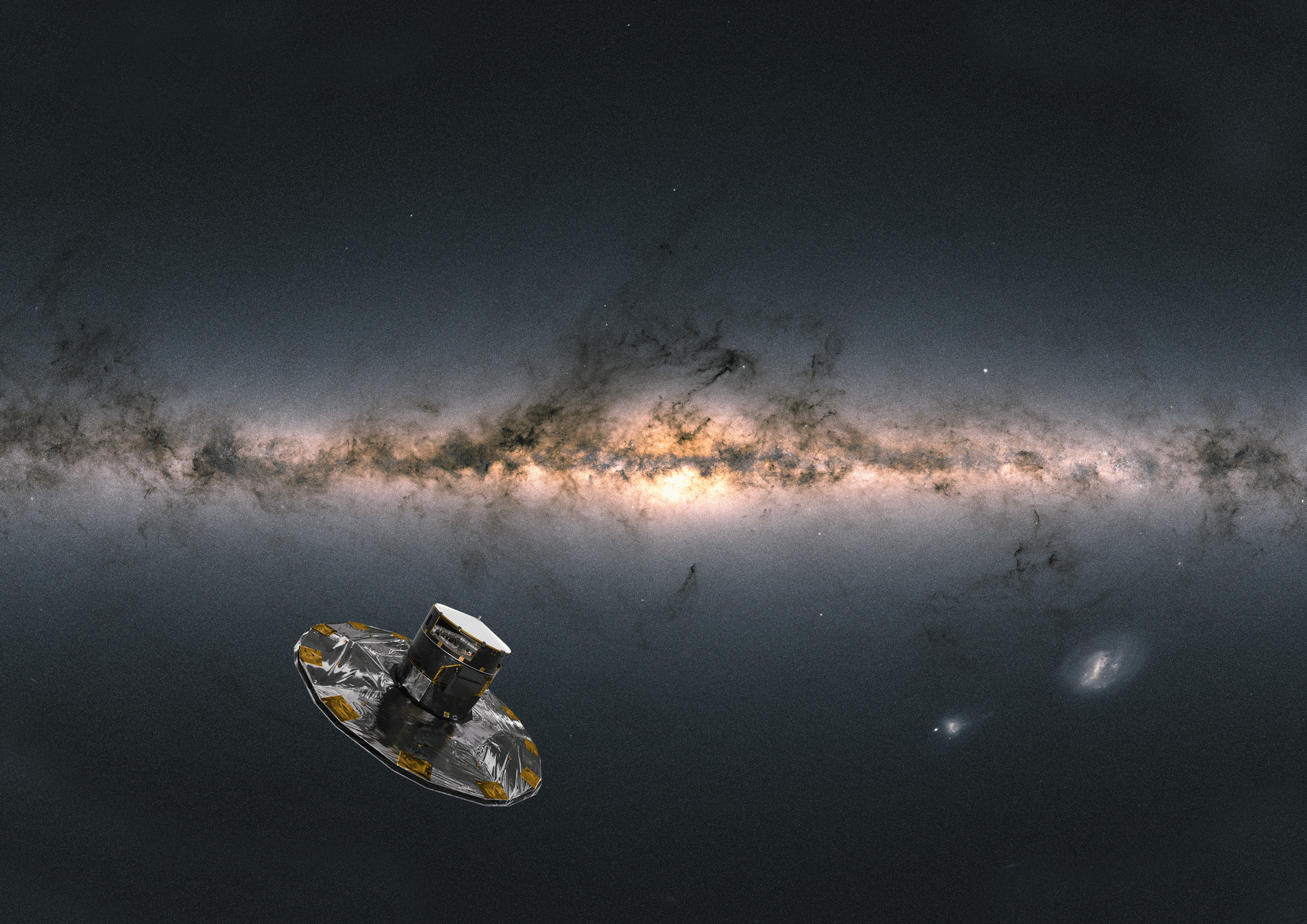

(Gaia Satellite. Credit: ESA/ATG)

- Galaxy formation

- Cosmology

- Stellar physics

- Galaxy archaeology

- ...

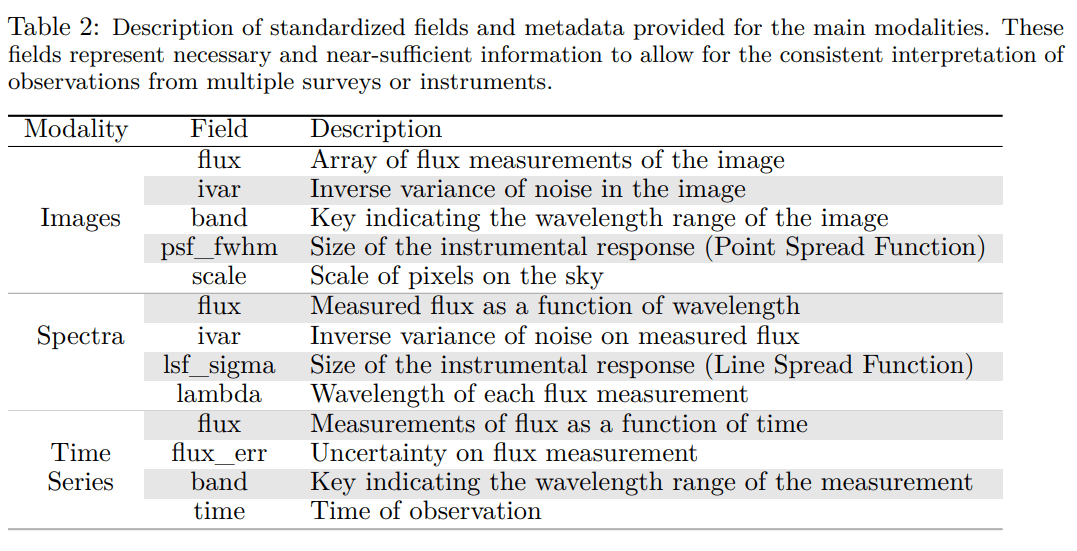

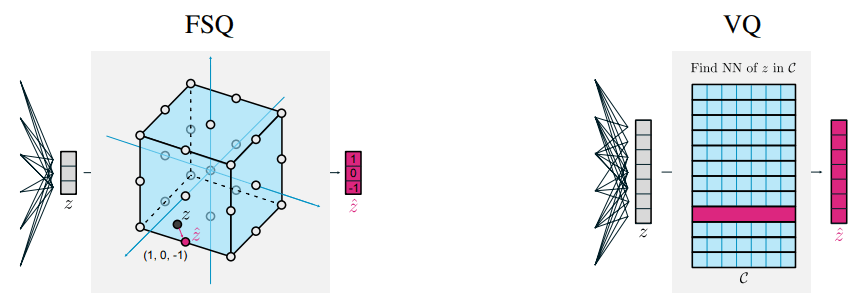

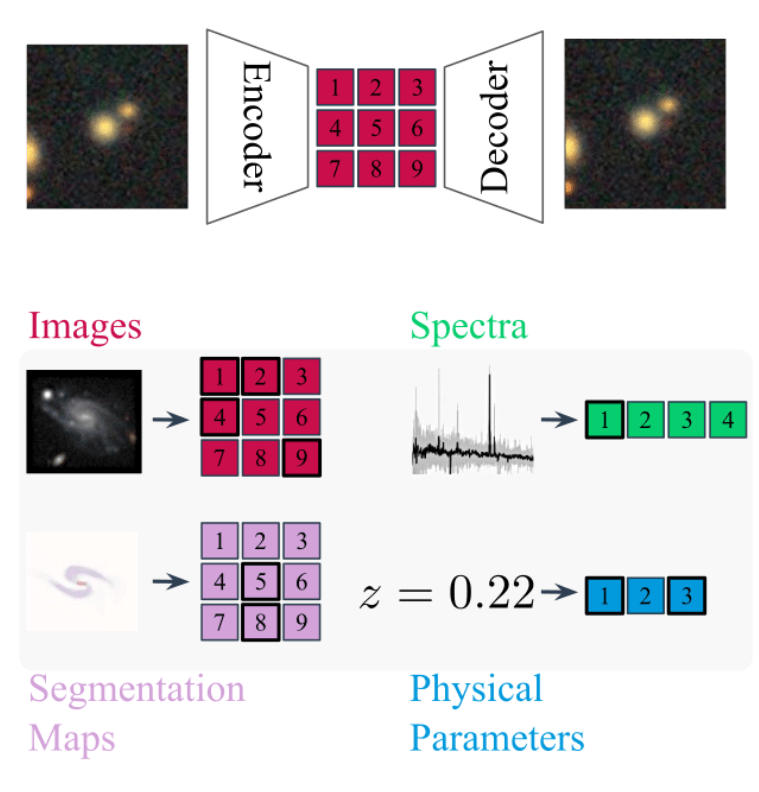

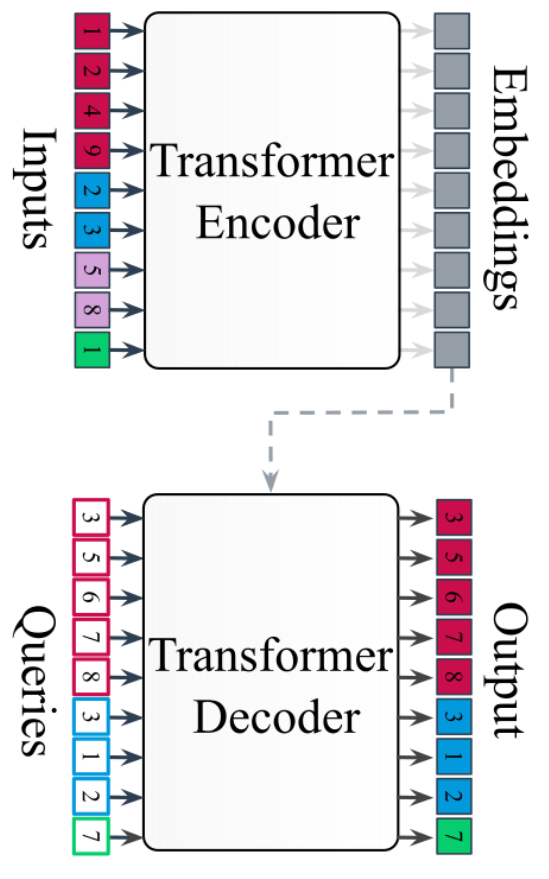

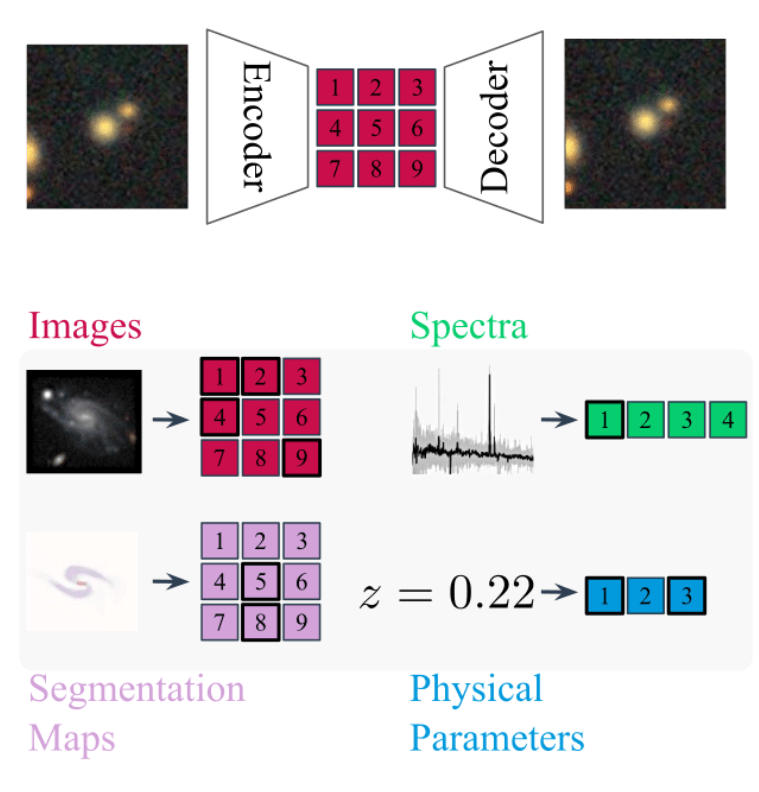

Standardizing all modalities through tokenization

- For each modality class (e.g. image, spectrum) we build dedicated metadata-aware tokenizers

- For AION-1, we integrate 39 different modalities (different instruments, different measurements, etc.)
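As a rough illustration of what a metadata-aware tokenizer does, the sketch below quantizes flux values into integer tokens and keeps the band each token came from. Everything in it is a toy assumption: the `ToyFluxTokenizer` class, the arcsinh compression with uniform binning, and the example fluxes are hypothetical and are not AION-1's actual tokenizers.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    band: str  # provenance metadata, e.g. "HSC i"
    code: int  # integer index of the quantization bin


class ToyFluxTokenizer:
    """Toy scalar tokenizer: arcsinh-compress a flux, then bin it uniformly."""

    def __init__(self, n_bins=256, lo=-2.0, hi=10.0):
        self.n_bins, self.lo, self.hi = n_bins, lo, hi

    def encode(self, band, flux):
        z = math.asinh(flux)                # compress the dynamic range
        z = min(max(z, self.lo), self.hi)   # clip to the supported range
        frac = (z - self.lo) / (self.hi - self.lo)
        code = min(int(frac * self.n_bins), self.n_bins - 1)
        return Token(band, code)

    def decode(self, token):
        # Map a bin index back to the flux at the bin centre.
        width = (self.hi - self.lo) / self.n_bins
        z = self.lo + (token.code + 0.5) * width
        return math.sinh(z)


tokenizer = ToyFluxTokenizer()
fluxes = {"DES g": 1.2, "DES r": 3.4, "HSC i": 57.0}  # made-up values
tokens = [tokenizer.encode(band, f) for band, f in fluxes.items()]
recovered = [tokenizer.decode(t) for t in tokens]
```

Once every measurement is a (metadata, integer code) pair, very different instruments can share one sequence model.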

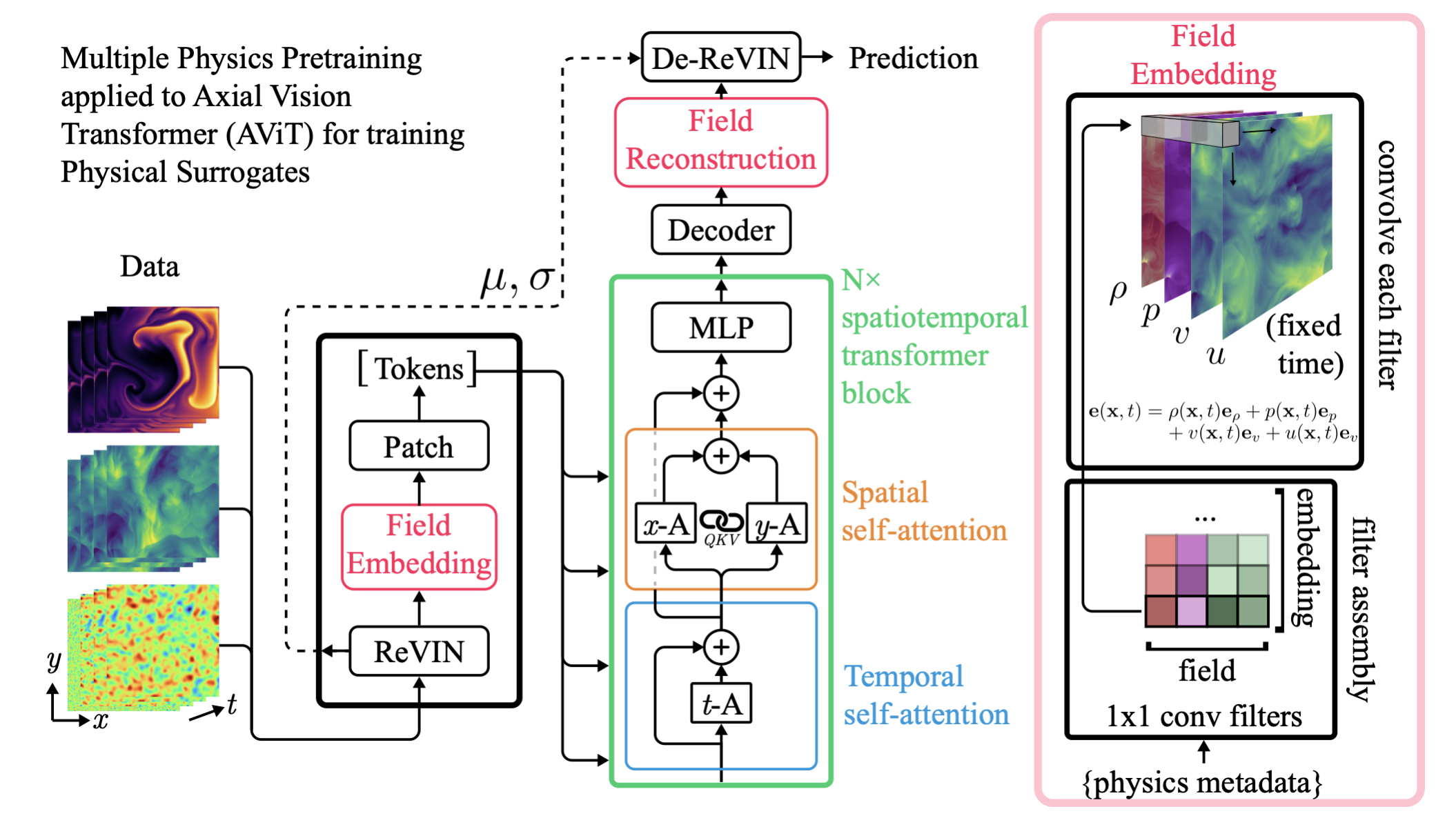

Field Embedding Strategy Developed for Multiple Physics Pretraining (McCabe et al. 2023)

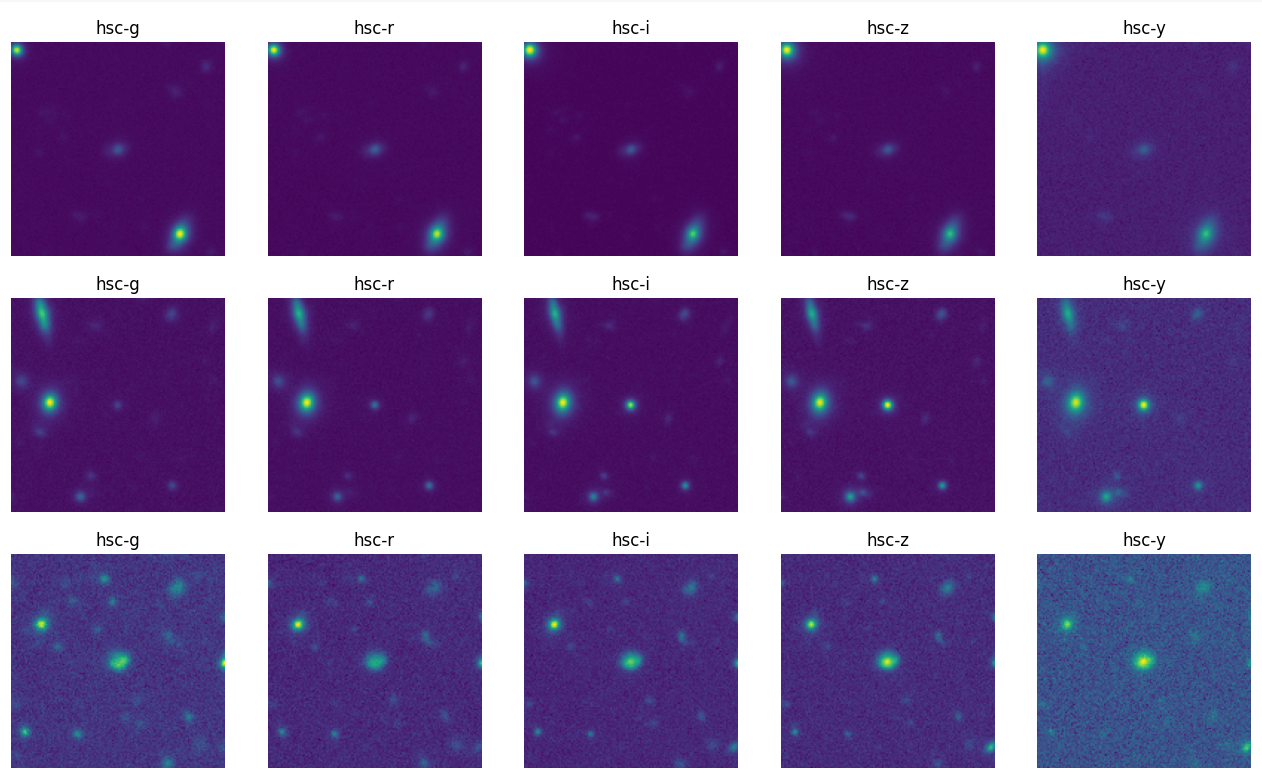

Bands shown: DES g, r, i, z; HSC g, r, i, z, y

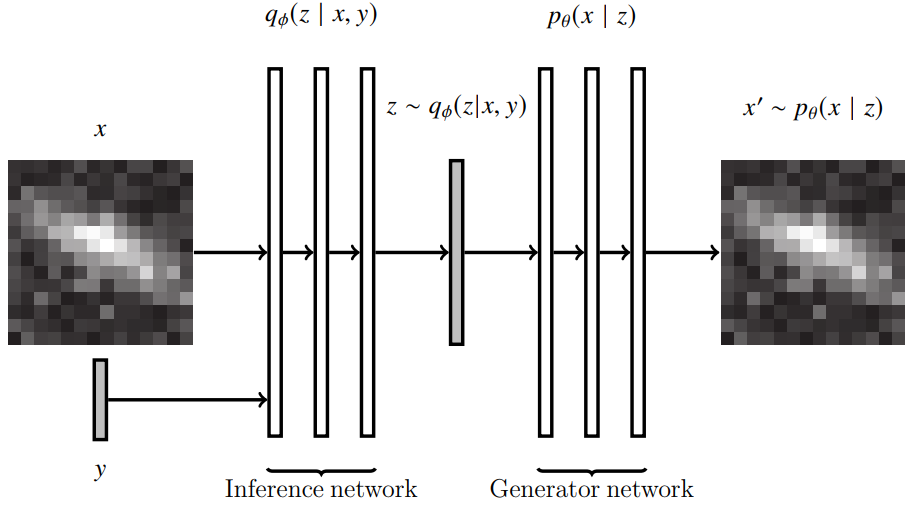

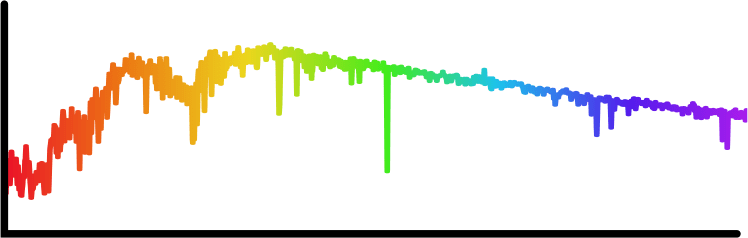

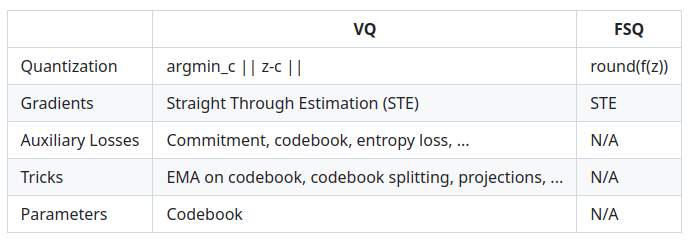

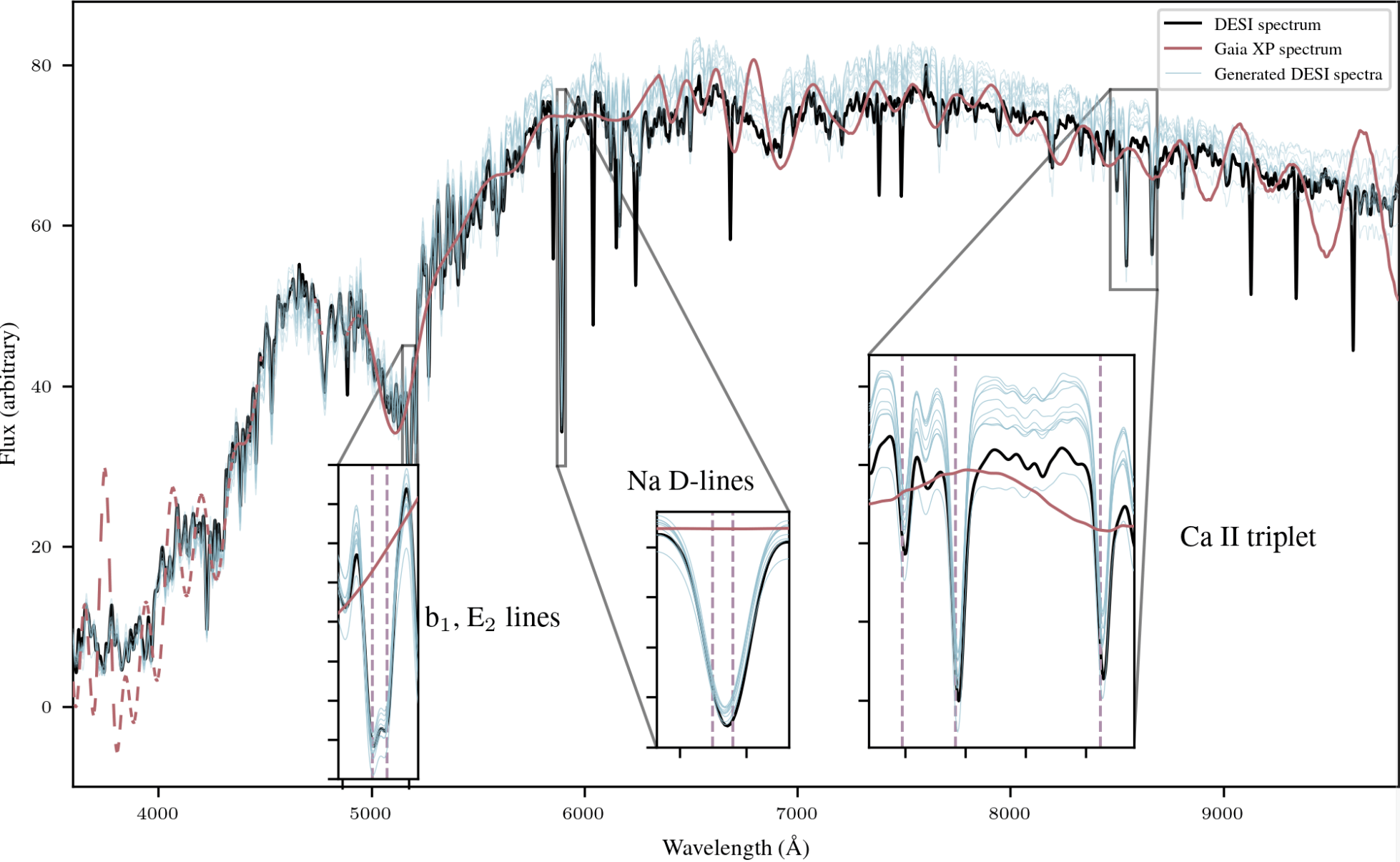

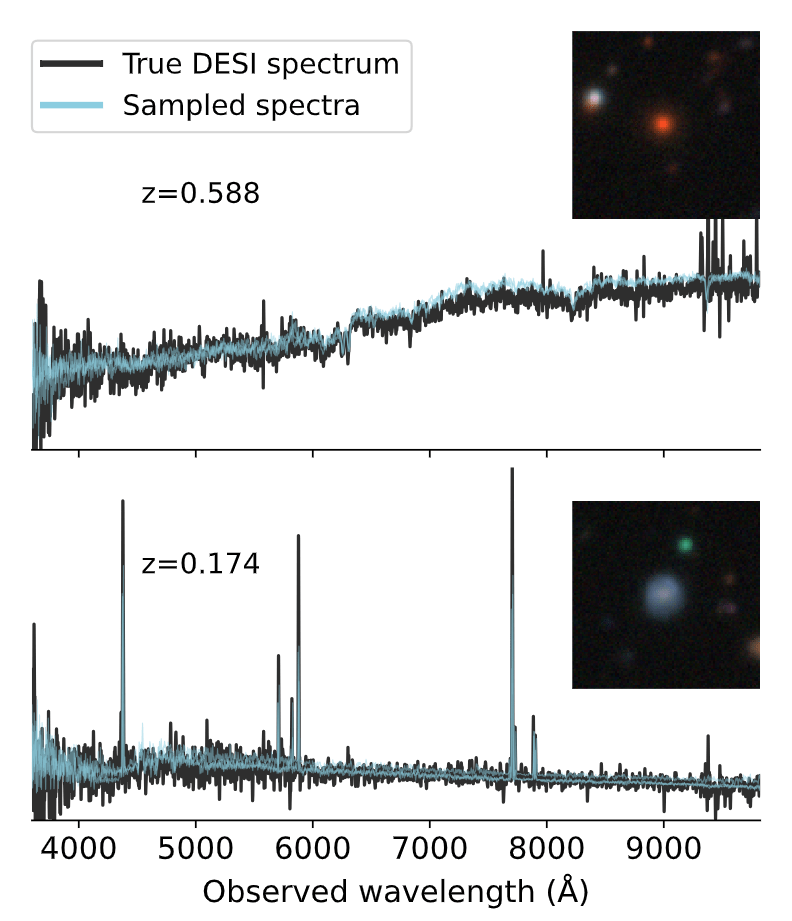

Any-to-Any Modeling with Generative Masked Modeling

- Training is done by pairing observations of the same objects from different instruments.

- Each input token is tagged with a modality embedding that specifies provenance metadata.

- Model is trained by cross-modal generative masked modeling (Mizrahi et al. 2023)

=> Learns the joint and all conditional distributions of provided modalities:

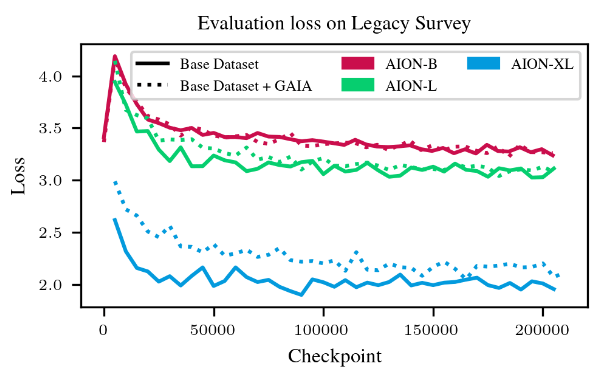
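The training setup above can be sketched as follows: tokens from paired observations of one object are pooled, a random subset is kept as input, and a disjoint random subset becomes the prediction target. This is a toy sketch in the spirit of cross-modal masked modeling, not the AION-1 training code; the function name `sample_masked_example`, the token budgets, and the example tokens are hypothetical.

```python
import random


def sample_masked_example(tokens_by_modality, n_input, n_target, rng):
    """Split one object's tokens into a visible input set and a masked target set.

    tokens_by_modality maps a modality name to its list of integer tokens.
    Each returned item is (modality, position, token), so every token keeps
    a tag saying which instrument or measurement it came from.
    """
    pool = [
        (modality, pos, tok)
        for modality, toks in tokens_by_modality.items()
        for pos, tok in enumerate(toks)
    ]
    rng.shuffle(pool)
    inputs = pool[:n_input]                      # what the model sees
    targets = pool[n_input:n_input + n_target]   # what the model must predict
    return inputs, targets


# Paired observations of the same object from two hypothetical sources.
obj = {
    "image_tokens": [12, 7, 99, 3, 41, 8],
    "spectrum_tokens": [501, 502, 640, 77],
}
rng = random.Random(0)
inputs, targets = sample_masked_example(obj, n_input=4, n_target=3, rng=rng)
```

Because the split is random across modalities, some draws condition on image tokens to predict spectrum tokens, others do the reverse, and others mix both, which is how a single model can cover many conditional distributions.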

AION-1 family of models

- Models trained as part of the 2024 Jean Zay Grand Challenge, following an extension to a new partition of 1400 H100s

- AION-1 Base: 300M parameters (64 H100s, 1.5 days)

- AION-1 Large: 800M parameters (100 H100s, 2.5 days)

- AION-1 XLarge: 3B parameters (288 H100s, 3.5 days)
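For a sense of scale, the figures above convert to GPU-hours as GPUs × days × 24. This is simple arithmetic on the quoted numbers, assuming each run held all of its GPUs for the full quoted duration; the dictionary layout is only for convenience.

```python
# (number of H100 GPUs, training days) as quoted above
runs = {
    "AION-1 Base": (64, 1.5),
    "AION-1 Large": (100, 2.5),
    "AION-1 XLarge": (288, 3.5),
}

gpu_hours = {name: gpus * days * 24 for name, (gpus, days) in runs.items()}

for name, hours in gpu_hours.items():
    print(f"{name}: {hours:,.0f} H100-hours")
# AION-1 Base: 2,304 H100-hours
# AION-1 Large: 6,000 H100-hours
# AION-1 XLarge: 24,192 H100-hours
```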

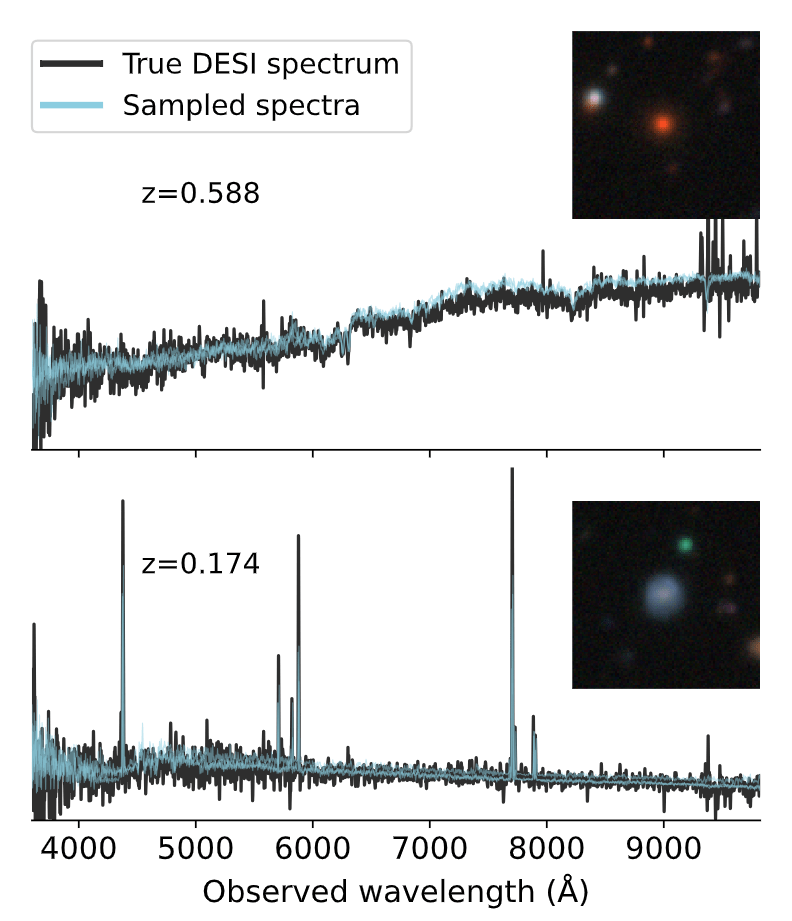

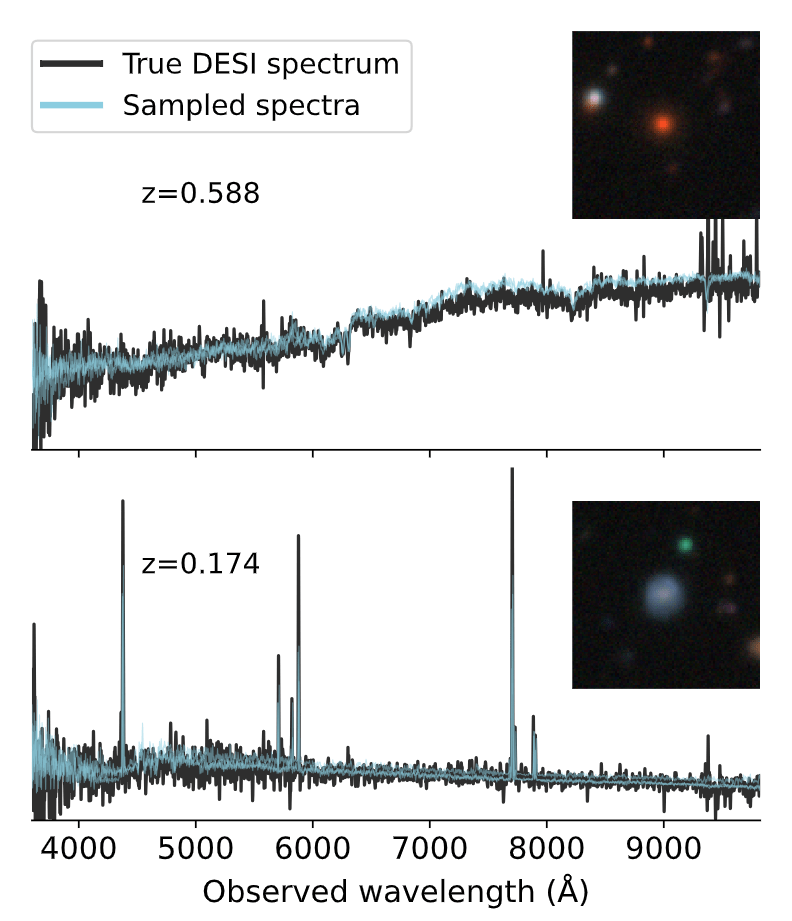

Example of out-of-the-box capabilities

Survey translation

Spectrum super-resolution

Example of emergent multimodal understanding

- Direct association between DESI and HSC was excluded during pretraining

=> This task is out of distribution!

Accelerating Downstream Science

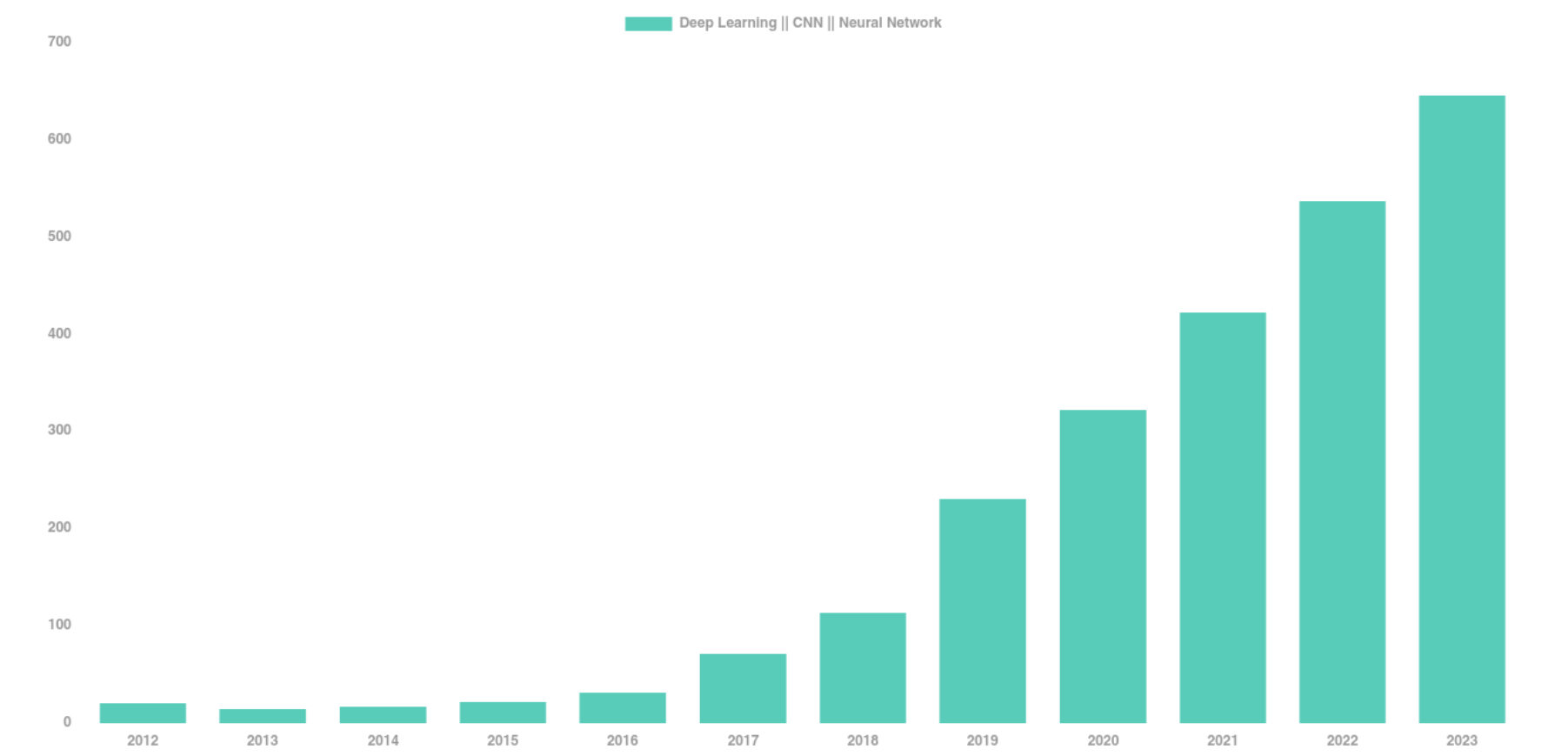

The Deep Learning Boom in Astrophysics

astro-ph abstracts mentioning Deep Learning, CNN, or Neural Networks

The vast majority of these results have relied on supervised learning and networks trained from scratch.

Francois' first Deep Learning Paper in Astro (with Barnabas Poczos)

Rethinking the way we use Deep Learning

Conventional scientific workflow with deep learning

- Build a large training set of realistic data

- Design a neural network architecture for your data

- Deal with data preprocessing/normalization issues

- Train your network on some GPUs for a day or so

- Apply your network to your problem

- Throw the network away...

=> Because it's completely specific to your data, and to the one task it's trained for.

Conventional researchers @ CMU, circa 2016

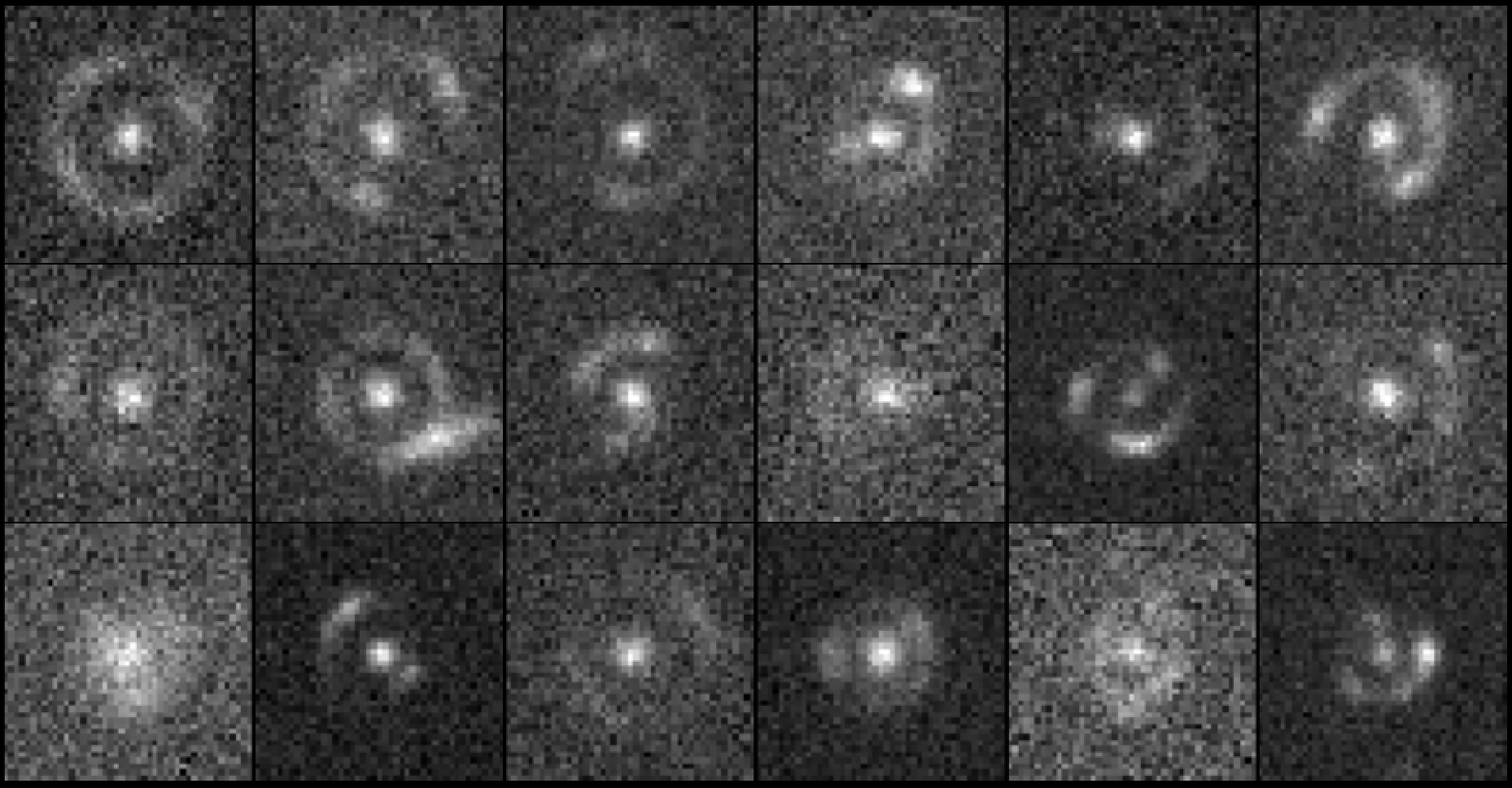

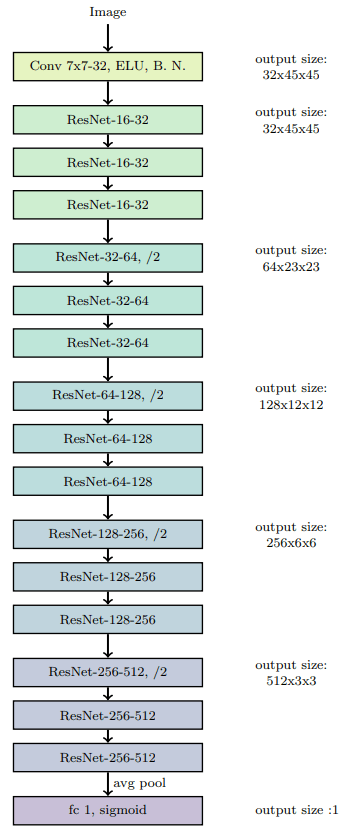

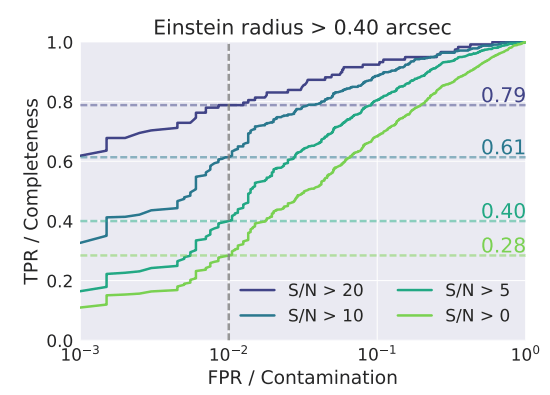

CMU DeepLens (Lanusse et al. 2017)

Rethinking the way we use Deep Learning

Foundation Model-based Scientific Workflow

- Build a small training set of realistic data

- Design a neural network architecture for your data

- Deal with data preprocessing/normalization issues

- Adapt a model in a matter of minutes

- Apply your model to your problem

- Throw the network away...

=> Because it's completely specific to your data, and to the one task it's trained for.

Already taken care of

=> Let's discuss embedding-based adaptation

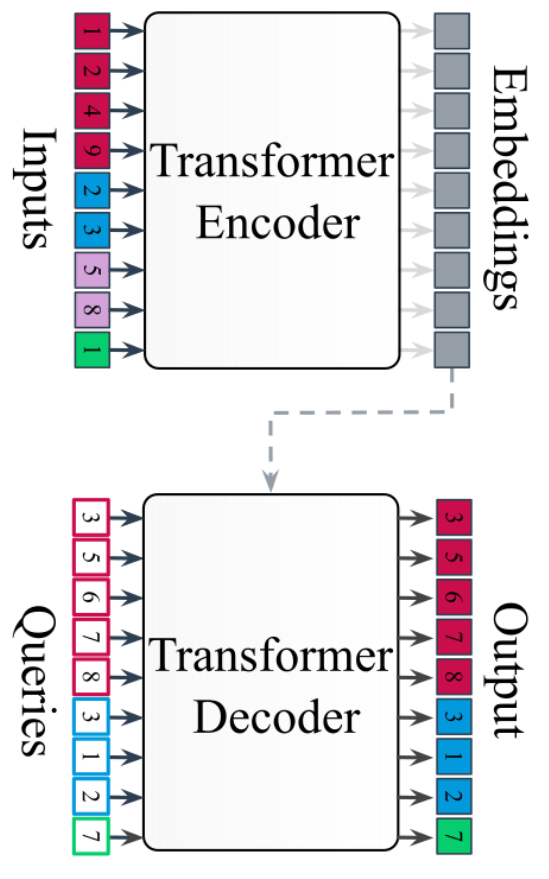

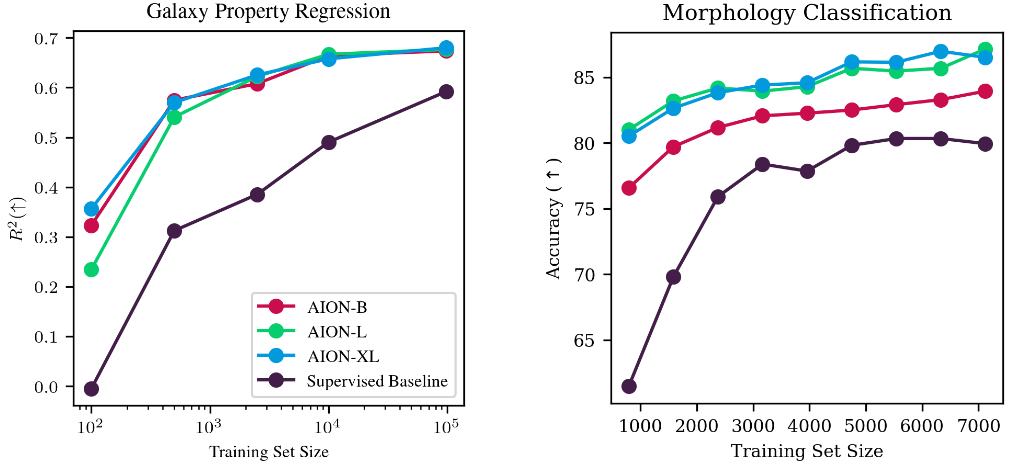

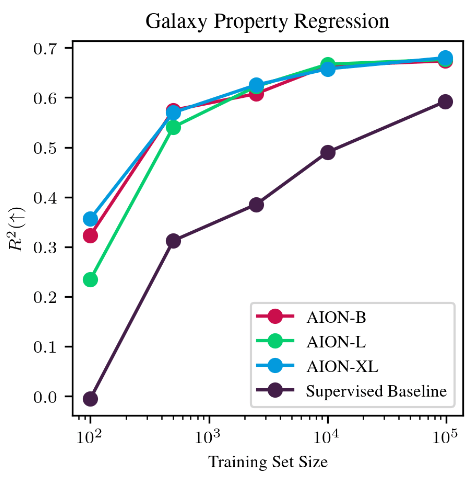

Adaptation of AION-1 embeddings

Adaptation at low cost with simple strategies:

- Mean pooling + linear probing

- Attentive pooling

- Can be used trivially on any input data AION-1 was trained for

- Flexible to varying number/types of inputs

=> Allows for trivial data fusion
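The two pooling strategies and the linear probe can be sketched in a few lines. This is a minimal pure-Python illustration under stated assumptions: the token embeddings are synthetic stand-ins (not produced by AION-1), the function names are hypothetical, and logistic regression is just one possible choice of linear probe.

```python
import math
import random


def mean_pool(token_embeddings):
    """Average a variable-length list of token embeddings into one vector."""
    dim, n = len(token_embeddings[0]), len(token_embeddings)
    return [sum(tok[d] for tok in token_embeddings) / n for d in range(dim)]


def attentive_pool(token_embeddings, query):
    """Softmax-weighted average, with weights given by a (learnable) query vector."""
    scores = [sum(q * x for q, x in zip(query, tok)) for tok in token_embeddings]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [
        sum(w * tok[d] for w, tok in zip(weights, token_embeddings)) / total
        for d in range(len(query))
    ]


def fit_linear_probe(features, labels, lr=0.5, epochs=200):
    """Logistic regression trained by gradient descent on frozen features."""
    w, b = [0.0] * len(features[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            w = [wi - lr * (p - y) * xi for wi, xi in zip(w, x)]
            b -= lr * (p - y)
    return w, b


def predict(w, b, x):
    return int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0)


# Synthetic stand-in for frozen embeddings: class 1 is shifted along axis 0.
rng = random.Random(0)


def fake_object(label):
    n_tokens = rng.randint(3, 8)  # objects may come with different token counts
    return [[rng.gauss(2.0 * label - 1.0, 0.3), rng.gauss(0.0, 0.3)]
            for _ in range(n_tokens)]


labels = [i % 2 for i in range(40)]
features = [mean_pool(fake_object(y)) for y in labels]
w, b = fit_linear_probe(features, labels)
accuracy = sum(predict(w, b, x) == y for x, y in zip(features, labels)) / len(labels)
```

Because pooling accepts any number of tokens, tokens from several modalities (for example fluxes plus an image) can be concatenated before pooling, which is the sense in which data fusion comes almost for free.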

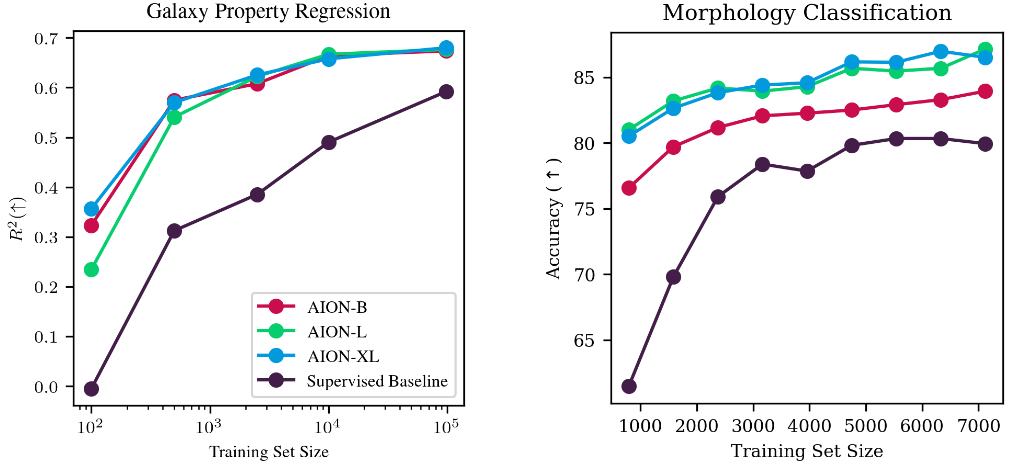

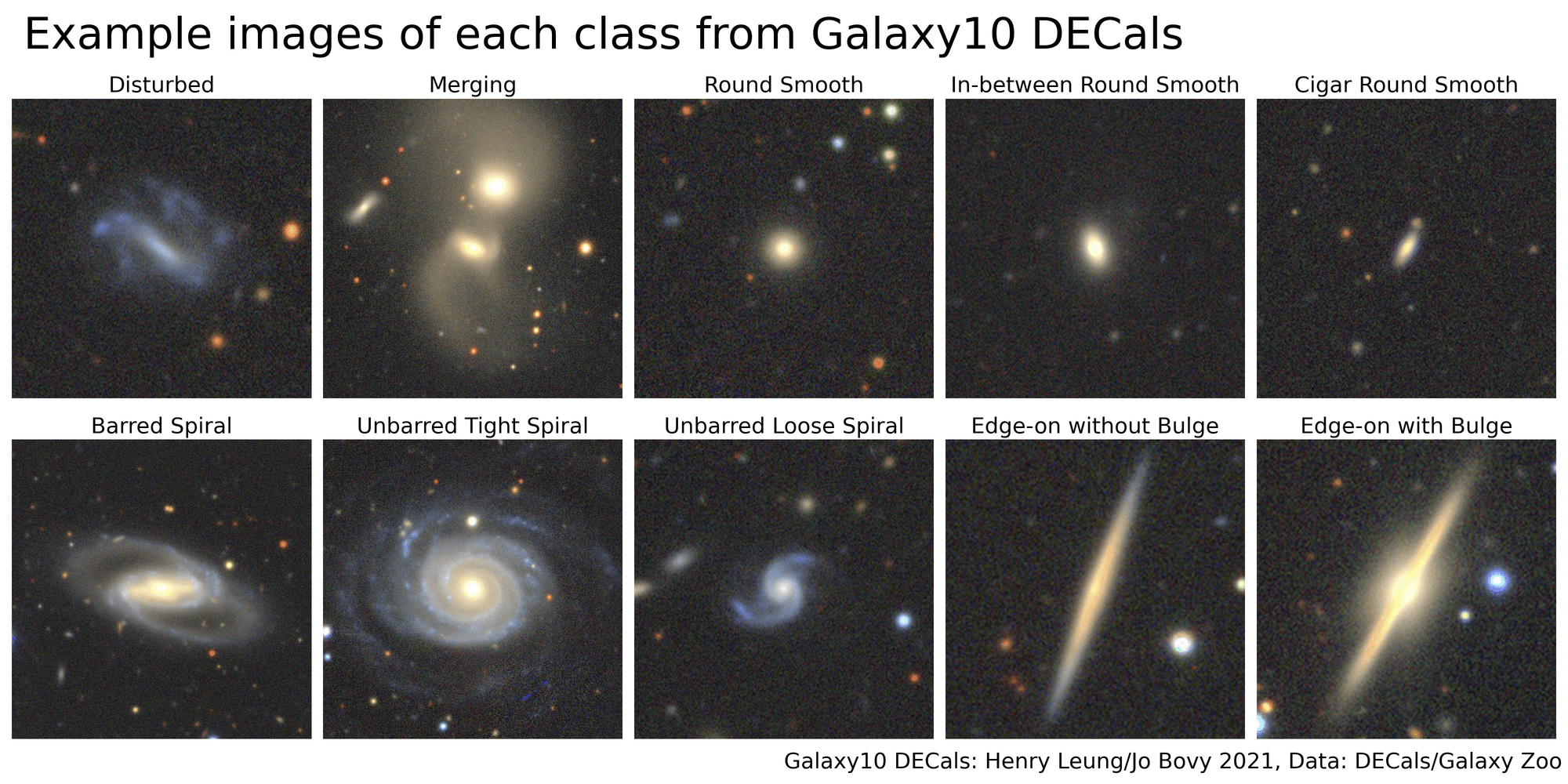

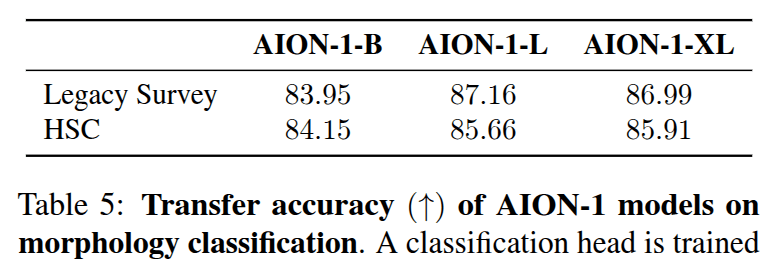

Morphology classification by Linear Probing

Trained on ->

Eval on ->

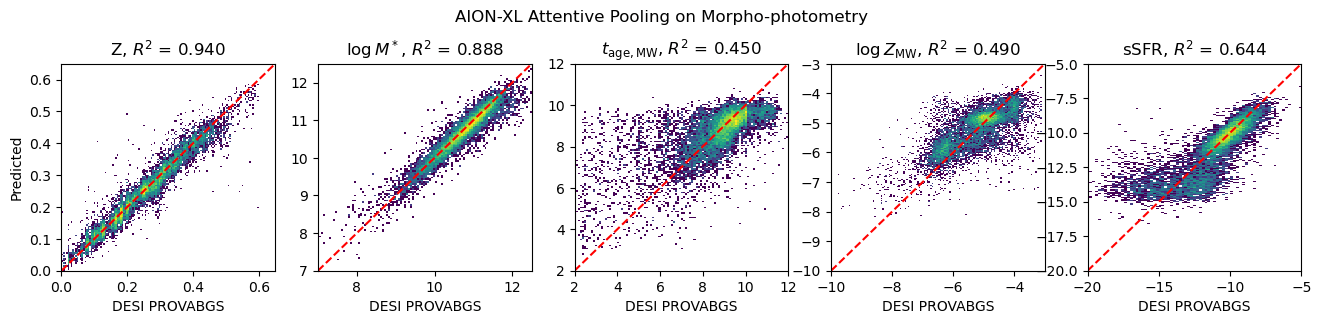

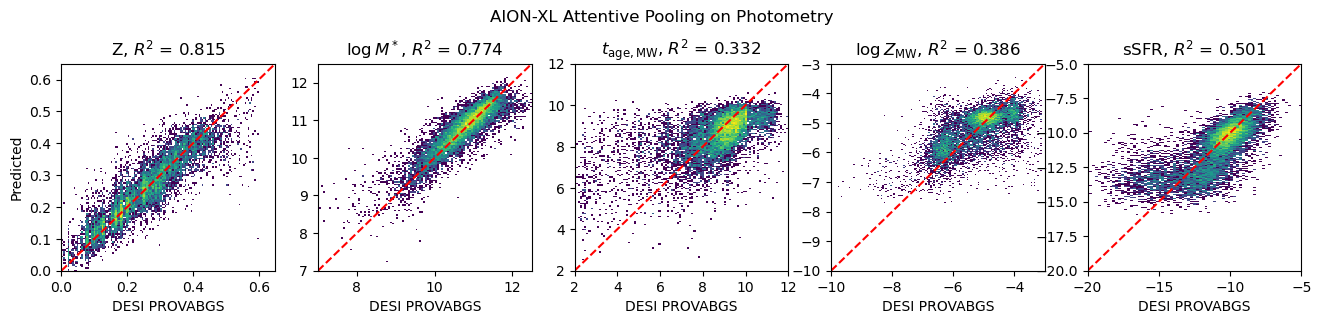

Physical parameter estimation and data fusion

Inputs: measured fluxes

Inputs: measured fluxes + image

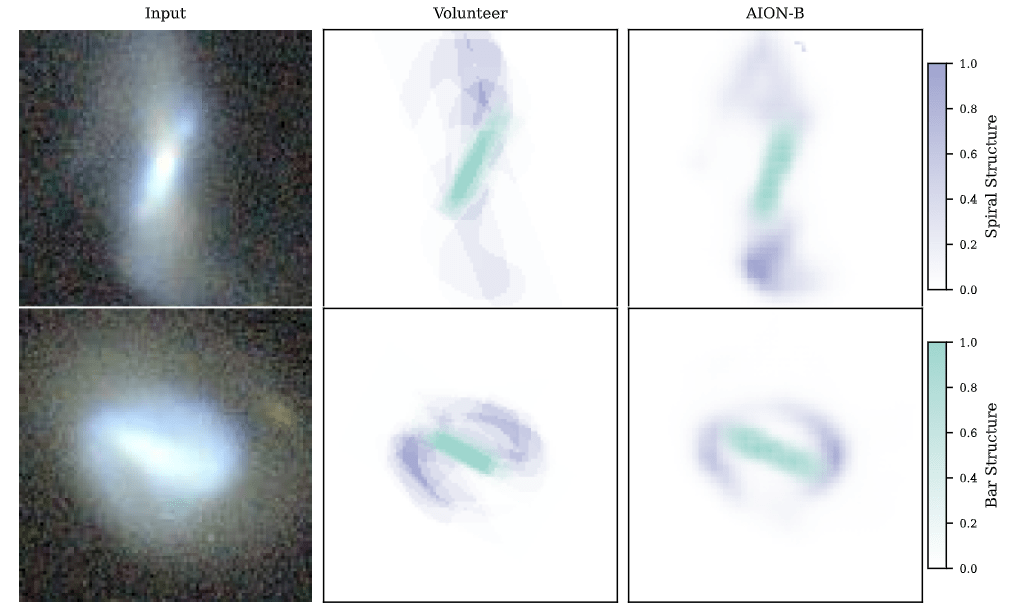

Semantic segmentation

Segmenting central bar and spiral arms in galaxy images based on Galaxy Zoo 3D

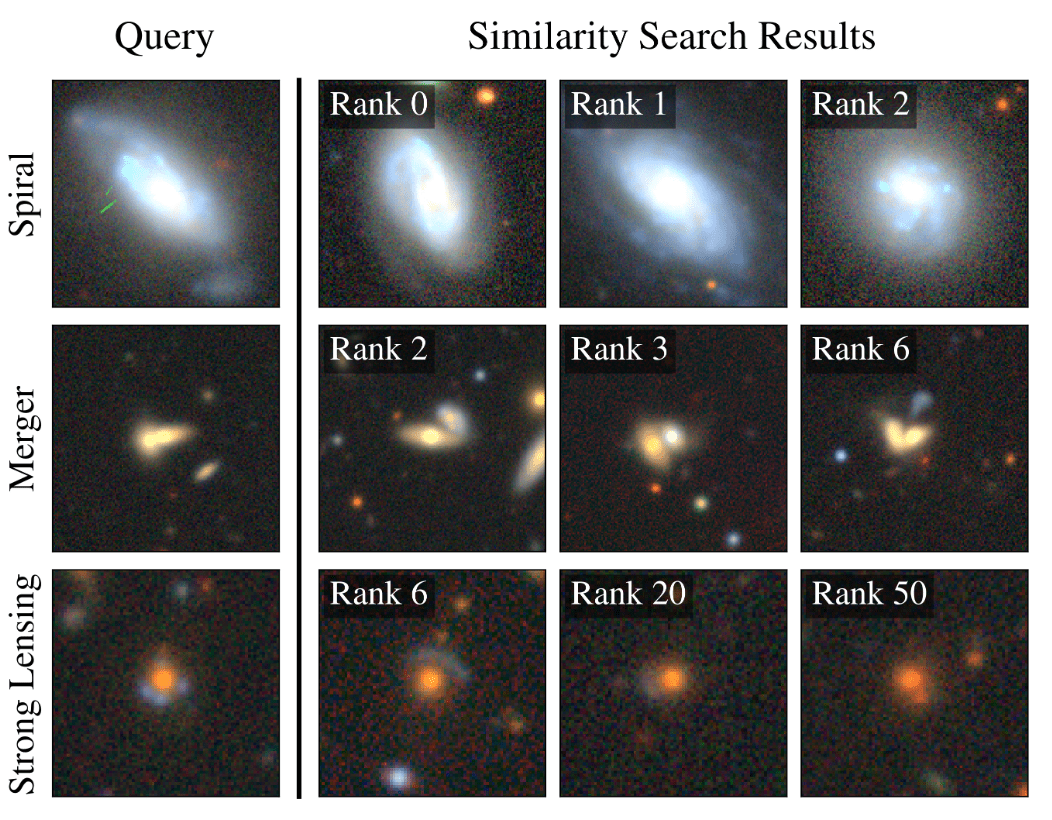

Example-based retrieval from mean pooling embeddings
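Example-based retrieval amounts to a nearest-neighbour search in embedding space. The sketch below ranks a small catalog by cosine similarity to a query embedding; the object names and 3-dimensional vectors are made up for illustration, and cosine similarity is an assumed choice of metric rather than something stated in the talk.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query, catalog, k=2):
    """Return the k catalog names whose embeddings are most similar to the query."""
    ranked = sorted(
        catalog,
        key=lambda name: cosine_similarity(query, catalog[name]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical mean-pooled embeddings, keyed by made-up object names.
catalog = {
    "spiral_a": [0.9, 0.1, 0.0],
    "spiral_b": [0.8, 0.2, 0.1],
    "elliptical_a": [0.1, 0.9, 0.2],
    "merger_a": [0.2, 0.1, 0.9],
}
query = [1.0, 0.15, 0.05]  # embedding of the example object to search with
neighbours = retrieve(query, catalog, k=2)
```

For survey-sized catalogs the brute-force sort would typically be replaced by an approximate nearest-neighbour index, but the principle is the same.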

Why are such Embedding Models useful for Scientists?

- Never have to retrain my own neural networks from scratch

- Existing pre-trained models would already be near optimal, no matter the task at hand

- Saves a lot of time and energy

- Practical large-scale deep learning, even in the few-example regime

- Searching for very rare objects in large astronomical surveys becomes possible

- If the information is embedded in a space where it becomes linearly accessible, very simple analysis tools are enough for downstream analysis

Polymathic's recipe for developing Multimodal Scientific Models

Takeaways

Engagement with Scientific Communities

Data Curation And Aggregation

Dedicated ML R&D

Follow us online!

Thank you for listening!

Multimodal Foundation Models for Scientific Data

By eiffl

Polymathic AI talk at FM4Science Conference
