Foundation Models for Science: Myth or Reality?

François Lanusse

CNRS Researcher @ AIM, CEA Paris-Saclay

Polymathic AI

SCOPE: Science at the Convergence of AI and Exascale computing, March 11th

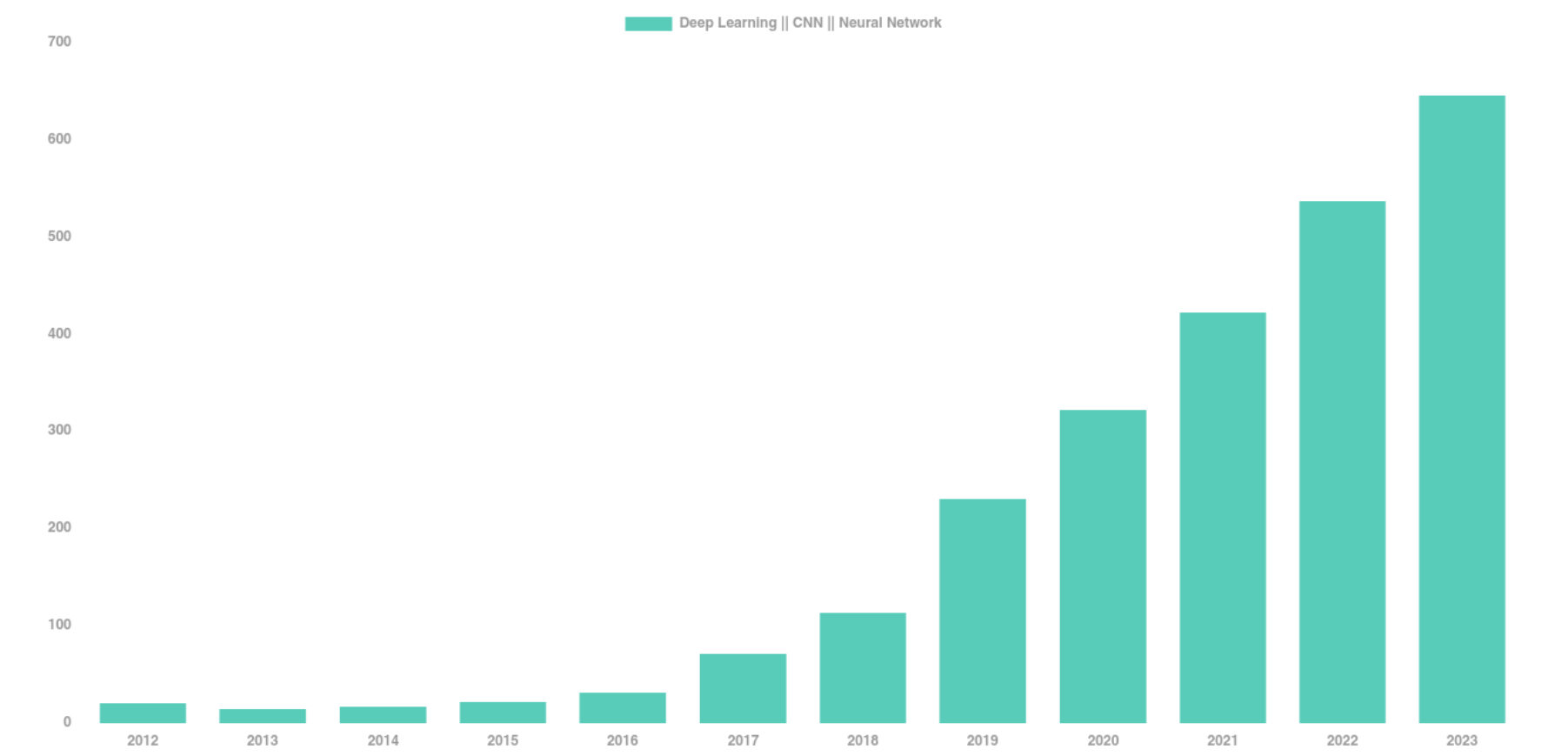

The Deep Learning Boom in Astrophysics

astro-ph abstracts mentioning Deep Learning, CNN, or Neural Networks

The vast majority of these results have relied on supervised learning and networks trained from scratch.

The Limits of Traditional Deep Learning

Limited Supervised Training Data

- Rare or novel objects have, by definition, few labeled examples

- In Simulation-Based Inference (SBI), training a neural compression model requires many simulations

Limited Reusability

- Existing models are trained in a supervised way, on one specific task and one specific dataset.

=> In practice, this limits how easily deep learning can be used for analysis and discovery.

Meanwhile, in Computer Science...

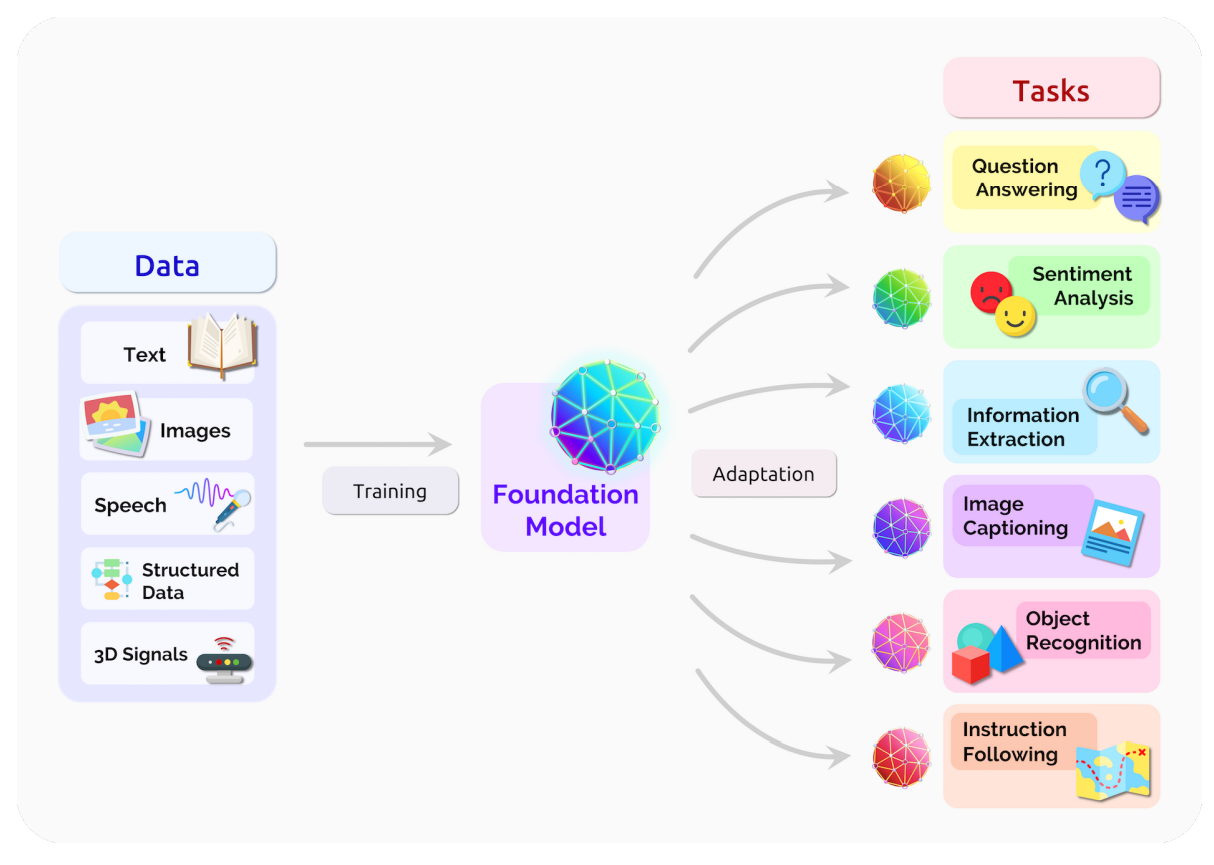

The Rise of The Foundation Model Paradigm

Foundation Model approach

- Pretrain models on pretext tasks, without supervision, on very large-scale datasets.

- Adapt pretrained models to downstream tasks.

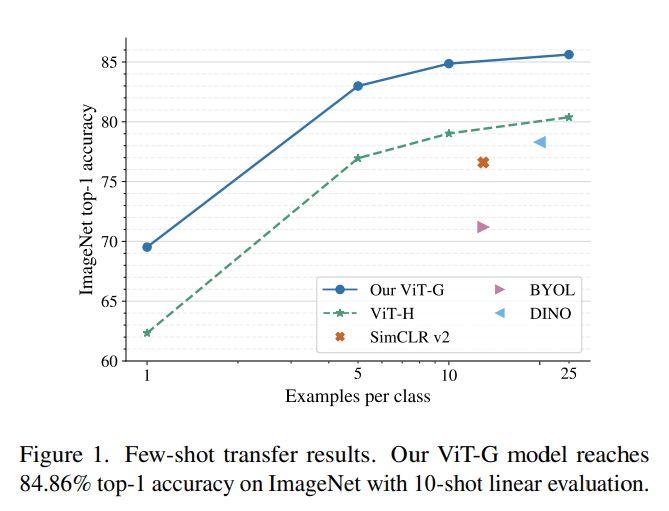

The Advantage of Scale of Data and Compute

Easy Downstream Adaptation

What This New Paradigm Could Mean for Us

- Never have to retrain my own neural networks from scratch

- Existing pre-trained models would already be near-optimal, no matter the task at hand

- Practical large-scale deep learning even in the very-few-example regime

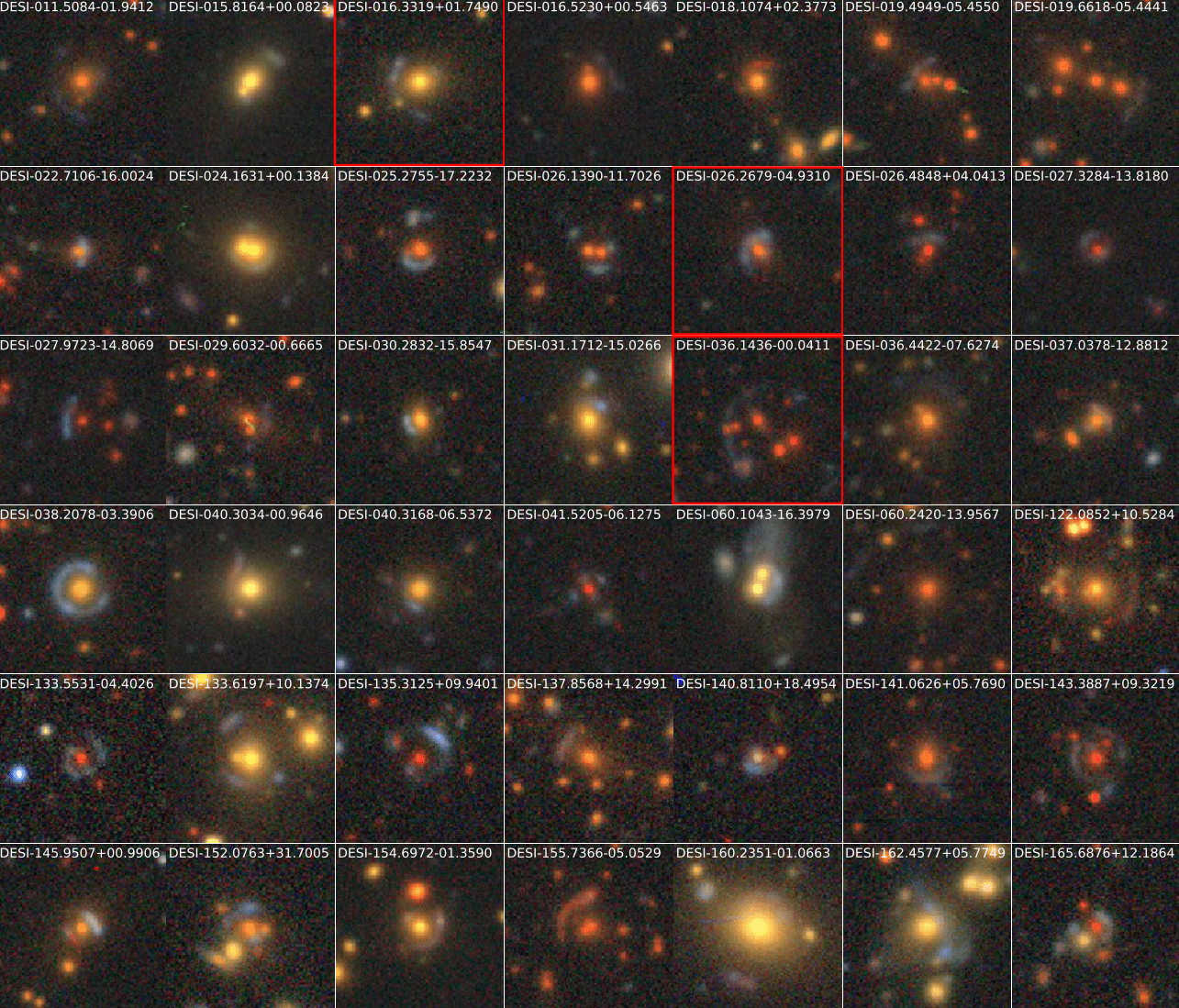

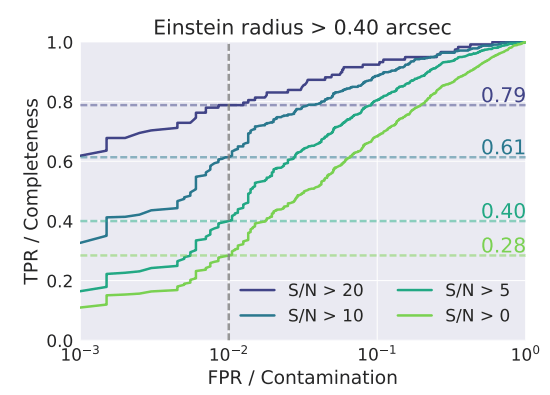

- Searching for very rare objects in large surveys like Euclid or LSST becomes possible

- If the information is embedded in a space where it becomes linearly accessible, very simple analysis tools are enough for downstream analysis

- In the future, survey pipelines may add vector embeddings of detected objects to catalogs; these would be enough for most tasks, without the need to go back to pixels

AION-1

Omnimodal Foundation Model for Astronomical Surveys

Accepted at NeurIPS 2025, spotlight presentation at NeurIPS 2025 AI4Science Workshop

Project led by: François Lanusse, Liam Parker, Jeff Shen, Tom Hehir, Ollie Liu, Lucas Meyer, Sebastian Wagner-Carena, Helen Qu, Micah Bowles

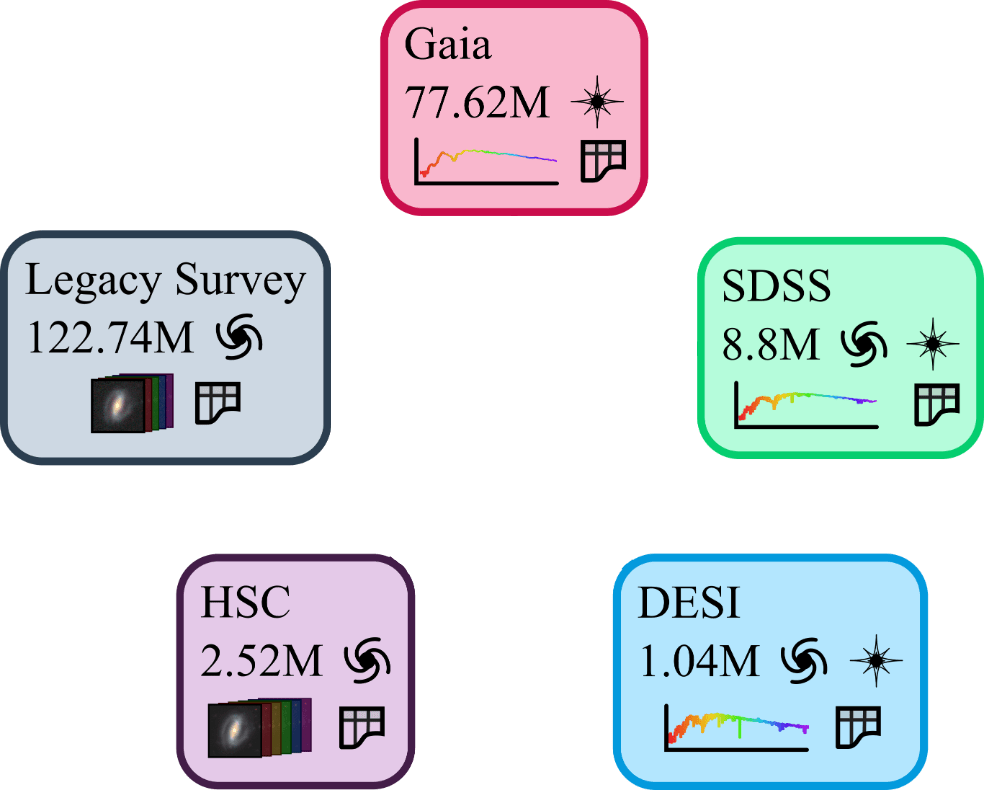

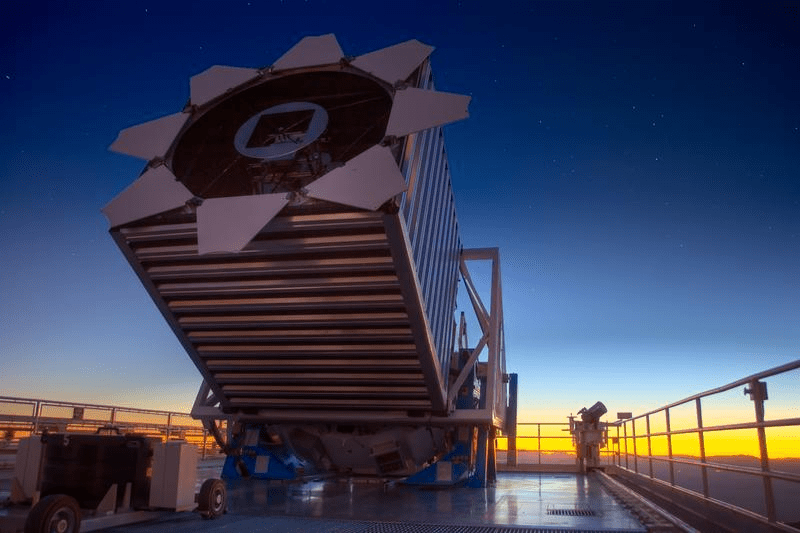

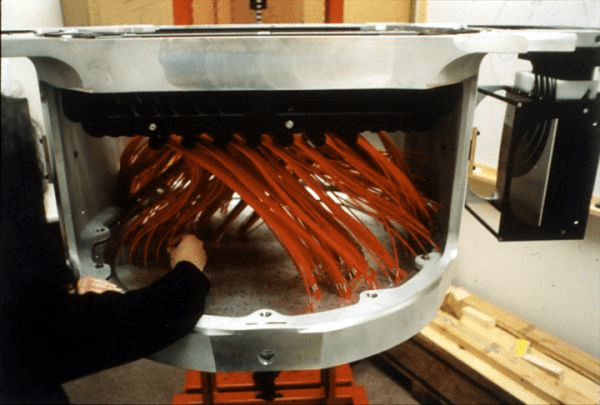

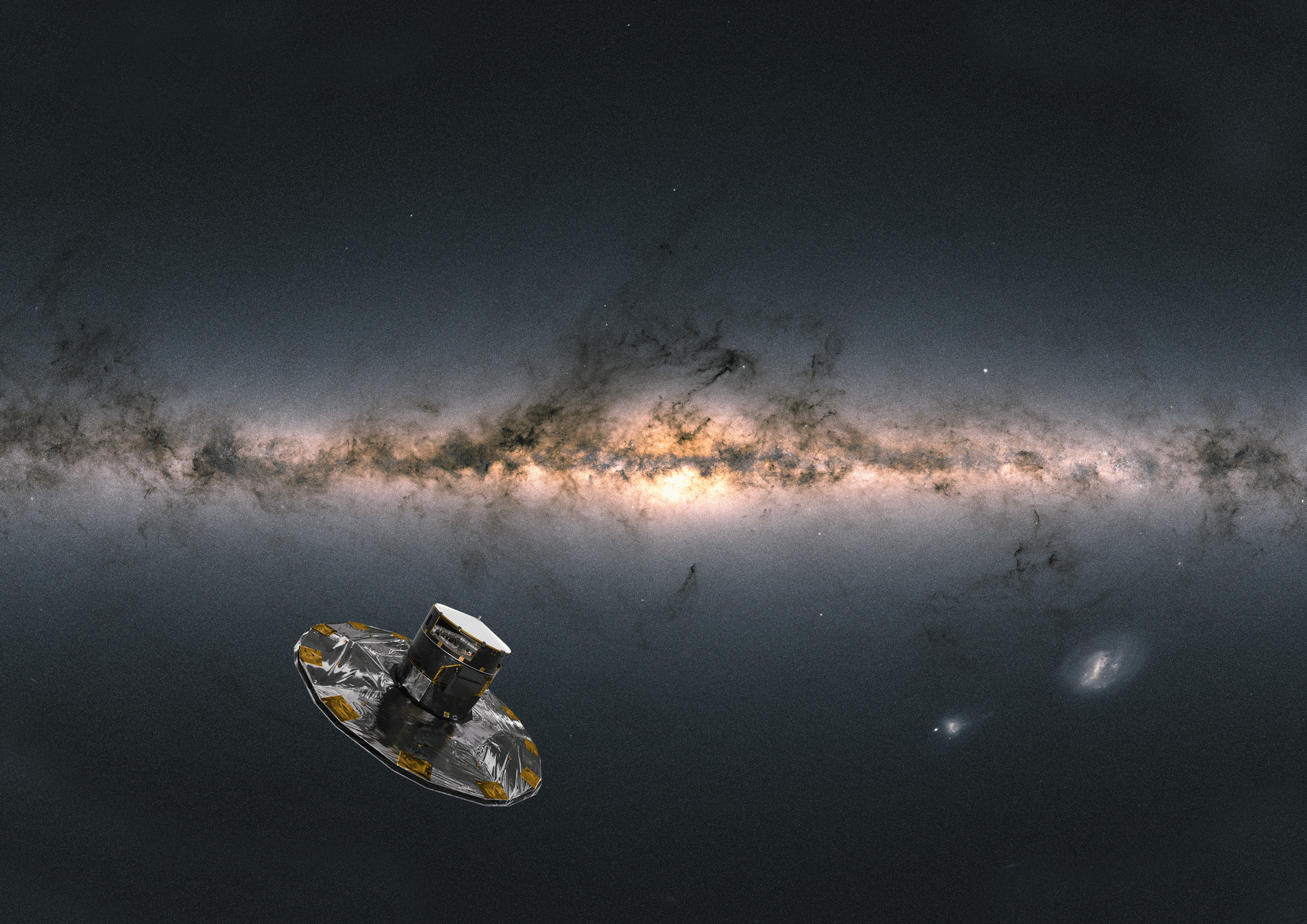

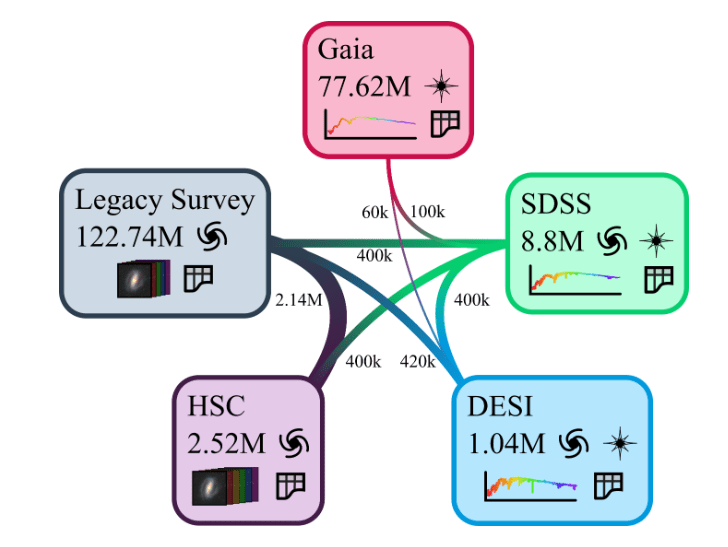

The AION-1 Data Pile

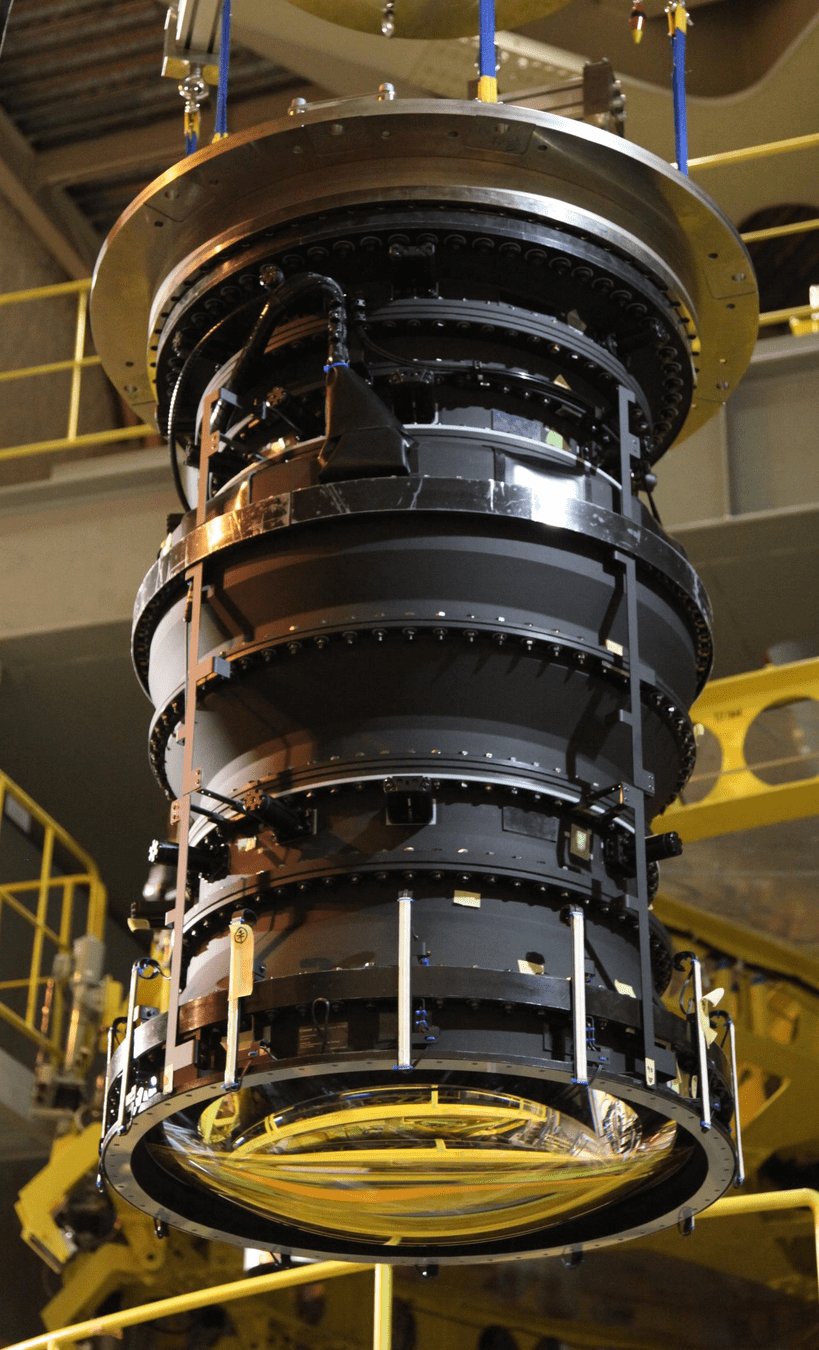

(Blanco Telescope and Dark Energy Camera. Credit: Reidar Hahn/Fermi National Accelerator Laboratory)

(Subaru Telescope and Hyper Suprime Cam. Credit: NAOJ)

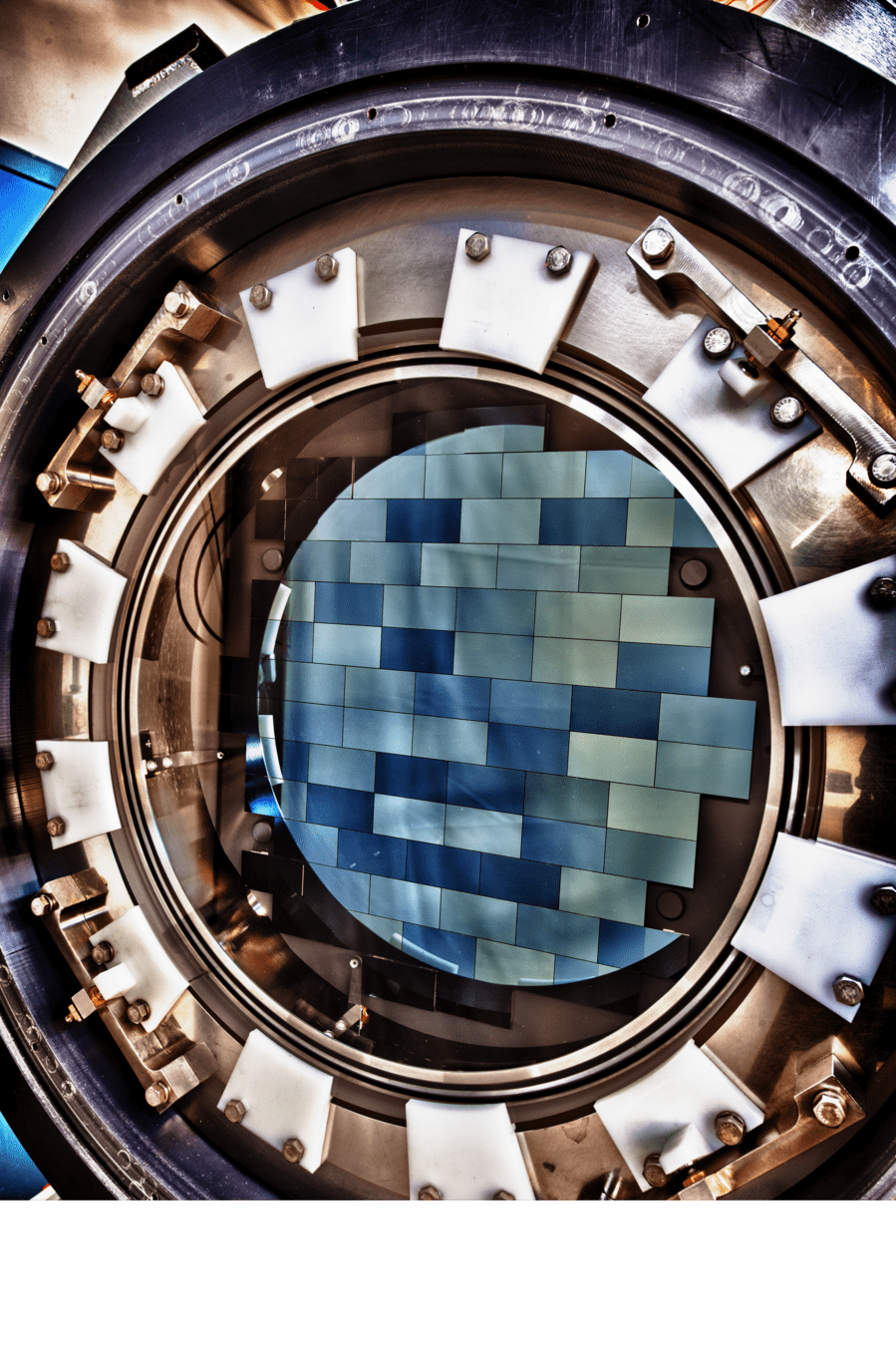

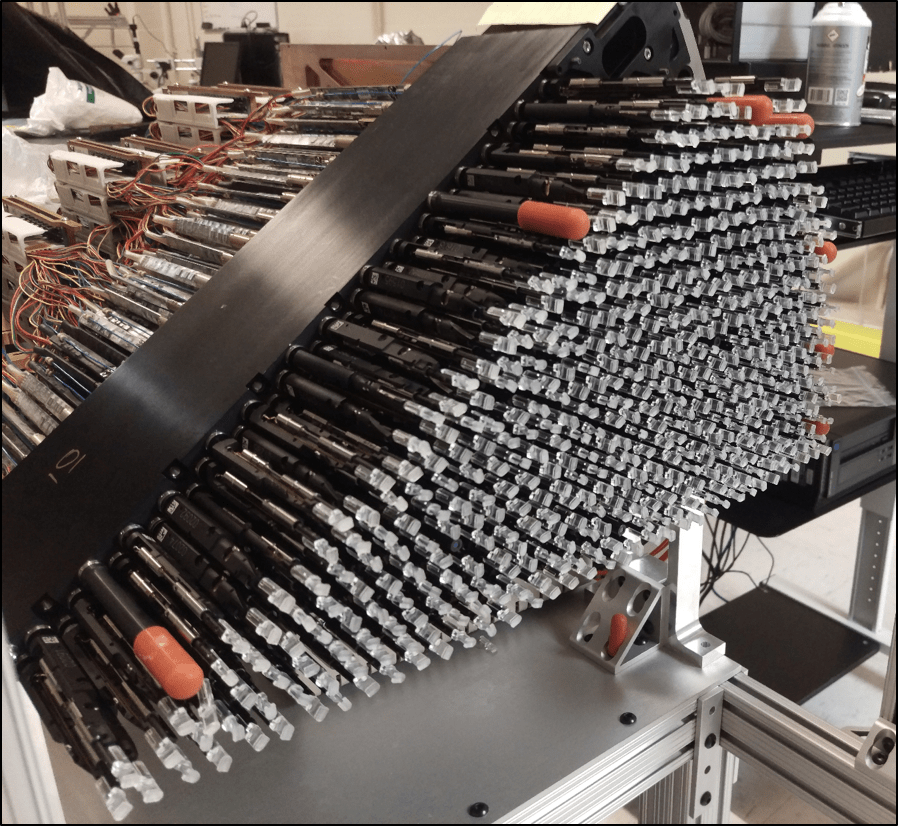

(Dark Energy Spectroscopic Instrument)

(Sloan Digital Sky Survey. Credit: SDSS)

(Gaia Satellite. Credit: ESA/ATG)

Cuts: extended, full color griz, z < 21

Cuts: extended, full color grizy, z < 21

Cuts: parallax / parallax_error > 10
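The selection cuts listed above are simple boolean masks over catalog columns. Below is a minimal sketch with NumPy. `parallax` and `parallax_error` mirror the Gaia quantities named on the slide. The `is_extended` flag, the per-band magnitude dict, and the reading of "full color" as "a finite magnitude in every listed band" are assumptions made for illustration, not the actual AION-1 data pipeline.

```python
import numpy as np


def gaia_parallax_cut(parallax, parallax_error, min_snr=10.0):
    """Keep sources whose parallax signal-to-noise ratio exceeds min_snr."""
    parallax = np.asarray(parallax, dtype=float)
    parallax_error = np.asarray(parallax_error, dtype=float)
    return parallax / parallax_error > min_snr


def imaging_cut(is_extended, band_mags, z_max=21.0):
    """Keep extended sources measured in every band and brighter than z_max in z.

    band_mags: hypothetical dict mapping band name ("g", "r", ...) to a
    magnitude array, with NaN where the band is missing ("full color" check).
    """
    keep = np.asarray(is_extended, dtype=bool).copy()
    for mags in band_mags.values():
        keep &= np.isfinite(mags)          # require a measurement in each band
    keep &= np.asarray(band_mags["z"]) < z_max
    return keep
```

For the grizy variant, the same function applies with a "y" entry added to `band_mags`.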

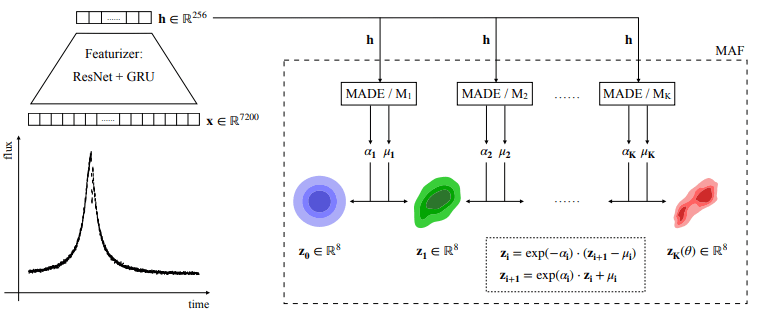

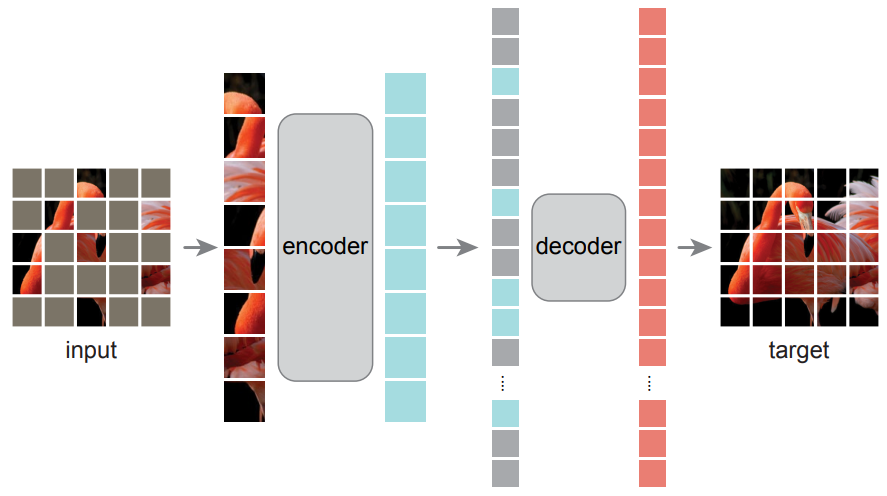

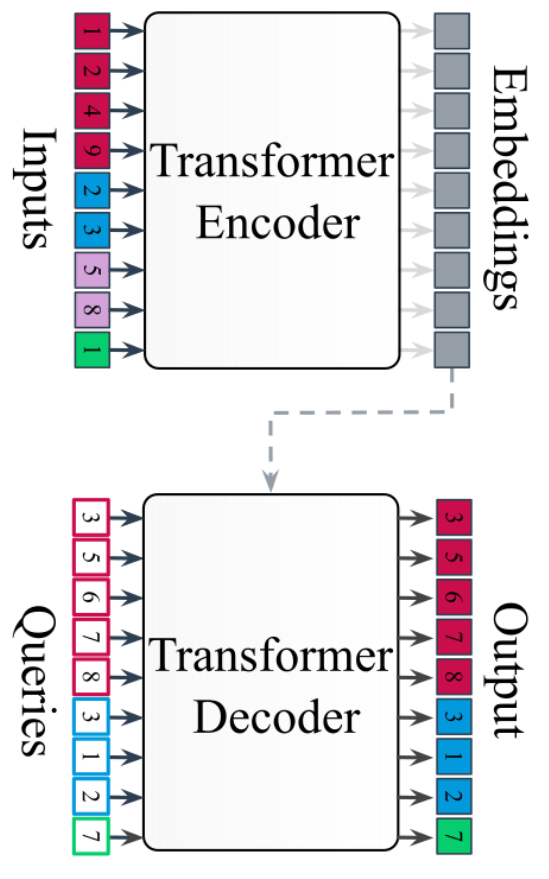

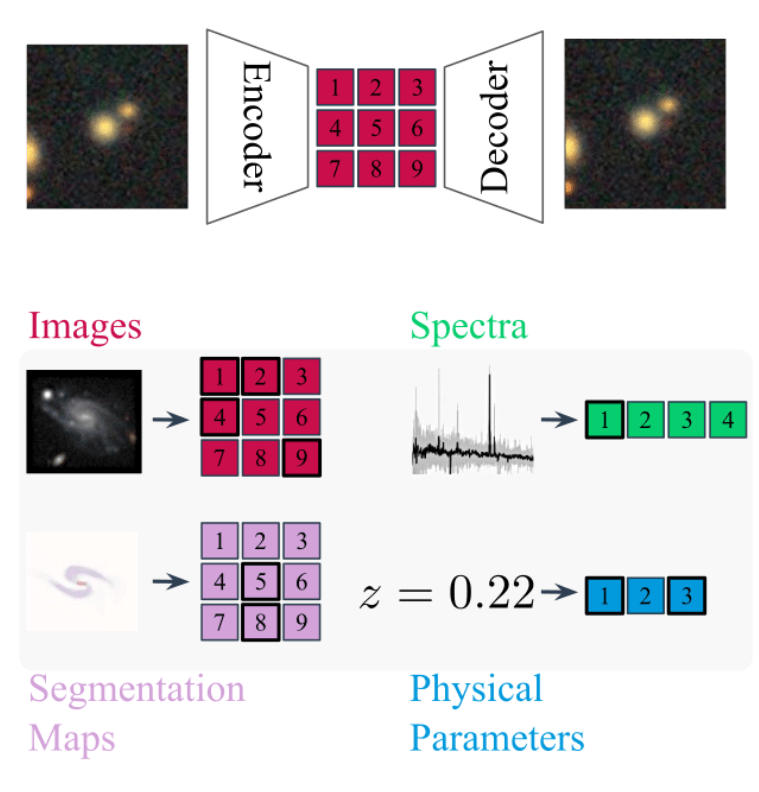

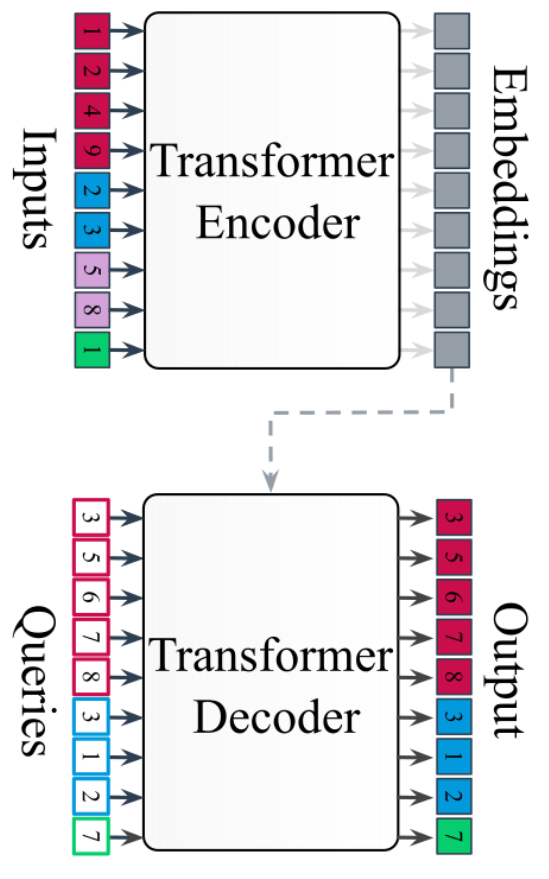

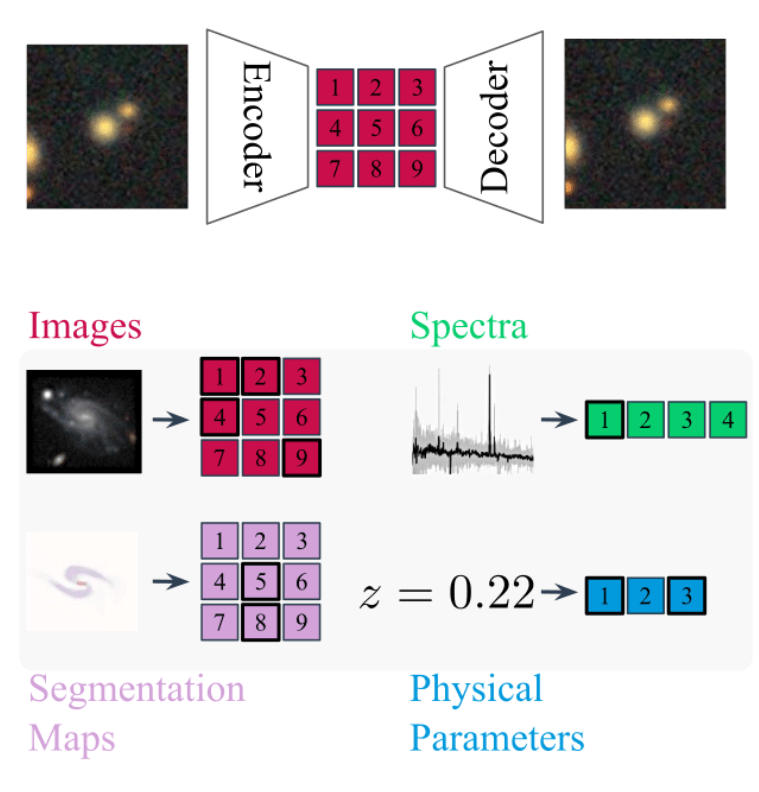

Any-to-Any Modeling with Generative Masked Modeling

- Given a standardized and cross-matched dataset, we can feed the data to a large Transformer encoder-decoder

- Flexible to any combination of input data, and can be prompted to generate any output

- Model is trained by cross-modal generative masked modeling

=> Learns the joint distribution and all conditional distributions of the provided modalities:

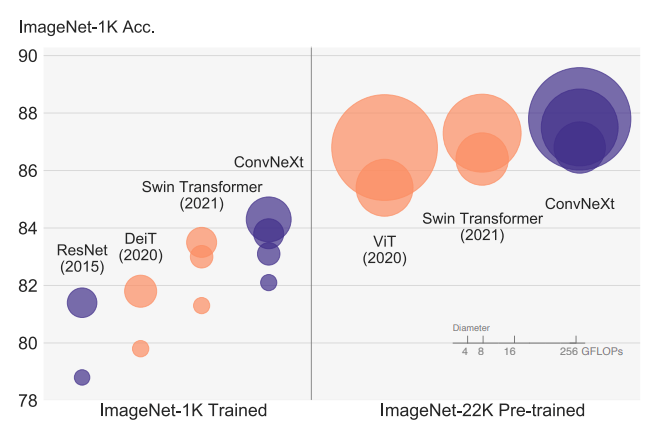

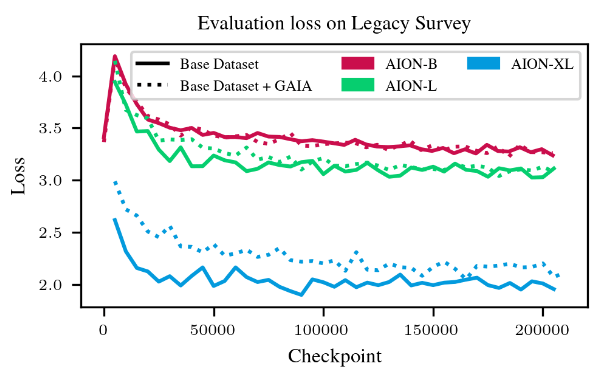
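To make the training objective more concrete, here is a minimal sketch of how one training example for cross-modal masked modeling could be assembled. It assumes each modality has already been tokenized into integer ids. The function name, the dict layout, and the single `input_fraction` knob are illustrative assumptions, not AION-1's actual masking scheme. A Transformer encoder-decoder would then be trained to predict the target tokens from the input tokens. Because the split is redrawn at random across all modalities for every example, the model sees many different (inputs, targets) partitions. This is what lets it approximate the conditional distribution of any subset of modalities given any other.

```python
import numpy as np


def make_masked_example(tokens_by_modality, rng, input_fraction=0.5):
    """Randomly split one object's tokens into visible inputs and masked targets.

    tokens_by_modality: dict mapping a modality name to a 1D array of token ids.
    Returns (inputs, targets) as lists of (modality, position, token_id) triples.
    The split ignores modality boundaries, so a draw can hide a whole modality
    (translation) or only part of one (completion).
    """
    triples = [(name, pos, int(tok))
               for name, toks in tokens_by_modality.items()
               for pos, tok in enumerate(toks)]
    order = rng.permutation(len(triples))
    n_in = max(1, int(round(input_fraction * len(triples))))
    inputs = [triples[i] for i in order[:n_in]]
    targets = [triples[i] for i in order[n_in:]]
    return inputs, targets


rng = np.random.default_rng(0)
example = {"image": np.arange(6), "spectrum": np.arange(100, 104)}
inputs, targets = make_masked_example(example, rng)
```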

AION-1 family of models

- Models trained as part of the 2024 Jean Zay Grand Challenge, following the extension of Jean Zay with a new partition of 1,400 H100 GPUs

- AION-1 Base: 300M parameters (64 H100s, 1.5 days)

- AION-1 Large: 800M parameters (100 H100s, 2.5 days)

- AION-1 XLarge: 3B parameters (288 H100s, 3.5 days)
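To give a sense of the compute involved, the wall-clock figures above convert to GPU-hours by multiplying the number of GPUs by the duration in hours. This sketch assumes every GPU was busy for the full period. The results are therefore rough totals derived from the slide's numbers, not separately reported figures.

```python
# (number of H100s, wall-clock days) as quoted on the slide
runs = {
    "AION-1 Base": (64, 1.5),
    "AION-1 Large": (100, 2.5),
    "AION-1 XLarge": (288, 3.5),
}


def gpu_hours(n_gpus, days):
    """Total GPU time, assuming all GPUs run for the whole wall-clock period."""
    return n_gpus * days * 24


for name, (n_gpus, days) in runs.items():
    print(f"{name}: {gpu_hours(n_gpus, days):,.0f} H100-hours")
# AION-1 Base: 2,304 H100-hours
# AION-1 Large: 6,000 H100-hours
# AION-1 XLarge: 24,192 H100-hours
```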

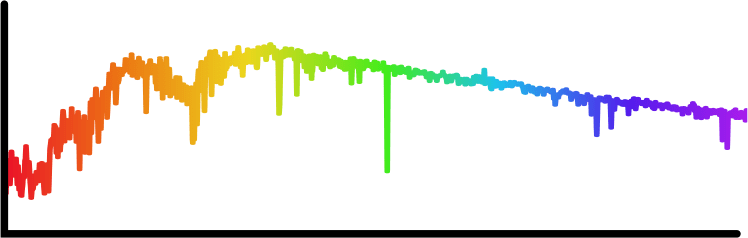

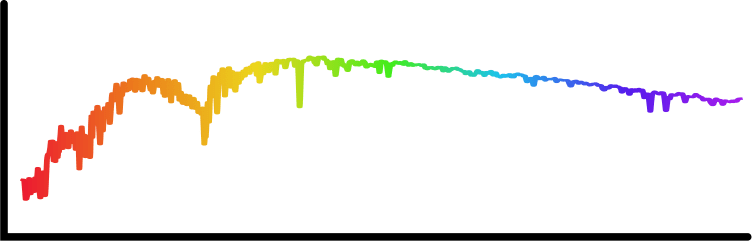

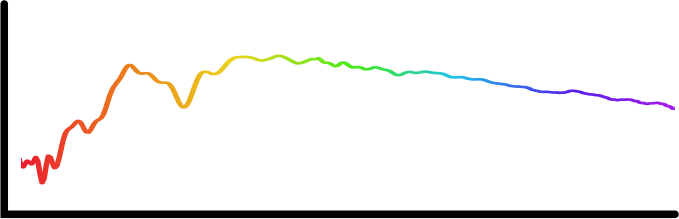

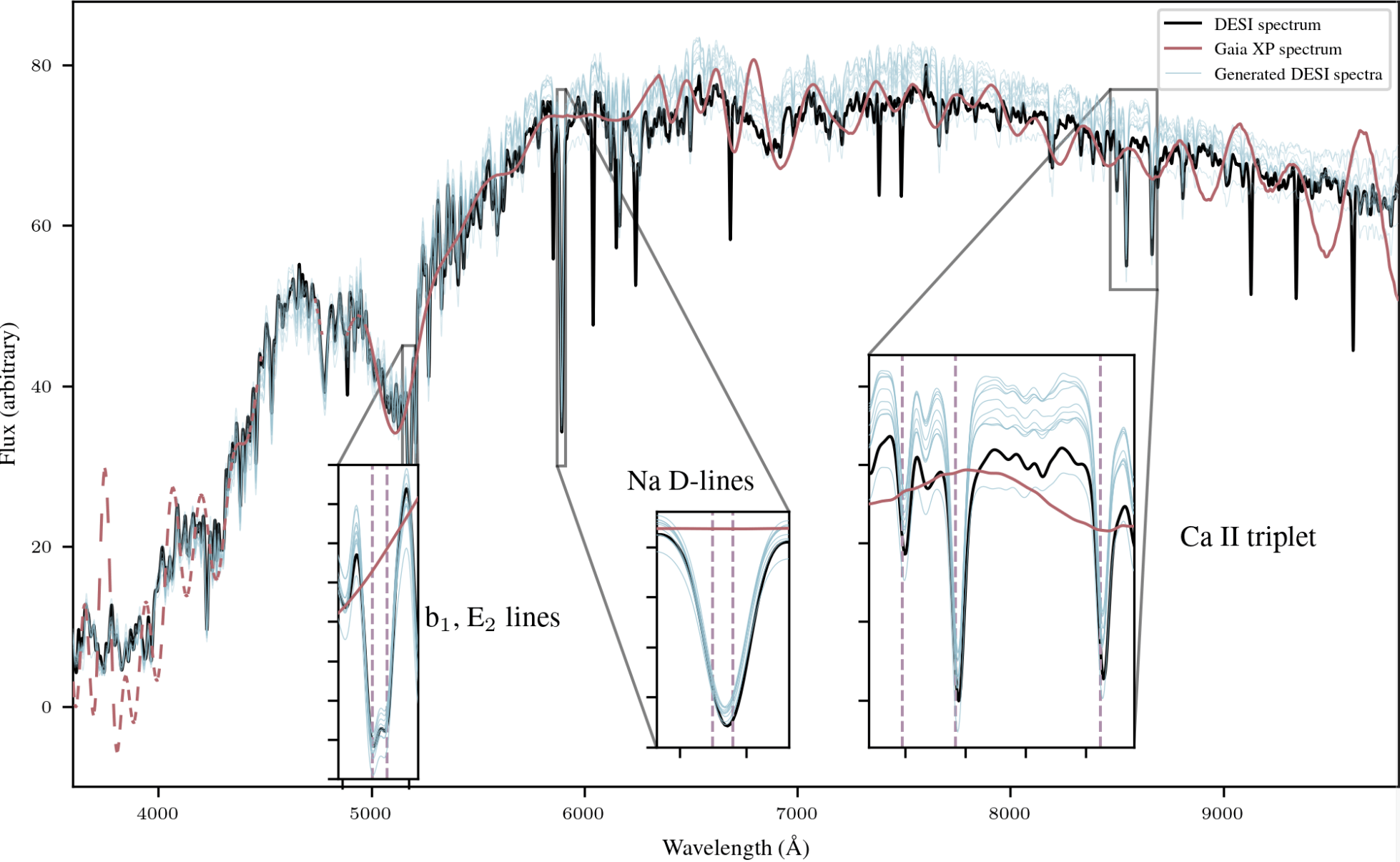

Example of out-of-the-box capabilities

Survey translation

Spectrum super-resolution

Rethinking the way we use Deep Learning

Conventional scientific workflow with deep learning

- Build a large training set of realistic data

- Design a neural network architecture for your data

- Deal with data preprocessing/normalization issues

- Train your network on some GPUs for a day or so

- Apply your network to your problem

- Throw the network away...

=> Because it's completely specific to your data, and to the one task it's trained for.

Conventional researchers @ CMU

Circa 2016

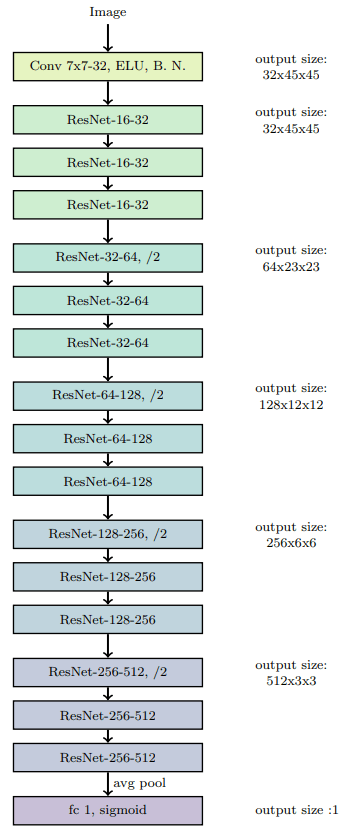

CMU DeepLens (Lanusse et al. 2017)

Rethinking the way we use Deep Learning

Foundation Model-based Scientific Workflow

- Build a small training set of realistic data

- Design a neural network architecture for your data (already taken care of)

- Deal with data preprocessing/normalization issues (already taken care of)

- Adapt a model in a matter of minutes

- Apply your model to your problem

- Throw the network away...

=> Because it's completely specific to your data, and to the one task it's trained for.

=> Let's discuss embedding-based adaptation

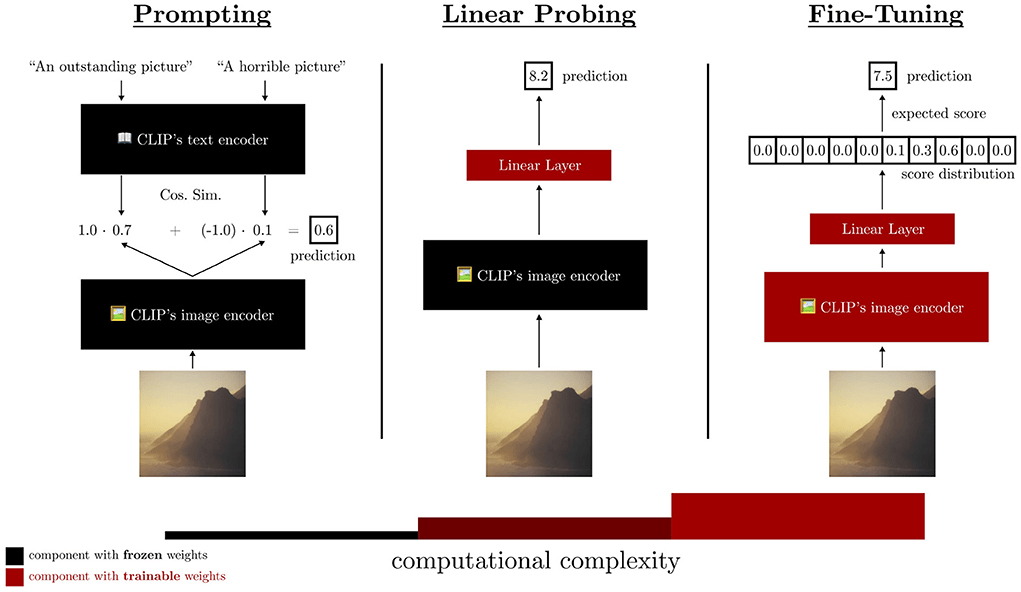

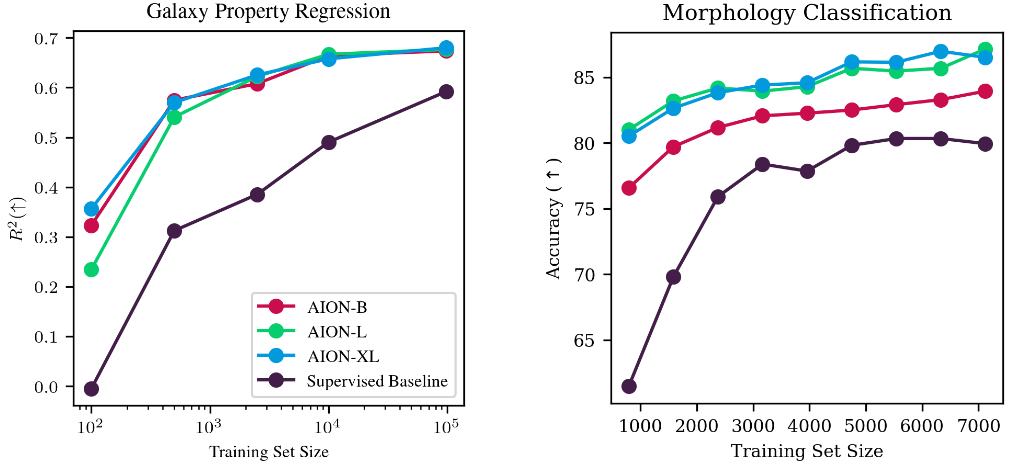

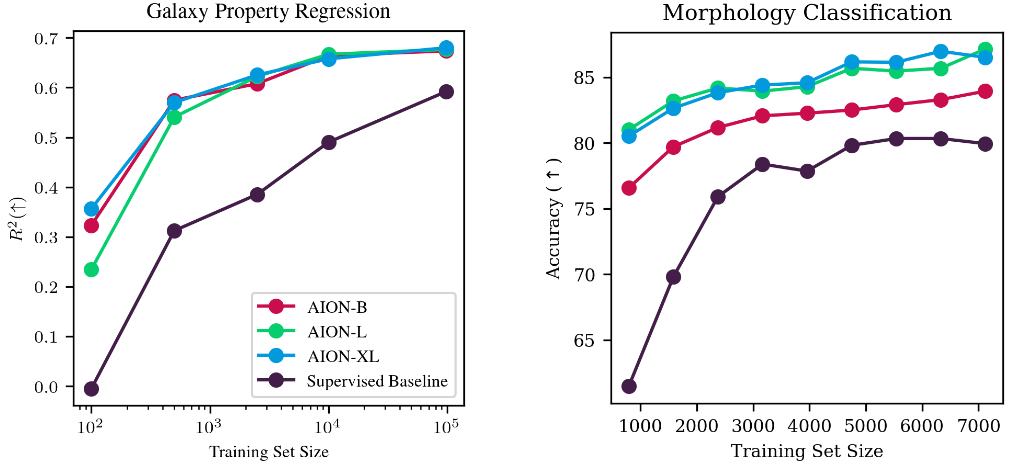

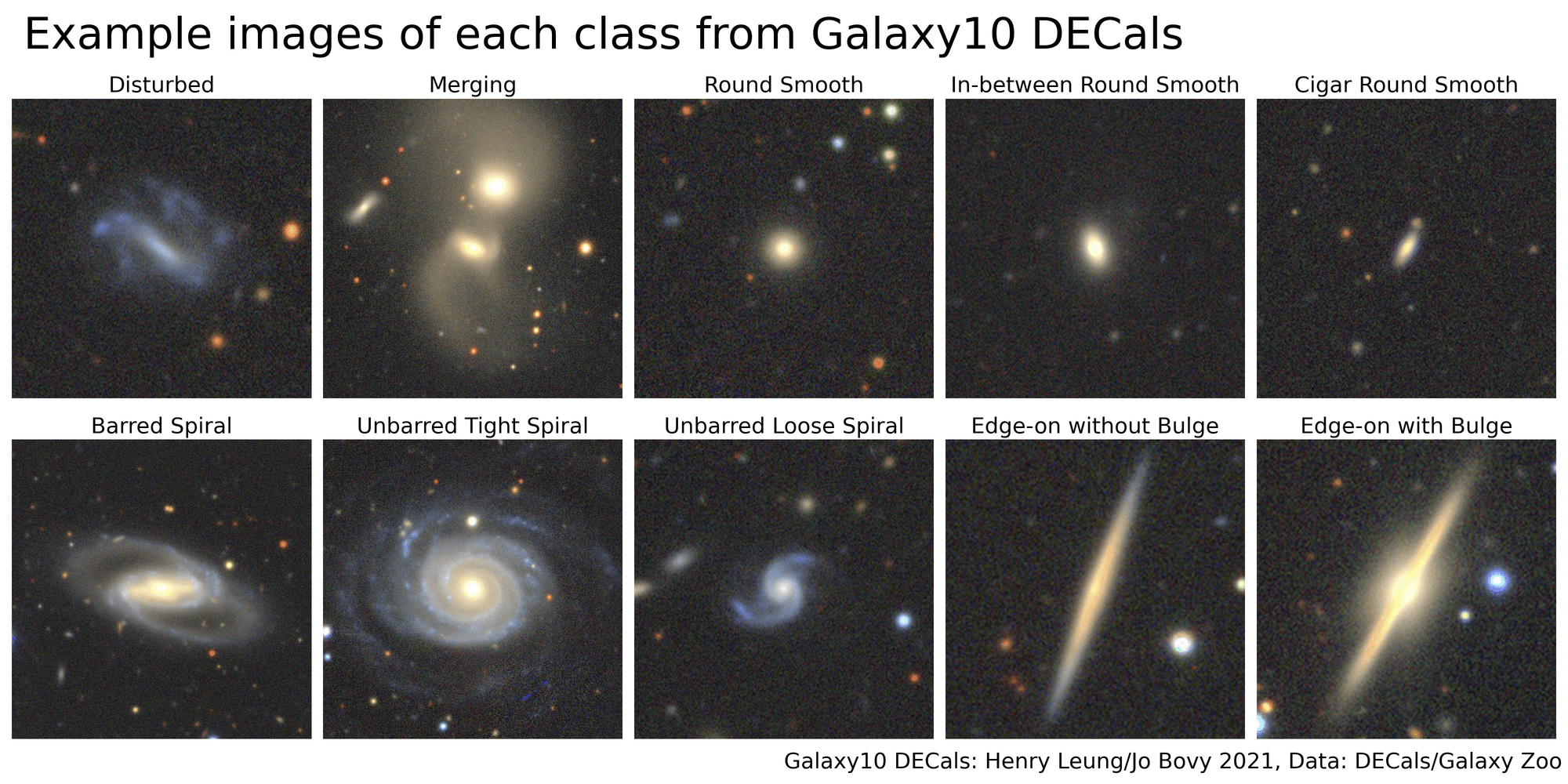

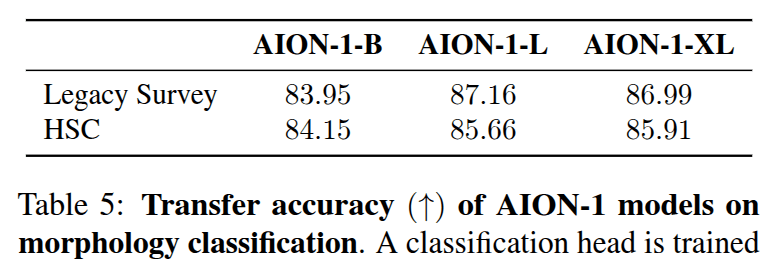

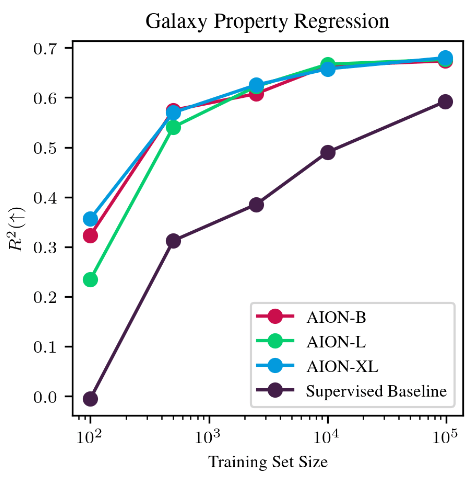

Adaptation of AION embeddings

Adaptation at low cost with simple strategies:

- Mean pooling + linear probing

- Attentive pooling

- Can be used trivially on any input data AION was trained on

- Flexible to a varying number and type of inputs

=> Allows for trivial data fusion

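# Illustrative pseudocode from the slide: Tokenize and FineTunedModel are
# shorthand for the embedding-based adaptation workflow, not a documented API.
x_train = Tokenize(hsc_images, modality='HSC')   # HSC images -> AION tokens

model = FineTunedModel(base='Aion-B',            # pretrained AION-1 Base backbone
                       adaptation='AttentivePooling')

model.fit(x_train, y_train)                      # fit the lightweight adaptation

y_test = model.predict(x_test)                   # x_test tokenized the same way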
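The two adaptation strategies listed above can be sketched directly on frozen token embeddings. This minimal NumPy version assumes the backbone has already produced embeddings of shape (N objects, T tokens, D dims) plus a padding mask. It is not the AION-1 package API. In practice the attention query of `attentive_pool` would be learned jointly with the downstream head; here it is simply passed in. The linear probe is written as closed-form ridge regression for a continuous target. A classification task such as morphology would use logistic regression on the same pooled features instead.

```python
import numpy as np


def mean_pool(tokens, mask):
    """Average token embeddings over the real (unpadded) tokens of each object.

    tokens: (N, T, D) frozen embeddings; mask: (N, T), 1 for real tokens, 0 for padding.
    """
    m = mask[..., None].astype(tokens.dtype)
    return (tokens * m).sum(axis=1) / np.maximum(m.sum(axis=1), 1.0)


def attentive_pool(tokens, mask, query):
    """Single-query attention pooling: softmax(tokens @ query / sqrt(D)) weighted mean.

    query: (D,) vector (learned in practice). Assumes each object has >= 1 real token.
    """
    d = tokens.shape[-1]
    scores = tokens @ query / np.sqrt(d)              # (N, T) attention logits
    scores = np.where(mask > 0, scores, -np.inf)      # padding gets zero weight
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return (weights[..., None] * tokens).sum(axis=1)  # (N, D) pooled features


def fit_linear_probe(features, y, l2=1e-3):
    """Closed-form ridge regression on frozen features, with a bias column."""
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    A = X.T @ X + l2 * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ y)


def predict_linear_probe(features, w):
    """Apply the fitted probe (last weight is the bias)."""
    X = np.hstack([features, np.ones((features.shape[0], 1))])
    return X @ w
```

Both pooling operators only need a token axis and a mask. Under this sketch, data fusion therefore amounts to concatenating the token embeddings (and masks) of several modalities along axis 1 before pooling, e.g. `np.concatenate([flux_tokens, image_tokens], axis=1)`. The array names are hypothetical.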

Morphology classification by Linear Probing

Trained on ->

Eval on ->

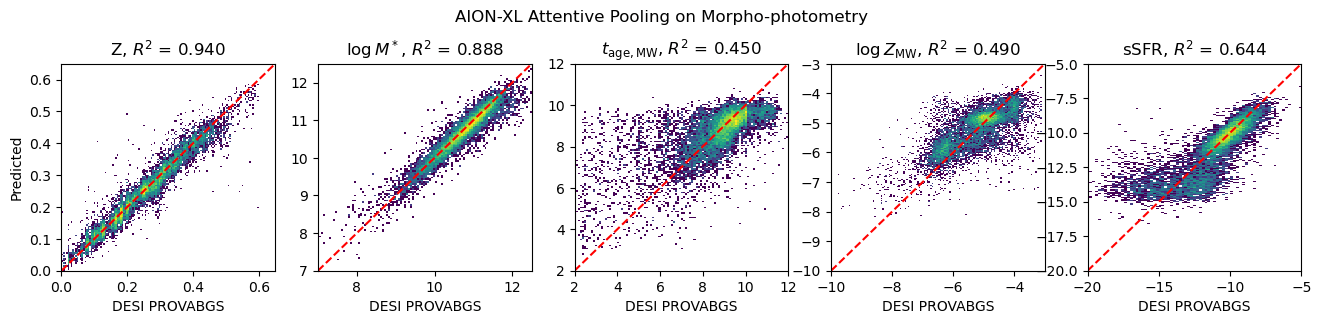

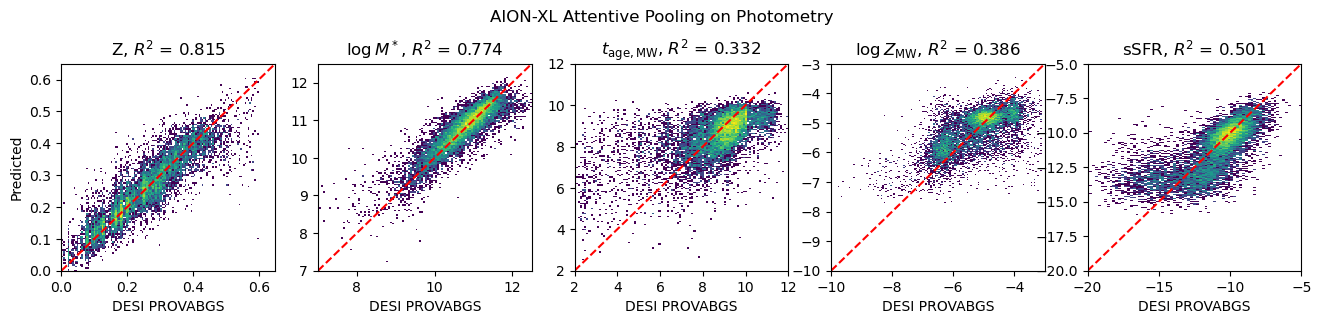

Physical parameter estimation and data fusion

Inputs:

measured fluxes

Inputs:

measured fluxes + image

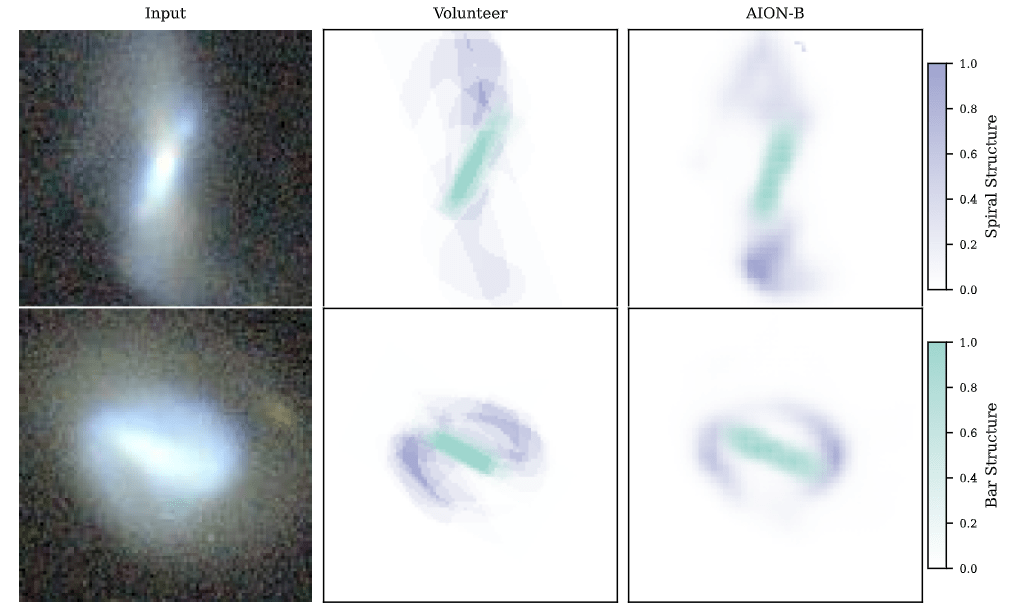

Semantic segmentation

Segmenting central bar and spiral arms in galaxy images based on Galaxy Zoo 3D

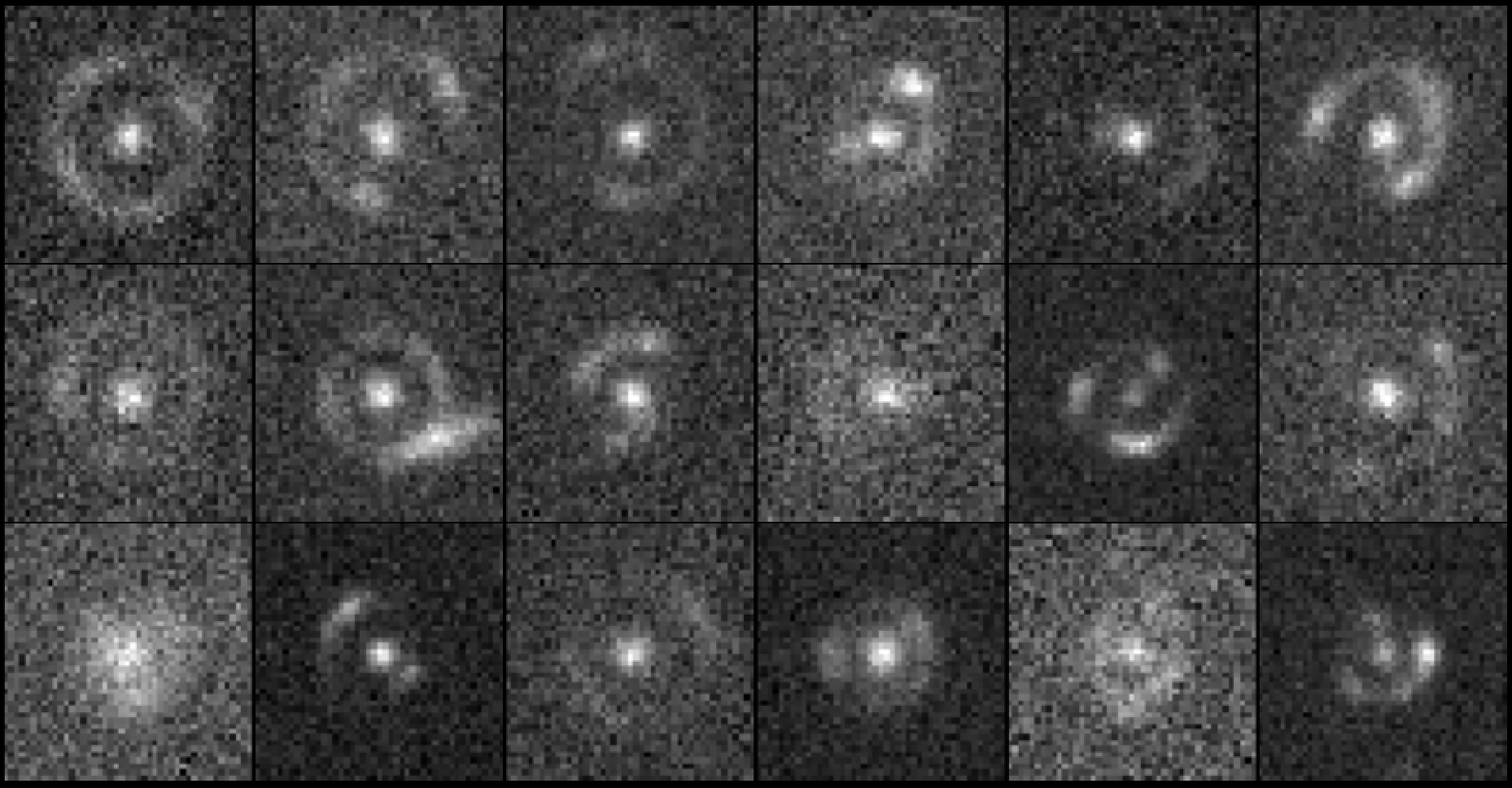

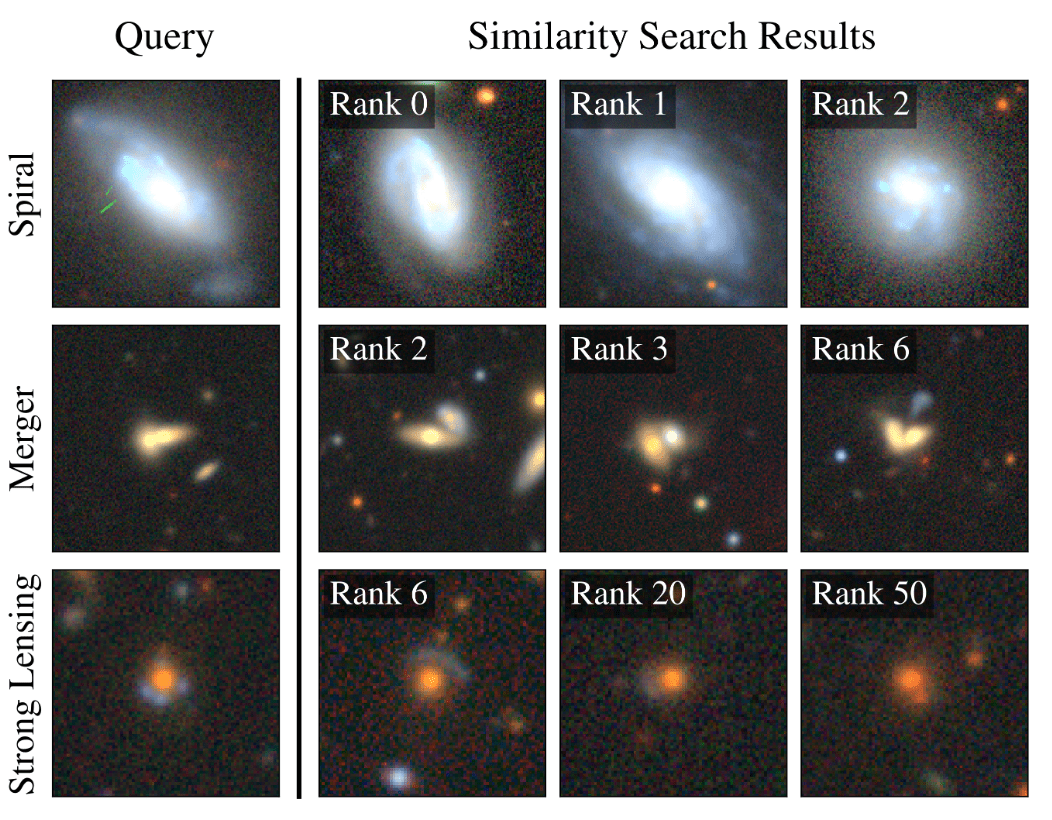

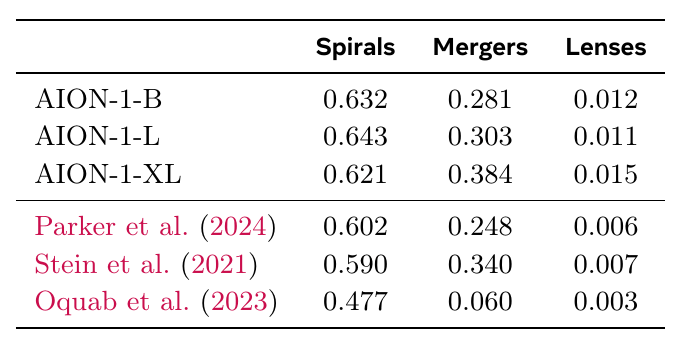

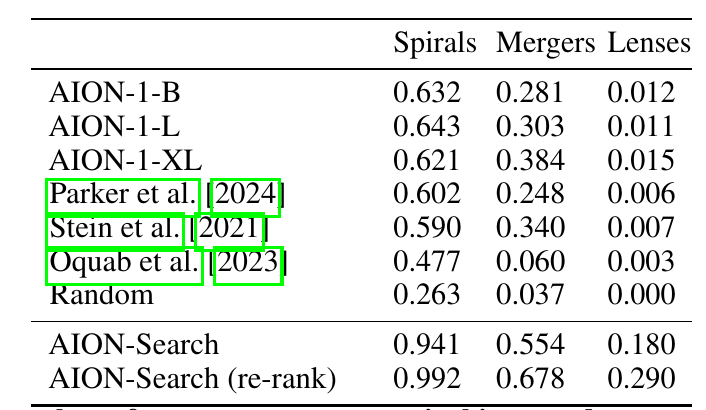

Example-based retrieval

nDCG@10 score

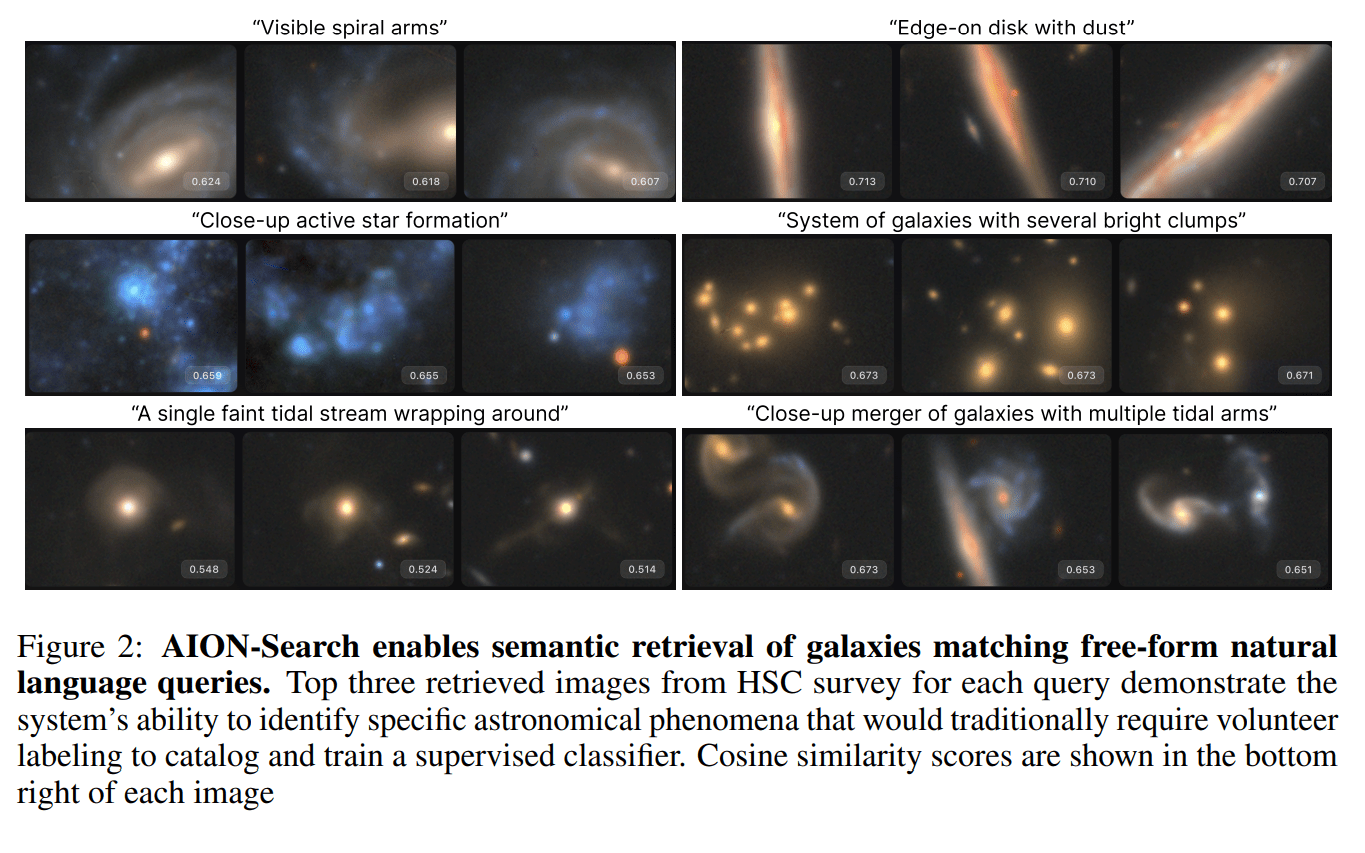
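Example-based retrieval can be sketched as nearest-neighbour search in embedding space, scored with nDCG@10. nDCG@10 is the discounted cumulative gain of the top 10 results divided by that of the best possible ordering. This sketch makes three assumptions: cosine similarity between embeddings, a graded relevance label for every object in the search bank, and linear gains (some nDCG variants use 2^rel - 1 instead). It is not necessarily the exact evaluation protocol behind the scores shown.

```python
import numpy as np


def cosine_top_k(query, bank, k=10):
    """Indices of the k embeddings in bank most cosine-similar to query, best first."""
    q = query / np.linalg.norm(query)
    b = bank / np.linalg.norm(bank, axis=1, keepdims=True)
    return np.argsort(-(b @ q))[:k]


def ndcg_at_k(ranking, relevance, k=10):
    """nDCG@k with linear gains and 1/log2(rank + 1) discounts.

    ranking: retrieved indices, best first; relevance: graded label for every
    item in the bank, so the ideal ordering accounts for all relevant items.
    """
    relevance = np.asarray(relevance, dtype=float)
    gains = relevance[np.asarray(ranking)[:k]]
    discounts = 1.0 / np.log2(np.arange(2, k + 2))    # ranks 1..k
    dcg = float(np.sum(gains * discounts[: len(gains)]))
    ideal = np.sort(relevance)[::-1][:k]
    idcg = float(np.sum(ideal * discounts[: len(ideal)]))
    return dcg / idcg if idcg > 0 else 0.0
```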

AION-Search: Natural Language Semantic Retrieval

Spotlight at 2025 NeurIPS AI4Science Workshop

Nolan Koblischke

nDCG@10 score

https://aion-search.github.io

Takeaways

- Scientific Foundation Models are not a myth

- They are genuinely useful tools for shortening the time to science and discovery.

- At the same time, there is no magic here

- We have yet to see discoveries only made possible by the existence of a Foundation Model (at least in astrophysics)

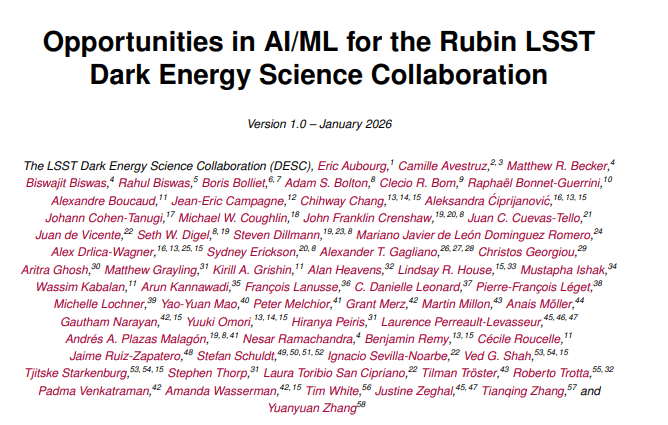

- Survey of emerging ML/AI methods for a large astronomical collaboration (LSST DESC)

Follow us online!

Thank you for listening!

By eiffl

SCOPE: Science at the Convergence of AI and Exascale computing 10-11 Mar 2026 Paris (France)
