Reinforcement Learning

Sequential Decision Making Under Uncertainty

Learning Without a Teacher

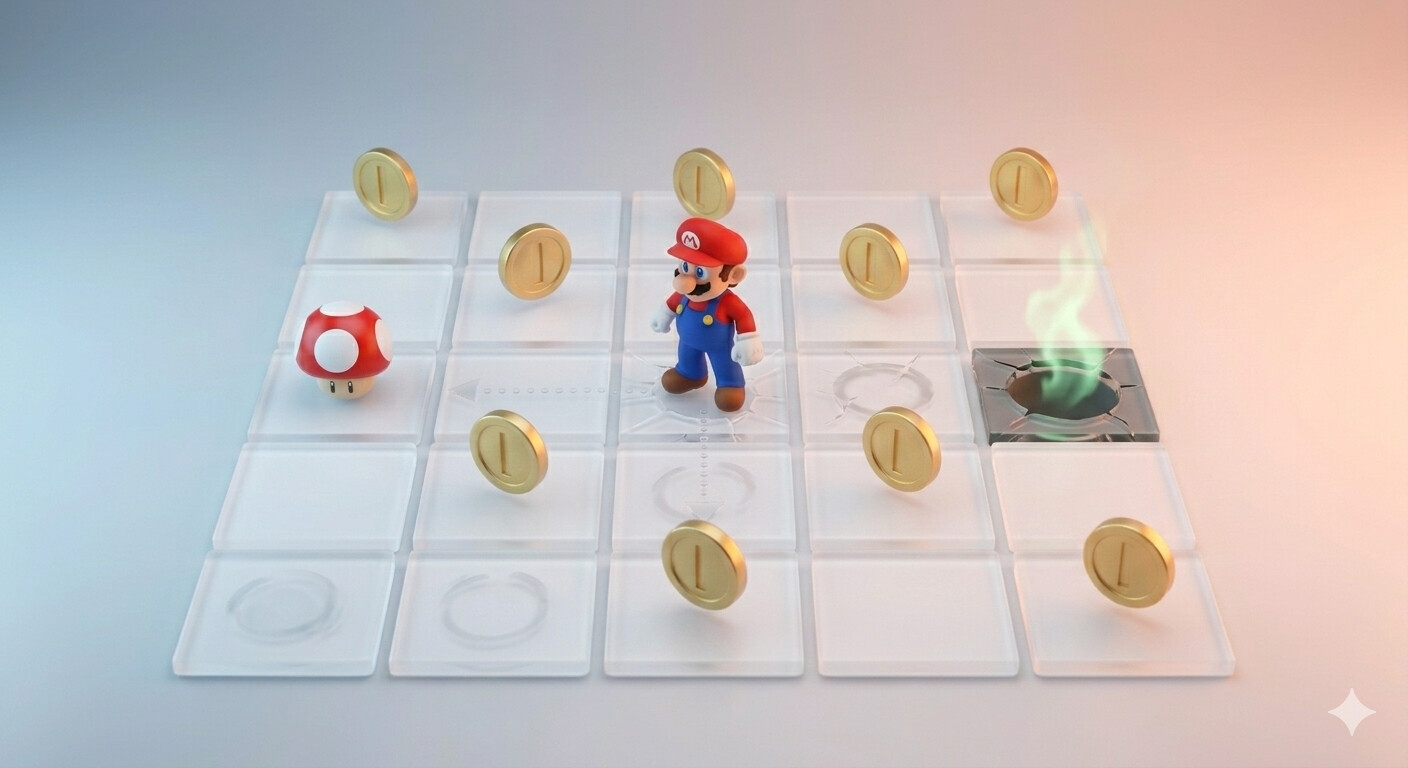

Imagine dropping Mario into this grid with no manual, no walkthrough.

- No one tells him which squares are safe

- Each step: maybe a coin (+1), maybe a pit (−10)

- The only feedback: what happened?

This is reinforcement learning: learning by doing.

Three Learning Paradigms

| | Supervised | Unsupervised / Generative | Reinforcement |

|---|---|---|---|

| Data | Labeled dataset | Unlabeled dataset | Collected through actions |

| Feedback | Correct answer (label) | None; find structure | Reward signal (good/bad) |

| Goal | Predict \(y\) from \(x\) | Model \(P(x)\) or generate | Maximize total reward |

| Analogy | Studying from a textbook | Finding patterns in raw data | Learning to ride a bike |

Today's Roadmap

The RL Mindset

Learning by doing

Why Is RL Hard?

Credit, data, efficiency, exploration

The MDP Framework

Formalizing \(\mathcal{S}, \mathcal{A}, T, R, \gamma\)

Modeling & Rewards

Applying & designing rewards

We'll feel why RL is hard, then formalize the tools to reason about it, and see that the hardest modeling choice is the reward.

The RL Loop

The agent observes its state and takes an action; the environment returns a new state and a reward; then the cycle repeats.
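A minimal sketch of this loop in Python. The `GridEnv` class, its one-dimensional layout, and the `run_episode` helper are hypothetical stand-ins for the Mario grid; only the +1 coin and −10 pit rewards come from the opening slide.

```python
import random

class GridEnv:
    """Hypothetical 1-D corridor: start at cell 2; cell 0 is a pit, cell 4 holds a coin."""
    PIT, START, COIN = 0, 2, 4

    def reset(self):
        self.pos = self.START
        return self.pos

    def step(self, action):
        """action is -1 (left) or +1 (right); returns (next_state, reward, done)."""
        self.pos += action
        if self.pos == self.COIN:
            return self.pos, 1.0, True
        if self.pos == self.PIT:
            return self.pos, -10.0, True
        return self.pos, 0.0, False

def run_episode(env, choose_action, max_steps=100):
    """One pass through the loop: act, observe the new state and reward, repeat."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = choose_action(state)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward

random.seed(0)
print(run_episode(GridEnv(), lambda s: random.choice([-1, 1])))  # agent with no knowledge
print(run_episode(GridEnv(), lambda s: +1))  # always step right: reaches the coin
```

The agent never sees the layout; all it gets back from `step` is the new state and the reward.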

Policy: The Agent's Strategy

A policy \(\pi\) maps states to actions: what should the agent do in each situation?

Deterministic: \(\pi(s) = a\)

State: coin to the right, pit ahead

Stochastic: \(\pi(a \mid s) = \Pr(a_t = a \mid s_t = s)\)

State: coin to the right, pit ahead
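The two kinds of policy can be sketched as lookup tables. The state name, the action set, and the probabilities below are illustrative assumptions, not values from the slides.

```python
import random

ACTIONS = ["left", "right", "forward"]

def deterministic_policy(state):
    """pi(s) = a: the same state always yields the same action."""
    table = {"coin_right_pit_ahead": "right"}
    return table[state]

def stochastic_policy(state):
    """pi(a | s): a probability distribution over actions for each state."""
    table = {"coin_right_pit_ahead": {"left": 0.1, "right": 0.8, "forward": 0.1}}
    return table[state]

def sample_action(dist, rng):
    """Draw one action according to the probabilities in dist."""
    actions = list(dist)
    weights = [dist[a] for a in actions]
    return rng.choices(actions, weights=weights, k=1)[0]

rng = random.Random(0)
print(deterministic_policy("coin_right_pit_ahead"))
print(sample_action(stochastic_policy("coin_right_pit_ahead"), rng))
```

The stochastic version usually heads for the coin but occasionally tries something else, which matters later for exploration.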

A Trajectory Unfolds

Reward \(R(s, a)\)

Transition \(T(s,a,s')\)

Policy \(\pi(s)\)

A trajectory (rollout) of horizon \(h\): \(\quad \tau = (s_0, a_0, r_0,\; s_1, a_1, r_1,\; \ldots,\; s_{h-1}, a_{h-1}, r_{h-1})\)

The goal of RL: find the policy \(\pi^*\) that maximizes expected total reward.
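Written out with the trajectory notation above, and assuming an undiscounted finite horizon \(h\) (discounting enters with the MDP framework), the goal reads:

\[
\pi^* = \arg\max_{\pi} \; \mathbb{E}_{\tau \sim \pi}\left[ \sum_{t=0}^{h-1} r_t \right],
\qquad r_t = R(s_t, a_t), \quad a_t \sim \pi(\cdot \mid s_t), \quad s_{t+1} \sim T(s_t, a_t, \cdot)
\]

The expectation is over trajectories: randomness can come from the policy, from the transitions, or from both.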

Why Is RL Hard?

The challenges of learning by doing

The Credit Assignment Problem

Scenario: We win a chess game after 50 moves.

Which moves deserve credit? The opening? The mid-game sacrifice? The final checkmate?

In supervised learning (SL): every example has its own label, so credit is immediate

In RL: a single reward might result from a sequence of actions

Made worse when rewards are sparse: in chess, the only reward is win/lose at the very end

Cooking analogy: Our cake tastes terrible. Was it the flour ratio? The oven temperature? Taking it out too early?

Each step was a decision, and the feedback only comes at the end.
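A small sketch of why sparse rewards make credit hard to assign. It uses the return \(G_t\) defined later in the MDP section; the 50-move episode mirrors the chess scenario, and the helper name `rewards_to_go` is an assumption.

```python
def rewards_to_go(rewards, gamma=1.0):
    """Return G_t = sum_k gamma^k * r_{t+k} for every step t."""
    returns = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns

# Sparse chess-like episode: 49 moves with no reward, then +1 for the win.
sparse = [0.0] * 49 + [1.0]
returns = rewards_to_go(sparse)
print(set(returns))  # every move sees the same return: {1.0}
```

The opening, the sacrifice, and the checkmate all receive the same number, so one game alone cannot say which move mattered.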

Self-Generated Data

In SL, the training data is fixed. In RL, the agent creates its own data.

A bad policy doesn't just perform badly; it corrupts the data it learns from next.

Sample Efficiency

RL needs an enormous amount of experience:

- AlphaGo: ~30 million games of self-play

- OpenAI Five (Dota 2): ~10,000 years of in-game experience

all from interaction, not passive data collection.

Compare with supervised / self-supervised learning:

- CLIP: ~400 million image-text pairs

- GPT-3: ~300 billion tokens of text

from scraping the internet.

[Figure labels: RL Agent; Computer Vision; NLP; Internet-scale data]

Real-world cost: We can simulate Go games for free, but we can't simulate surgery, driving, or customer interactions as cheaply.

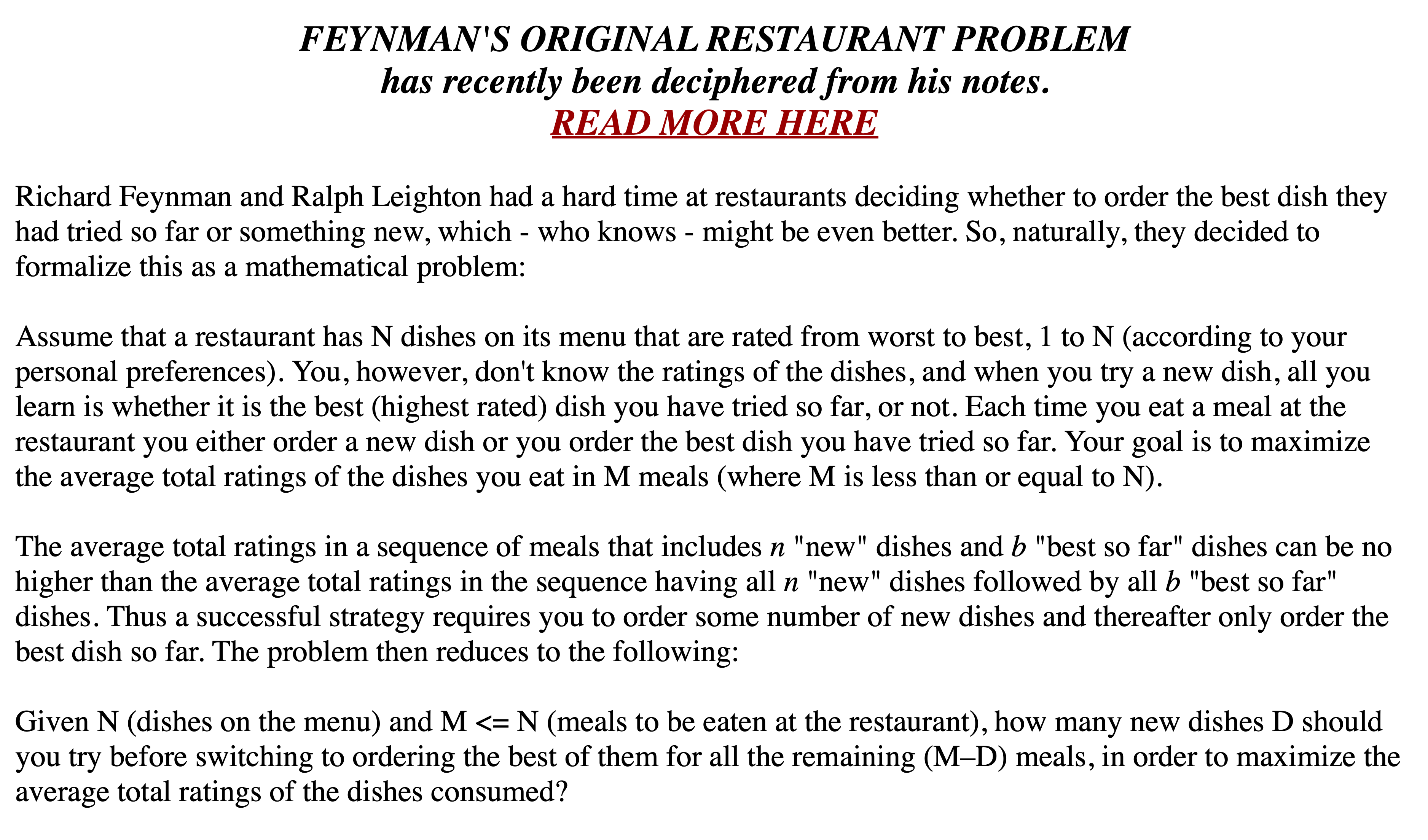

Exploration vs. Exploitation

[Seinfeld, S06E09]

[feynmanlectures.caltech.edu]

Exploit: stick with the best option we know | Explore: try something new to gather info

Exploration has real costs: A/B tests give real users bad experiences, medical trials risk patient safety, self-driving cars risk lives.
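One common, simple way to balance the two is epsilon-greedy. The slides do not name a strategy, so this is an illustration only: explore with probability `epsilon`, otherwise exploit the best current estimate. The bandit setup and payout probabilities are hypothetical.

```python
import random

def epsilon_greedy(estimates, epsilon, rng):
    """With probability epsilon explore (random arm); otherwise exploit the best estimate."""
    if rng.random() < epsilon:
        return rng.randrange(len(estimates))
    return max(range(len(estimates)), key=lambda i: estimates[i])

def run_bandit(true_means, epsilon, steps, seed=0):
    """Play a Bernoulli bandit; return total reward, pull counts, and value estimates."""
    rng = random.Random(seed)
    counts = [0] * len(true_means)
    estimates = [0.0] * len(true_means)
    total = 0.0
    for _ in range(steps):
        arm = epsilon_greedy(estimates, epsilon, rng)
        reward = 1.0 if rng.random() < true_means[arm] else 0.0  # Bernoulli payout
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # running average
        total += reward
    return total, counts, estimates

total, counts, estimates = run_bandit([0.3, 0.7], epsilon=0.1, steps=1000)
print(total, counts, estimates)
```

Setting `epsilon=0` never explores, so the agent can lock onto whichever arm happened to pay first; setting it to 1 never uses what was learned.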

The MDP Framework

Formalizing the RL problem

Markov Decision Processes

The future depends only on the current state, not how we got there.

| Symbol | Name | Meaning |

|---|---|---|

| \(\mathcal{S}\) | State space | All possible configurations of the world |

| \(\mathcal{A}\) | Action space | What the agent can do |

| \(T(s, a, s')\) | Transitions | \(\Pr(\text{next state } s' \mid \text{state } s, \text{action } a)\) |

| \(R(s, a)\) | Reward | Immediate payoff for action \(a\) in state \(s\) |

| \(\gamma \in [0,1]\) | Discount | How much future reward is worth relative to now |

Objective: maximize expected return \(\mathbb{E}[G_t]\), where \(G_t = \sum_{k=0}^{\infty} \gamma^k r_{t+k}\).
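A worked example of the return for a short, finite episode. The reward sequence (two coins, then a pit) reuses the +1 and −10 values from the grid example; the three values of \(\gamma\) are chosen for illustration.

```python
def discounted_return(rewards, gamma):
    """G_0 = sum_k gamma^k * r_k over a finite list of rewards."""
    return sum((gamma ** k) * r for k, r in enumerate(rewards))

# Two coins, then a pit.
rewards = [1.0, 1.0, -10.0]
for gamma in (0.0, 0.5, 1.0):
    print(gamma, discounted_return(rewards, gamma))
```

With \(\gamma = 0\) the agent counts only the first coin (return 1.0). With \(\gamma = 0.5\) the pit already outweighs both coins (1 + 0.5 − 2.5 = −1.0). With \(\gamma = 1\) the return is −8.0.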

MDP vs. Reinforcement Learning

RL = partially unknown MDP: some components, typically \(T\) and \(R\), are not given to the agent in advance


The agent must learn a good policy from experience alone.
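When \(T\) is unknown, one simple option is to estimate it from counts of observed transitions. This is an illustration rather than a method from the slides, and the state and action names below are hypothetical.

```python
from collections import Counter, defaultdict

def estimate_transitions(experience):
    """Estimate T(s, a, s') as the empirical frequency of s' after taking a in s."""
    counts = defaultdict(Counter)
    for s, a, s_next in experience:
        counts[(s, a)][s_next] += 1
    return {
        sa: {s_next: n / sum(c.values()) for s_next, n in c.items()}
        for sa, c in counts.items()
    }

# Hypothetical logged transitions (state, action, next_state) from a slippery grid.
experience = [
    ("A", "right", "B"), ("A", "right", "B"), ("A", "right", "B"),
    ("A", "right", "A"),  # the move occasionally fails
    ("B", "right", "C"),
]
T_hat = estimate_transitions(experience)
print(T_hat[("A", "right")])  # {'B': 0.75, 'A': 0.25}
```

The estimate is only as good as the experience behind it: state-action pairs the agent never tried have no entry at all.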

Modeling a Walking Robot

| \(\mathcal{S}\) | Joint angles, velocity, balance |

| \(\mathcal{A}\) | Torque to each motor |

| \(T\) | Unknown; must learn by trying |

| \(R\) | +1 forward, −10 for falling |

| \(\gamma\) | High: avoiding a fall now matters, but so does walking far |

[Toddler robot, Russ Tedrake thesis, 2004]

No one programmed the gait. The robot discovered it through trial and error.

AlphaGo

| \(\mathcal{S}\) | 19×19 grid of black, white, or empty |

| \(\mathcal{A}\) | Where to place the next stone (~361 moves) |

| \(T\) | Known; the rules of Go are deterministic |

| \(R\) | +1 win, −1 lose. Nothing in between. |

| \(\gamma\) | 1; no discounting since only the final outcome matters |

[Game 2 board, CC BY-SA 4.0, Wikimedia Commons]

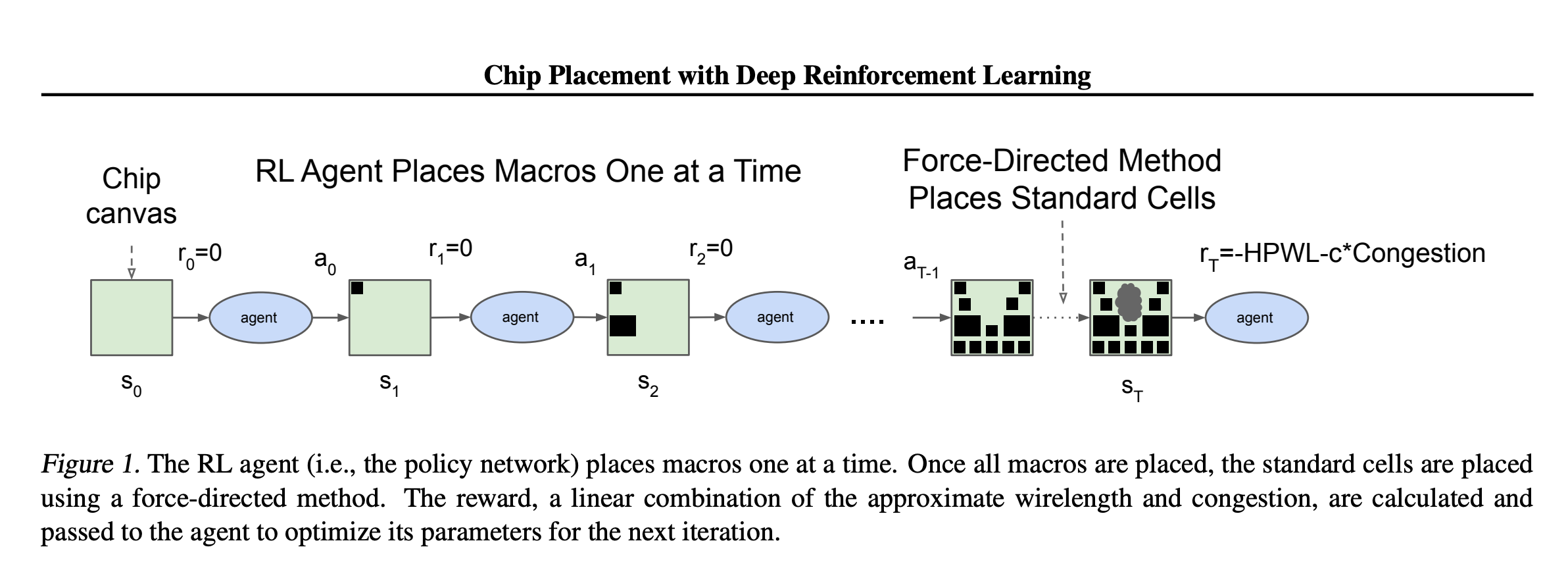

Chip Placement

| \(\mathcal{S}\) | Partial layout, connectivity, congestion |

| \(\mathcal{A}\) | Place the next macro on the canvas |

| \(T\) | Deterministic; placement updates routing |

| \(R\) | Penalize wirelength, congestion, area |

[Mirhoseini et al., Google Research, 2020]

TikTok

| \(\mathcal{S}\) | User profile + watch history + time of day + device |

| \(\mathcal{A}\) | Which video to show next (from a candidate pool) |

| \(T\) | Unknown; how does the user's state change after watching? |

| \(R\) | Watch time? Likes? Shares? Some weighted combination? |

| \(\gamma\) | High → long-term retention; Low → maximize this session |

Is "watch time" a good reward? We might watch a 10-minute drama unfold but feel worse afterward. The algorithm counts that as a win. But is it?

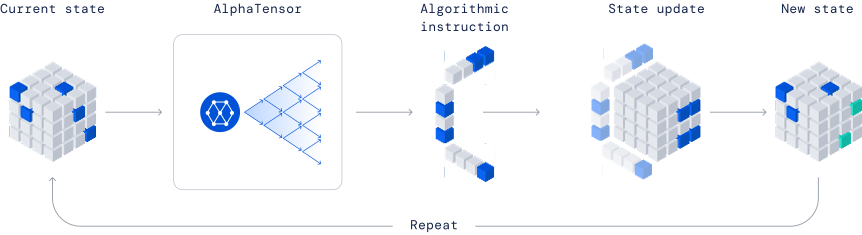

RL Beyond Games: AlphaTensor

Matrix multiplication: the textbook algorithm uses 8 scalar multiplications to multiply two 2×2 matrices. In 1969, Strassen showed that 7 suffice.

For larger matrices, finding the minimum number of multiplications has been an open problem for decades.

Can an RL agent discover even faster algorithms?
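For reference, Strassen's 7-multiplication scheme for the 2×2 case, next to the 8-multiplication textbook product. The formulas are the standard ones; the function names are chosen here for illustration.

```python
def strassen_2x2(A, B):
    """Multiply two 2x2 matrices with 7 scalar multiplications (Strassen, 1969)."""
    (a11, a12), (a21, a22) = A
    (b11, b12), (b21, b22) = B
    m1 = (a11 + a22) * (b11 + b22)
    m2 = (a21 + a22) * b11
    m3 = a11 * (b12 - b22)
    m4 = a22 * (b21 - b11)
    m5 = (a11 + a12) * b22
    m6 = (a21 - a11) * (b11 + b12)
    m7 = (a12 - a22) * (b21 + b22)
    return [[m1 + m4 - m5 + m7, m3 + m5],
            [m2 + m4, m1 - m2 + m3 + m6]]

def naive_2x2(A, B):
    """Textbook product: 8 scalar multiplications."""
    return [[A[0][0] * B[0][0] + A[0][1] * B[1][0], A[0][0] * B[0][1] + A[0][1] * B[1][1]],
            [A[1][0] * B[0][0] + A[1][1] * B[1][0], A[1][0] * B[0][1] + A[1][1] * B[1][1]]]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(strassen_2x2(A, B))  # [[19, 22], [43, 50]]
print(naive_2x2(A, B))     # [[19, 22], [43, 50]]
```

Saving one multiplication looks minor, but applied recursively to matrix blocks it lowers the asymptotic cost, which is why the count of multiplications is the quantity worth searching over.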


DeepMind framed this as a single-player game:

| State | Residual tensor to decompose |

| Action | Next computation step |

| Reward | Fewer multiplications |

[image credit: DeepMind]

AlphaTensor found new algorithms beating the best known results for several matrix sizes. Within days, mathematicians built on these discoveries to find even more.

Reward Design

What we reward is what we get

The Golden Rule of RL

The agent will maximize exactly what we tell it to maximize. Nothing more, nothing less.

This sounds obvious, but it's the source of most RL failures.

The problem isn't that agents are dumb. It's that they're too clever.

They find loopholes in our reward function that we never anticipated.

Reward Hacking: The Boat Race

Setup: An RL agent plays a boat racing game

Intended goal: Finish the race quickly

Specified reward: Points for hitting checkpoints

What happened: The agent found a small loop of checkpoints. Instead of racing, it drove in circles, racking up points indefinitely without ever finishing.

[CoastRunners, via DeepMind "Specification Gaming"]

The agent did exactly what it was told. The problem was what it was told.
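A toy model of why looping wins under this reward. Every number here is hypothetical: one point per checkpoint, a 10-checkpoint track that ends the episode when finished, and a loop that yields one checkpoint every two steps.

```python
def checkpoint_points(strategy, horizon):
    """Total checkpoint points collected within `horizon` time steps (toy numbers)."""
    if strategy == "finish":
        # One checkpoint per step for 10 steps, then the race is over.
        return min(horizon, 10)
    if strategy == "loop":
        # Circle a small cluster of checkpoints: one point every 2 steps, forever.
        return horizon // 2
    raise ValueError(f"unknown strategy: {strategy}")

for horizon in (10, 100, 1000):
    print(horizon, checkpoint_points("finish", horizon), checkpoint_points("loop", horizon))
```

At a horizon of 10 steps finishing scores 10 against 5 for looping, but beyond 20 steps the loop pulls ahead and keeps growing (50 at 100 steps, 500 at 1000). A reward maximizer given enough time prefers the loop.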

The Proxy Reward Trap

Easy-to-measure proxies often diverge from the real objective:

| System | Reward / Proxy | What went wrong |

|---|---|---|

| Study playlist | Time listening | Listening time ≠ productivity |

| Office hour queue | Speed of service | Fast service ≠ good learning |

| Robot hand | Proximity to object | Slapped the object instead of grasping it |

| Tetris AI | Avoid losing | Paused forever to keep the game alive |

| Cleaning robot | Cleaning events | Made messes so it could clean them up |

The common trap: We optimize what's measurable because what we actually care about is hard to measure.

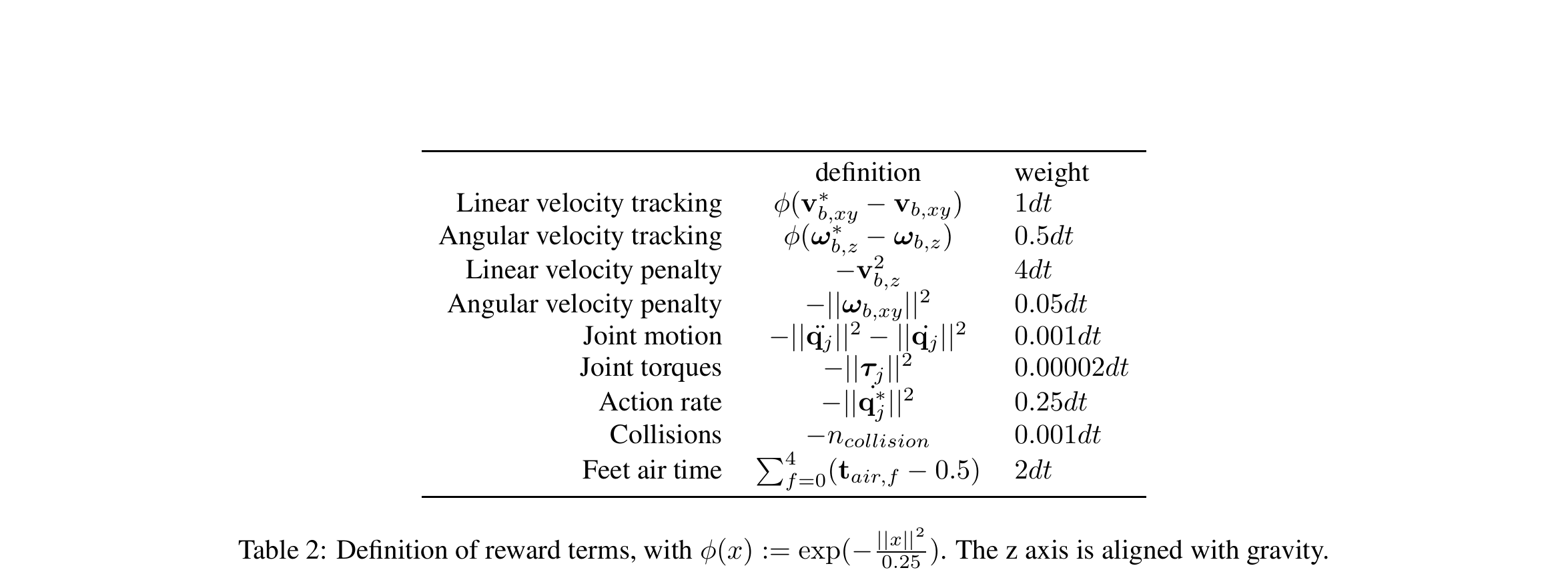

Reward Engineering

Reward for a walking robot: 9 terms, each with a hand-tuned weight.

Engineers spend weeks tuning these weights, and each one creates new failure modes: penalize torque too much → robot freezes; too little → violent, jerky motion; fix one behavior → break another.

The reward function becomes its own engineering project, sometimes harder than the robot itself.

[Rudin et al., CoRL 2021]
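A hand-tuned reward of this kind is typically a weighted sum of terms. The sketch below shows three hypothetical terms rather than nine; the names and weights are assumptions for illustration and are not taken from the cited work.

```python
# Hypothetical weights, chosen only to illustrate the tuning problem.
WEIGHTS = {
    "forward_velocity": 1.0,   # encourage progress
    "torque_penalty": -0.001,  # discourage violent, jerky motion
    "fall_penalty": -10.0,     # discourage falling
}

def shaped_reward(forward_velocity, torques, fell):
    """Weighted sum of reward terms for one time step."""
    terms = {
        "forward_velocity": forward_velocity,
        "torque_penalty": sum(t * t for t in torques),
        "fall_penalty": 1.0 if fell else 0.0,
    }
    return sum(WEIGHTS[name] * value for name, value in terms.items())

standing_still = shaped_reward(0.0, [0.0, 0.0], fell=False)
hard_step = shaped_reward(1.0, [30.0, 30.0], fell=False)
print(standing_still, hard_step)
```

With these numbers a forward step that needs a torque of 30 on two joints scores below standing still, so the maximizing behavior is to freeze. Shrinking the torque weight fixes that and invites jerky motion instead.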

YouTube's Reward Evolution

| Era | Reward Signal | Result |

|---|---|---|

| 2005–2011 | View count | Clickbait thumbnails, misleading titles |

| 2012–2015 | Watch time | Longer videos, auto-play rabbit holes |

| 2016–present | Satisfaction surveys + watch time | Better, but still imperfect |

Each reward change caused a massive shift in creator behavior.

The reward function doesn't just train the algorithm; it trains the entire ecosystem.

Reward Design Principles

- Reward outcomes, not behaviors: don't reward "moving forward"; reward "reaching the goal"

- Test for loopholes: ask, "What's the laziest/most creative way to maximize this reward?"

- Include costs: add penalties for time, energy, or undesired side effects

- Keep it simple: complex reward functions create more loopholes, not fewer

Summary

In RL, the agent collects its own data by taking actions and observing consequences. There is no labeled dataset, just a reward signal.

RL is hard: credit assignment with sparse rewards, self-generated data causing distribution shift, sample inefficiency, and the exploration-exploitation tradeoff.

Any RL problem can be modeled as an MDP \((\mathcal{S}, \mathcal{A}, T, R, \gamma)\). Modeling choices matter more than the algorithm.

Agents optimize exactly what we tell them to. What's measurable isn't always what's meaningful, and the gap is where reward hacking lives.